Post Syndicated from Esra Kayabali original https://aws.amazon.com/blogs/aws/aws-weekly-roundup-anthropics-claude-3-opus-in-amazon-bedrock-meta-llama-3-in-amazon-sagemaker-jumpstart-and-more-april-22-2024/

AWS Summits continue to rock the world, with events taking place in various locations around the globe. AWS Summit London (April 24) is the last one in April, and there are nine more in May, including AWS Summit Berlin (May 15–16), AWS Summit Los Angeles (May 22), and AWS Summit Dubai (May 29). Join us to connect, collaborate, and learn about AWS!

While you decide which summit to attend, let’s look at last week’s new announcements.

Last week’s launches

Last week was another busy one in the world of artificial intelligence (AI). Here are some launches that got my attention.

Anthropic’s Claude 3 Opus now available in Amazon Bedrock – After Claude 3 Sonnet and Claude 3 Haiku, two of the three state-of-the-art models in Anthropic’s Claude 3 family, Opus is now available in Amazon Bedrock. Claude 3 Opus is at the forefront of generative AI, demonstrating comprehension and fluency on complicated tasks at nearly human levels. Like the rest of the Claude 3 family, Opus can process images and return text outputs. Claude 3 Opus shows an estimated twofold gain in accuracy over Claude 2.1 on difficult open-ended questions, reducing the likelihood of faulty responses.
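To make the image-in, text-out flow concrete, here is a minimal Python sketch of what a request to Opus through the Bedrock runtime might look like. The model ID, the default Region, and the helper names `build_request` and `describe_image` are assumptions for illustration; check the Bedrock console for the model ID and Regions available to your account.

```python
import base64
import json

# Assumption: the Claude 3 Opus model ID shown in Amazon Bedrock; verify it for your account.
MODEL_ID = "anthropic.claude-3-opus-20240229-v1:0"


def build_request(prompt: str, image_bytes: bytes,
                  media_type: str = "image/png", max_tokens: int = 512) -> dict:
    """Build a Messages API request body containing one image and one text part."""
    return {
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "messages": [
            {
                "role": "user",
                "content": [
                    {
                        "type": "image",
                        "source": {
                            "type": "base64",
                            "media_type": media_type,
                            # Images are sent as base64-encoded text.
                            "data": base64.b64encode(image_bytes).decode("ascii"),
                        },
                    },
                    {"type": "text", "text": prompt},
                ],
            }
        ],
    }


def describe_image(prompt: str, image_bytes: bytes, region: str = "us-west-2") -> str:
    """Hypothetical helper: send the request to Bedrock and return the text reply."""
    import boto3  # imported lazily so build_request works without the AWS SDK installed

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.invoke_model(
        modelId=MODEL_ID,
        body=json.dumps(build_request(prompt, image_bytes)),
    )
    payload = json.loads(response["body"].read())
    return payload["content"][0]["text"]
```

Calling `describe_image("What is in this picture?", open("photo.png", "rb").read())` requires AWS credentials and model access to be enabled in Bedrock; `build_request` on its own runs anywhere and is handy for inspecting the payload.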

Meta Llama 3 now available in Amazon SageMaker JumpStart – Meta Llama 3 is now available in Amazon SageMaker JumpStart, a machine learning (ML) hub that can help you accelerate your ML journey. You can deploy and use Llama 3 foundation models (FMs) with a few steps in Amazon SageMaker Studio or programmatically through the Amazon SageMaker Python SDK. Llama 3 is available in two parameter sizes, 8B and 70B, and can be used to support a broad range of use cases, with improvements in reasoning, code generation, and instruction following. The model will be deployed in a secure AWS environment under your VPC controls, helping ensure data security.
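For the programmatic route, a deployment with the SageMaker Python SDK might look roughly like the sketch below. The model ID `meta-textgeneration-llama-3-8b`, the generation parameters, and the helper names are assumptions for illustration; deploying creates a billable endpoint and requires accepting the model's end-user license agreement.

```python
def build_payload(prompt: str, max_new_tokens: int = 128,
                  temperature: float = 0.6, top_p: float = 0.9) -> dict:
    """Build a text-generation payload (the parameter names are assumptions)."""
    return {
        "inputs": prompt,
        "parameters": {
            "max_new_tokens": max_new_tokens,
            "temperature": temperature,
            "top_p": top_p,
        },
    }


def deploy_and_ask(prompt: str, model_id: str = "meta-textgeneration-llama-3-8b"):
    """Hypothetical helper: deploy the model, send one prompt, then clean up."""
    # Imported lazily so build_payload works without the SageMaker SDK installed.
    from sagemaker.jumpstart.model import JumpStartModel

    model = JumpStartModel(model_id=model_id)
    # accept_eula=True confirms that you accept the model's license terms.
    predictor = model.deploy(accept_eula=True)
    try:
        return predictor.predict(build_payload(prompt))
    finally:
        # Delete the endpoint so it does not keep accruing charges.
        predictor.delete_endpoint()
```

Deleting the endpoint in a `finally` block is a deliberate choice for a quick experiment; for ongoing use you would keep the predictor around and reuse it.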

Built-in SQL extension with Amazon SageMaker Studio Notebooks – SageMaker Studio’s JupyterLab now includes a built-in SQL extension to discover, explore, and transform data from various sources using SQL and Python directly within the notebooks. You can now seamlessly connect to popular data services and easily browse and search databases, schemas, tables, and views. You can also preview data within the notebook interface. New features such as SQL command completion, code formatting assistance, and syntax highlighting improve developer productivity. To learn more, visit Explore data with ease: Use SQL and Text-to-SQL in Amazon SageMaker Studio JupyterLab notebooks and the SageMaker Developer Guide.

AWS Split Cost Allocation Data for Amazon EKS – You can now receive granular cost visibility for Amazon Elastic Kubernetes Service (Amazon EKS) in the AWS Cost and Usage Reports (CUR) to analyze, optimize, and charge back cost and usage for your Kubernetes applications. Costs are allocated based on how Kubernetes applications consume shared Amazon EC2 CPU and memory resources, and you can aggregate them by cluster, namespace, and other Kubernetes primitives to attribute them to individual business units or teams. These cost details will be accessible in the CUR 24 hours after opt-in. You can use the Containers Cost Allocation dashboard to visualize the costs in Amazon QuickSight and the CUR query library to query the costs using Amazon Athena.
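To illustrate the idea of splitting a shared instance's cost by CPU and memory consumption, here is a toy Python sketch. It is not the formula AWS uses: the equal weighting of CPU and memory, the function name, and the sample numbers are all assumptions chosen to keep the arithmetic easy to follow.

```python
from collections import defaultdict


def split_instance_cost(instance_cost: float, instance_vcpu: float,
                        instance_mem_gib: float, pods: list,
                        cpu_weight: float = 0.5):
    """Split one instance's cost across namespaces by CPU and memory share.

    Assumption: cpu_weight is the fraction of the instance cost attributed
    to CPU; the remainder is attributed to memory.
    Returns (cost_per_namespace, unallocated_cost).
    """
    cpu_cost = instance_cost * cpu_weight
    mem_cost = instance_cost - cpu_cost
    per_namespace = defaultdict(float)
    for pod in pods:
        share = (cpu_cost * pod["vcpu"] / instance_vcpu
                 + mem_cost * pod["mem_gib"] / instance_mem_gib)
        per_namespace[pod["namespace"]] += share
    # Capacity no pod claimed is reported separately as unallocated cost.
    unallocated = instance_cost - sum(per_namespace.values())
    return dict(per_namespace), unallocated


# Hypothetical example: a 4 vCPU, 16 GiB instance costing 10.00 for the period.
pods = [
    {"namespace": "web", "vcpu": 1, "mem_gib": 4},
    {"namespace": "batch", "vcpu": 2, "mem_gib": 4},
]
costs, unallocated = split_instance_cost(10.0, 4, 16, pods)
print(costs, unallocated)
```

With these sample numbers, `web` is charged 2.50, `batch` 3.75, and the remaining 3.75 is unallocated capacity that a chargeback model would need to distribute or absorb.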

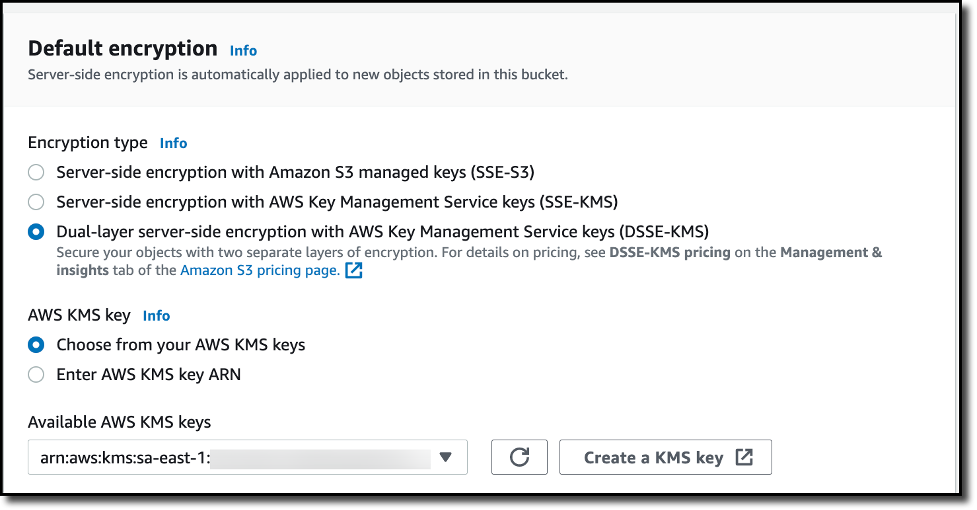

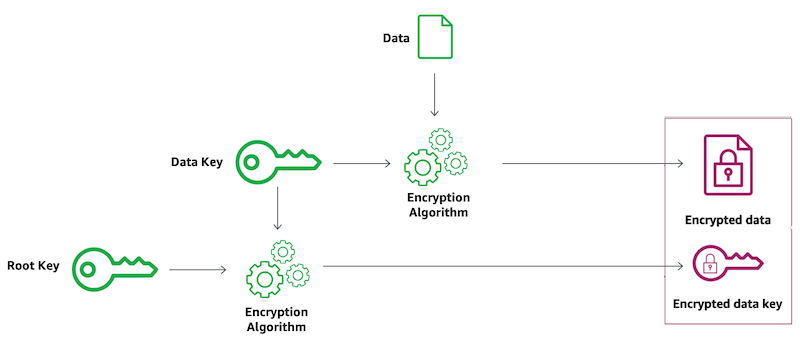

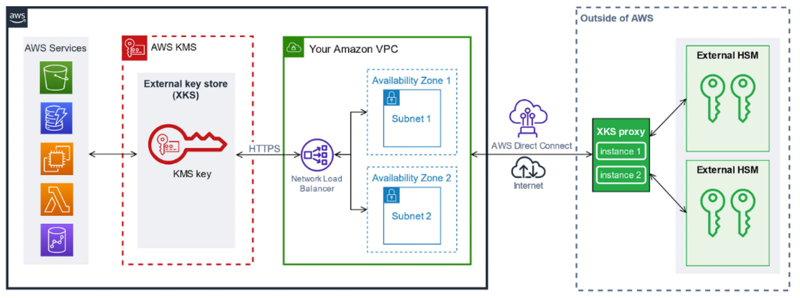

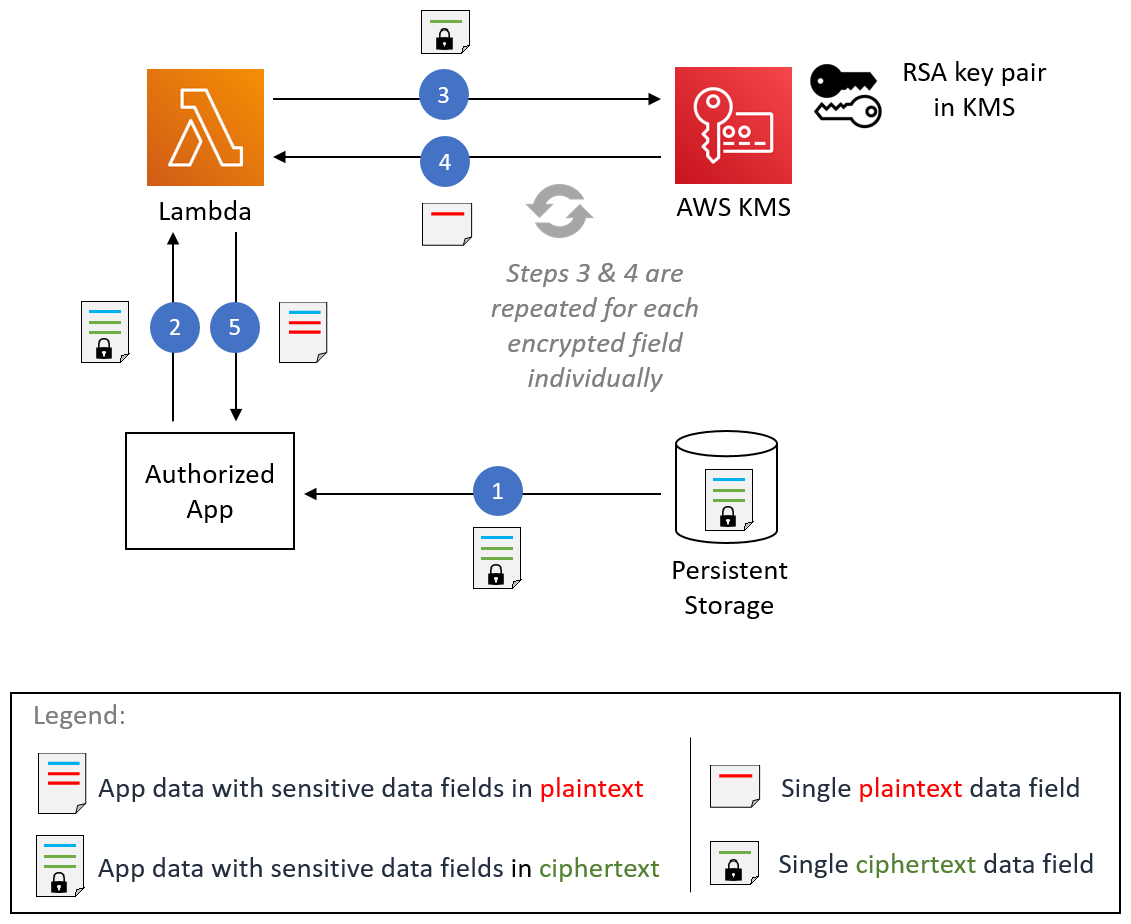

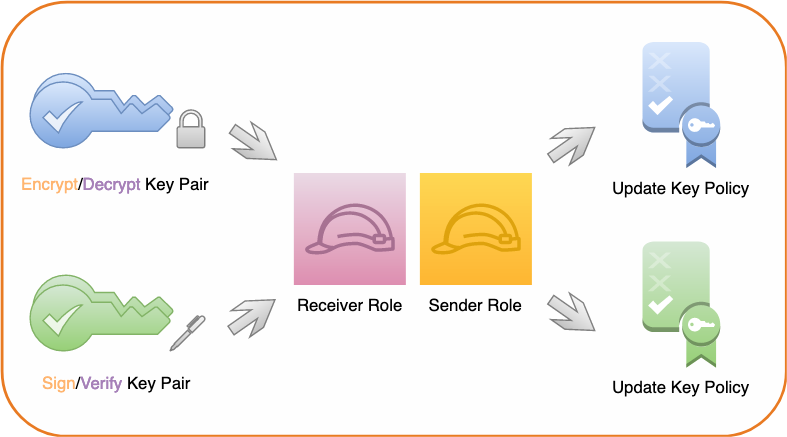

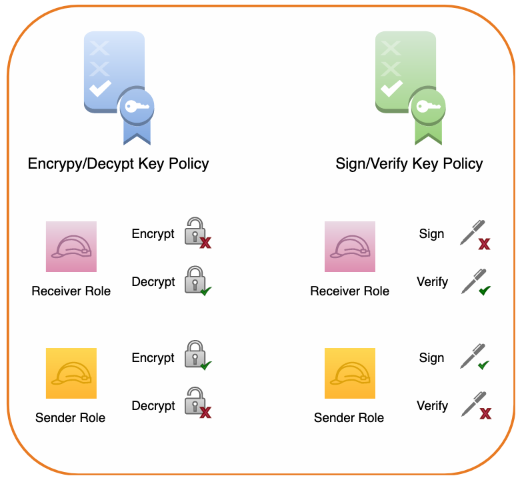

AWS KMS automatic key rotation enhancements – AWS Key Management Service (AWS KMS) introduces faster options for automatic symmetric key rotation. You can now customize the rotation frequency to any period between 90 days and 7 years, invoke key rotation on demand for customer-managed AWS KMS keys, and view the rotation history for any rotated AWS KMS key. There is a nice post on the Security Blog you can visit to learn more about this feature, including a little bit of history about cryptography.
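The three capabilities above map onto three AWS KMS API operations. The following Python sketch shows how they might be combined with boto3; the helper names are hypothetical, and the 2,560-day upper bound is the API's limit corresponding to roughly 7 years.

```python
MIN_ROTATION_DAYS = 90
MAX_ROTATION_DAYS = 2560  # API upper bound, roughly 7 years


def validate_rotation_period(days: int) -> int:
    """Return days unchanged if it is within the supported range, else raise."""
    if not MIN_ROTATION_DAYS <= days <= MAX_ROTATION_DAYS:
        raise ValueError(
            f"rotation period must be between {MIN_ROTATION_DAYS} "
            f"and {MAX_ROTATION_DAYS} days, got {days}"
        )
    return days


def configure_rotation(key_id: str, days: int, rotate_now: bool = False) -> list:
    """Hypothetical helper: set the rotation period, optionally rotate, return history."""
    import boto3  # imported lazily so the validation works without the AWS SDK installed

    kms = boto3.client("kms")
    # Enable automatic rotation with a custom period.
    kms.enable_key_rotation(
        KeyId=key_id,
        RotationPeriodInDays=validate_rotation_period(days),
    )
    if rotate_now:
        # Trigger an immediate, on-demand rotation of the key material.
        kms.rotate_key_on_demand(KeyId=key_id)
    # Each entry describes one completed rotation of the key.
    return kms.list_key_rotations(KeyId=key_id)["Rotations"]
```

Validating the period locally gives a clearer error message than waiting for the service to reject the request, which is the only reason the check is duplicated client-side here.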

Amazon Personalize automatic solution training – Amazon Personalize now offers automatic training for solutions. With automatic training, you can set a cadence for your Amazon Personalize solutions to automatically retrain using the latest data from your dataset group. This process creates a newly trained machine learning (ML) model, also known as a solution version, and maintains the relevance of Amazon Personalize recommendations for end users. Automatic training mitigates model drift and makes sure recommendations align with users’ evolving behaviors and preferences. With Amazon Personalize, you can personalize your website, app, ads, emails, and more, using the same machine learning technology used by Amazon, without requiring any prior ML experience. To get started with Amazon Personalize, visit our documentation.
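As a rough illustration of setting a training cadence, the Python sketch below assembles arguments for the Amazon Personalize `create_solution` operation with automatic training turned on. The helper name, the 7-day default, and the placeholder ARNs are assumptions; the 1 to 30 day range reflects my reading of the API's scheduling limits and is worth confirming in the documentation.

```python
def auto_training_solution_args(name: str, dataset_group_arn: str,
                                recipe_arn: str, every_days: int = 7) -> dict:
    """Build create_solution arguments that retrain on a fixed cadence."""
    if not 1 <= every_days <= 30:
        raise ValueError("training cadence must be between 1 and 30 days")
    unit = "day" if every_days == 1 else "days"
    return {
        "name": name,
        "datasetGroupArn": dataset_group_arn,
        "recipeArn": recipe_arn,
        "performAutoTraining": True,
        "solutionConfig": {
            "autoTrainingConfig": {
                # Rate expression controlling how often a new solution version is trained.
                "schedulingExpression": f"rate({every_days} {unit})",
            }
        },
    }


# Placeholder ARNs for illustration only.
args = auto_training_solution_args(
    name="weekly-retrained-solution",
    dataset_group_arn="arn:aws:personalize:us-east-1:123456789012:dataset-group/example",
    recipe_arn="arn:aws:personalize:::recipe/aws-user-personalization-v2",
)
print(args["solutionConfig"]["autoTrainingConfig"]["schedulingExpression"])
```

With AWS credentials configured, the dictionary can be passed along as `boto3.client("personalize").create_solution(**args)`.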

For a full list of AWS announcements, be sure to keep an eye on the What’s New at AWS page.

We launched existing services and instance types in additional Regions:

- Amazon RDS for Oracle extends support for X2iedn instances in Asia Pacific (Hyderabad, Jakarta, and Osaka), Europe (Milan and Paris), US West (N. California), AWS GovCloud (US-East), and AWS GovCloud (US-West). X2iedn instances are targeted at enterprise-class high-performance databases with high compute (up to 128 vCPUs), large memory (up to 4 TB), and storage throughput requirements (up to 256K IOPS), with a 32:1 ratio of memory to vCPU.

- Amazon MSK is now available in the Canada West (Calgary) Region. Amazon Managed Streaming for Apache Kafka (Amazon MSK) is a fully managed service for Apache Kafka and Kafka Connect that makes it easier for you to build and run applications that use Apache Kafka as a data store.

- Amazon Cognito is now available in the Europe (Spain) Region. Amazon Cognito makes it easy to add authentication, authorization, and user management to your web and mobile apps, supporting sign-in with social identity providers such as Apple, Facebook, Google, and Amazon, and with enterprise identity providers through standards such as SAML 2.0 and OpenID Connect.

- AWS Network Manager is now available in the Israel (Tel Aviv) Region. AWS Network Manager reduces the operational complexity of managing global networks across AWS and on-premises locations by providing a single global view of your private network.

- AWS Storage Gateway is now available in the Canada West (Calgary) Region. AWS Storage Gateway is a hybrid cloud storage service that provides on-premises applications access to virtually unlimited storage in the cloud.

- Amazon SQS announces support for FIFO dead-letter queue redrive in the AWS GovCloud (US) Regions. Dead-letter queue redrive is an enhanced capability to improve the dead-letter queue management experience for Amazon Simple Queue Service (Amazon SQS) customers.

- Amazon EC2 R6gd instances are now available in the Europe (Zurich) Region. R6gd instances are powered by AWS Graviton2 processors and are built on the AWS Nitro System. These instances offer up to 25 Gbps of network bandwidth, up to 19 Gbps of bandwidth to Amazon Elastic Block Store (Amazon EBS), up to 512 GiB of RAM, and up to 3.8 TB of NVMe SSD local instance storage.

- Amazon Simple Email Service is now available in the AWS GovCloud (US-East) Region. Amazon Simple Email Service (Amazon SES) is a scalable, cost-effective, and flexible cloud-based email service that allows you to send marketing, notification, and transactional emails from within any application. To learn more, visit the Amazon SES page.

- AWS Glue Studio Notebooks is now available in the Middle East (UAE), Asia Pacific (Hyderabad), Asia Pacific (Melbourne), Israel (Tel Aviv), Europe (Spain), and Europe (Zurich) Regions. AWS Glue Studio Notebooks provides interactive job authoring in AWS Glue, which helps simplify the process of developing data integration jobs. To learn more, visit Authoring code with AWS Glue Studio notebooks.

- Amazon S3 Access Grants is now available in the Middle East (UAE), Asia Pacific (Melbourne), Asia Pacific (Hyderabad), and Europe (Spain) Regions. Amazon Simple Storage Service (Amazon S3) Access Grants map identities in directories such as Microsoft Entra ID, or AWS Identity and Access Management (IAM) principals, to datasets in S3. This helps you manage data permissions at scale by automatically granting S3 access to end users based on their corporate identity. To learn more, visit the Amazon S3 Access Grants page.

Other AWS news

Here is some additional news that you might find interesting:

The PartyRock Generative AI Hackathon winners – The PartyRock Generative AI Hackathon concluded with over 7,650 registrants submitting 1,200 projects across four challenge categories, featuring top winners like Parable Rhythm – The Interactive Crime Thriller, Faith – Manga Creation Tools, and Arghhhh! Zombie. Participants showed remarkable creativity and technical prowess, with prizes totaling $60,000 in AWS credits.

I tried the Faith – Manga Creation Tools app using my daughter Arya’s made-up stories and ideas, and the result was quite impressive.

Visit Jeff Barr’s post to learn more about how to try the apps for yourself.

AWS open source news and updates – My colleague Ricardo writes about open source projects, tools, and events from the AWS Community. Check out Ricardo’s page for the latest updates.

Upcoming AWS events

Check your calendars and sign up for upcoming AWS events:

AWS Summits – Join free online and in-person events that bring the cloud computing community together to connect, collaborate, and learn about AWS. Register in your nearest city: Singapore (May 7), Seoul (May 16–17), Hong Kong (May 22), Milan (May 23), Stockholm (June 4), and Madrid (June 5).

AWS re:Inforce – Explore cloud security in the age of generative AI at AWS re:Inforce, June 10–12 in Pennsylvania. The event offers 2.5 days of immersive cloud security learning designed to help drive your business initiatives.

AWS Community Days – Join community-led conferences that feature technical discussions, workshops, and hands-on labs led by expert AWS users and industry leaders from around the world: Turkey (May 18), Midwest | Columbus (June 13), Sri Lanka (June 27), Cameroon (July 13), Nigeria (August 24), and New York (August 28).

You can browse all upcoming AWS-led in-person and virtual events and developer-focused events here.

That’s all for this week. Check back next Monday for another Weekly Roundup!

This post is part of our Weekly Roundup series. Check back each week for a quick roundup of interesting news and announcements from AWS!