Post Syndicated from Magesh Dhanasekaran original https://aws.amazon.com/blogs/security/strengthen-the-devops-pipeline-and-protect-data-with-aws-secrets-manager-aws-kms-and-aws-certificate-manager/

In this blog post, we delve into using Amazon Web Services (AWS) data protection services such as Amazon Secrets Manager, AWS Key Management Service (AWS KMS), and AWS Certificate Manager (ACM) to help fortify both the security of the pipeline and security in the pipeline. We explore how these services contribute to the overall security of the DevOps pipeline infrastructure while enabling seamless integration of data protection measures. We also provide practical insights by demonstrating the implementation of these services within a DevOps pipeline for a three-tier WordPress web application deployed using Amazon Elastic Kubernetes Service (Amazon EKS).

DevOps pipelines involve the continuous integration, delivery, and deployment of cloud infrastructure and applications, which can store and process sensitive data. The increasing adoption of DevOps pipelines for cloud infrastructure and application deployments has made the protection of sensitive data a critical priority for organizations.

Some examples of the types of sensitive data that must be protected in DevOps pipelines are:

- Credentials: Usernames and passwords used to access cloud resources, databases, and applications.

- Configuration files: Files that contain settings and configuration data for applications, databases, and other systems.

- Certificates: TLS certificates used to encrypt communication between systems.

- Secrets: Any other sensitive data used to access or authenticate with cloud resources, such as private keys, security tokens, or passwords for third-party services.

Unintended access or data disclosure can have serious consequences such as loss of productivity, legal liabilities, financial losses, and reputational damage. It’s crucial to prioritize data protection to help mitigate these risks effectively.

The concept of security of the pipeline encompasses implementing security measures to protect the entire DevOps pipeline—the infrastructure, tools, and processes—from potential security issues. While the concept of security in the pipeline focuses on incorporating security practices and controls directly into the development and deployment processes within the pipeline.

By using Secrets Manager, AWS KMS, and ACM, you can strengthen the security of your DevOps pipelines, safeguard sensitive data, and facilitate secure and compliant application deployments. Our goal is to equip you with the knowledge and tools to establish a secure DevOps environment, providing the integrity of your pipeline infrastructure and protecting your organization’s sensitive data throughout the software delivery process.

Sample application architecture overview

WordPress was chosen as the use case for this DevOps pipeline implementation due to its popularity, open source nature, containerization support, and integration with AWS services. The sample architecture for the WordPress application in the AWS cloud uses the following services:

- Amazon Route 53: A DNS web service that routes traffic to the correct AWS resource.

- Amazon CloudFront: A global content delivery network (CDN) service that securely delivers data and videos to users with low latency and high transfer speeds.

- AWS WAF: A web application firewall that protects web applications from common web exploits.

- AWS Certificate Manager (ACM): A service that provides SSL/TLS certificates to enable secure connections.

- Application Load Balancer (ALB): Routes traffic to the appropriate container in Amazon EKS.

- Amazon Elastic Kubernetes Service (Amazon EKS): A scalable and highly available Kubernetes cluster to deploy containerized applications.

- Amazon Relational Database Service (Amazon RDS): A managed relational database service that provides scalable and secure databases for applications.

- AWS Key Management Service (AWS KMS): A key management service that allows you to create and manage the encryption keys used to protect your data at rest.

- AWS Secrets Manager: A service that provides the ability to rotate, manage, and retrieve database credentials.

- AWS CodePipeline: A fully managed continuous delivery service that helps to automate release pipelines for fast and reliable application and infrastructure updates.

- AWS CodeBuild: A fully managed continuous integration service that compiles source code, runs tests, and produces ready-to-deploy software packages.

- AWS CodeCommit: A secure, highly scalable, fully managed source-control service that hosts private Git repositories.

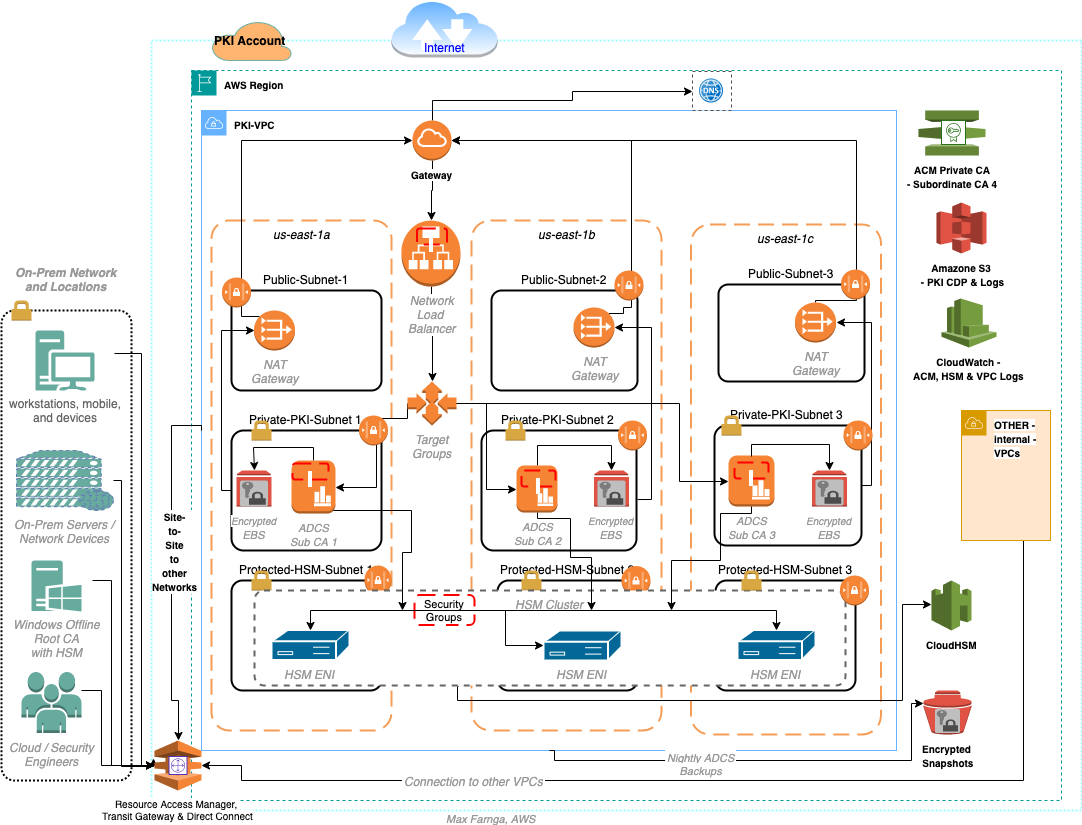

Before we explore the specifics of the sample application architecture in Figure 1, it’s important to clarify a few aspects of the diagram. While it displays only a single Availability Zone (AZ), please note that the application and infrastructure can be developed to be highly available across multiple AZs to improve fault tolerance. This means that even if one AZ is unavailable, the application remains operational in other AZs, providing uninterrupted service to users.

Figure 1: Sample application architecture

The flow of the data protection services in the post and depicted in Figure 1 can be summarized as follows:

First, we discuss securing your pipeline. You can use Secrets Manager to securely store sensitive information such as Amazon RDS credentials. We show you how to retrieve these secrets from Secrets Manager in your DevOps pipeline to access the database. By using Secrets Manager, you can protect critical credentials and help prevent unauthorized access, strengthening the security of your pipeline.

Next, we cover data encryption. With AWS KMS, you can encrypt sensitive data at rest. We explain how to encrypt data stored in Amazon RDS using AWS KMS encryption, making sure that it remains secure and protected from unauthorized access. By implementing KMS encryption, you add an extra layer of protection to your data and bolster the overall security of your pipeline.

Lastly, we discuss securing connections (data in transit) in your WordPress application. ACM is used to manage SSL/TLS certificates. We show you how to provision and manage SSL/TLS certificates using ACM and configure your Amazon EKS cluster to use these certificates for secure communication between users and the WordPress application. By using ACM, you can establish secure communication channels, providing data privacy and enhancing the security of your pipeline.

Note: The code samples in this post are only to demonstrate the key concepts. The actual code can be found on GitHub.

Securing sensitive data with Secrets Manager

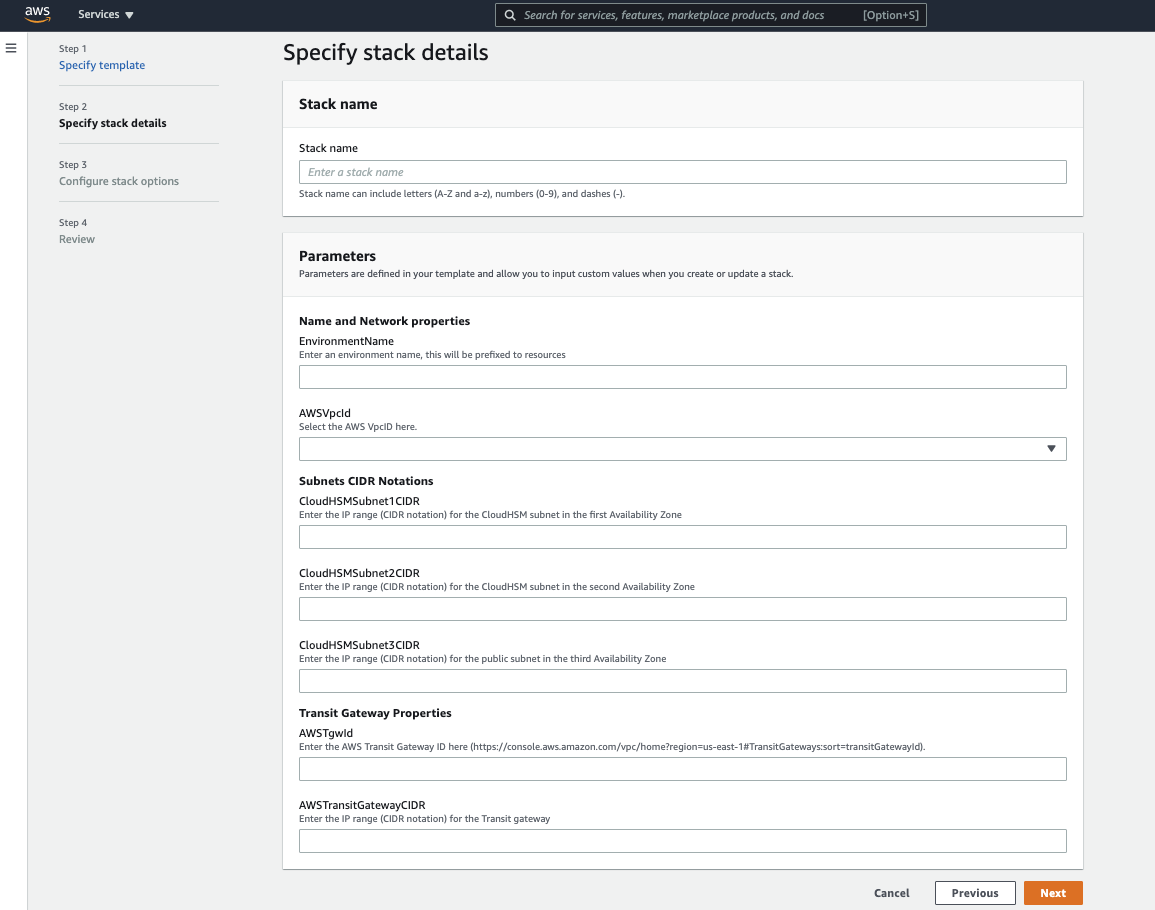

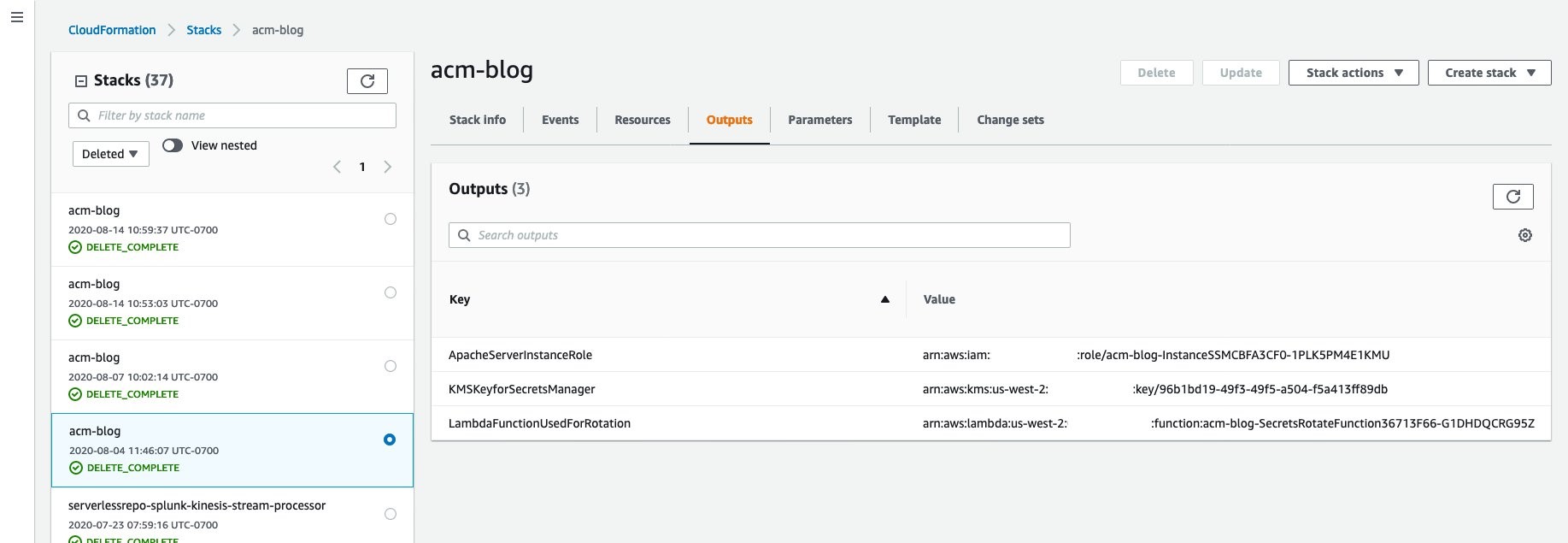

In this sample application architecture, Secrets Manager is used to store and manage sensitive data. The AWS CloudFormation template provided sets up an Amazon RDS for MySQL instance and securely sets the master user password by retrieving it from Secrets Manager using KMS encryption.

Here’s how Secrets Manager is implemented in this sample application architecture:

- Creating a Secrets Manager secret.

- Create a Secrets Manager secret that includes the Amazon RDS database credentials using CloudFormation.

- The secret is encrypted using an AWS KMS customer managed key.

- Sample code:

The ManageMasterUserPassword: true line in the CloudFormation template indicates that the stack will manage the master user password for the Amazon RDS instance. To securely retrieve the password for the master user, the CloudFormation template uses the MasterUserSecret parameter, which retrieves the password from Secrets Manager. The KmsKeyId: !Ref RDSMySqlSecretEncryption line specifies the KMS key ID that will be used to encrypt the secret in Secrets Manager.

By setting the MasterUserSecret parameter to retrieve the password from Secrets Manager, the CloudFormation stack can securely retrieve and set the master user password for the Amazon RDS MySQL instance without exposing it in plain text. Additionally, specifying the KMS key ID for encryption adds another layer of security to the secret stored in Secrets Manager.

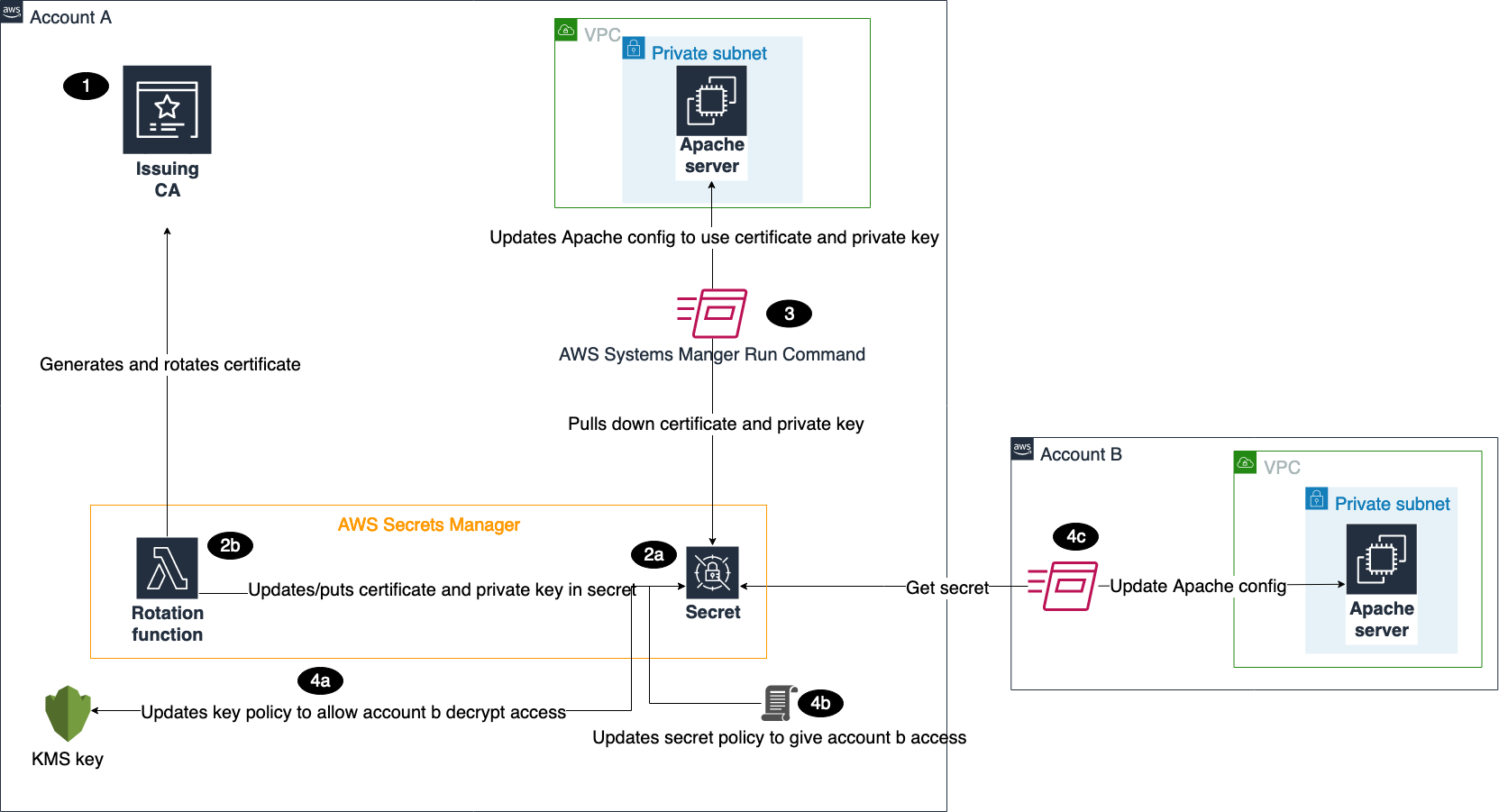

- Retrieving secrets from Secrets Manager.

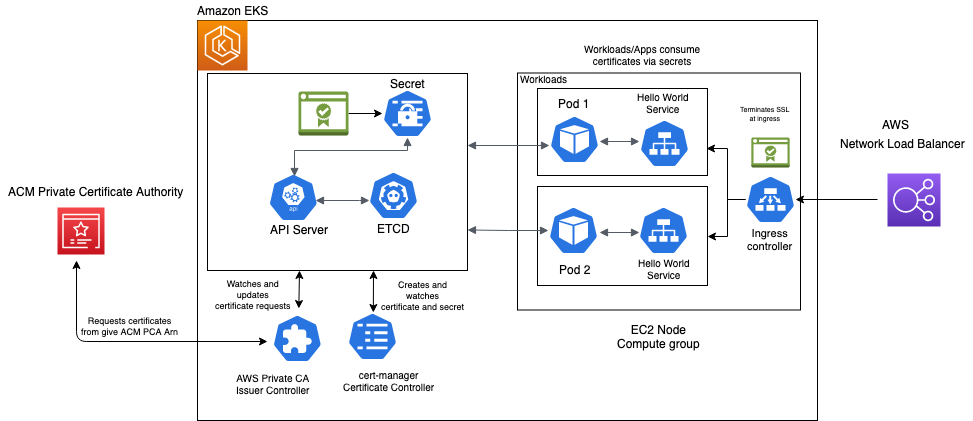

- The secrets store CSI driver is a Kubernetes-native driver that provides a common interface for Secrets Store integration with Amazon EKS. The secrets-store-csi-driver-provider-aws is a specific provider that provides integration with the Secrets Manager.

- To set up Amazon EKS, the first step is to create a SecretProviderClass, which specifies the secret ID of the Amazon RDS database. This SecretProviderClass is then used in the Kubernetes deployment object to deploy the WordPress application and dynamically retrieve the secrets from the secret manager during deployment. This process is entirely dynamic and verifies that no secrets are recorded anywhere. The SecretProviderClass is created on a specific app namespace, such as the wp namespace.

- Sample code:

When using Secrets manager, be aware of the following best practices for managing and securing Secrets Manager secrets:

- Use AWS Identity and Access Management (IAM) identity policies to define who can perform specific actions on Secrets Manager secrets, such as reading, writing, or deleting them.

- Secrets Manager resource policies can be used to manage access to secrets at a more granular level. This includes defining who has access to specific secrets based on attributes such as IP address, time of day, or authentication status.

- Encrypt the Secrets Manager secret using an AWS KMS key.

- Using CloudFormation templates to automate the creation and management of Secrets Manager secrets including rotation.

- Use AWS CloudTrail to monitor access and changes to Secrets Manager secrets.

- Use CloudFormation hooks to validate the Secrets Manager secret before and after deployment. If the secret fails validation, the deployment is rolled back.

Encrypting data with AWS KMS

Data encryption involves converting sensitive information into a coded form that can only be accessed with the appropriate decryption key. By implementing encryption measures throughout your pipeline, you make sure that even if unauthorized individuals gain access to the data, they won’t be able to understand its contents.

Here’s how data at rest encryption using AWS KMS is implemented in this sample application architecture:

- Amazon RDS secret encryption

- Encrypting secrets: An AWS KMS customer managed key is used to encrypt the secrets stored in Secrets Manager to ensure their confidentiality during the DevOps build process.

- Sample code:

- Amazon RDS data encryption

- Enable encryption for an Amazon RDS instance using CloudFormation. Specify the KMS key ARN in the CloudFormation stack and RDS will use the specified KMS key to encrypt data at rest.

- Sample code:

- Kubernetes Pods storage

- Use encrypted Amazon Elastic Block Store (Amazon EBS) volumes to store configuration data. Create a managed encrypted Amazon EBS volume using the following code snippet, and then deploy a Kubernetes pod with the persistent volume claim (PVC) mounted as a volume.

- Sample code:

- Amazon ECR

- To secure data at rest in Amazon Elastic Container Registry (Amazon ECR), enable encryption at rest for Amazon ECR repositories using the AWS Management Console or AWS Command Line Interface (AWS CLI). ECR uses AWS KMS to encrypt the data at rest.

- Create a KMS key for Amazon ECR and use that key to encrypt the data at rest.

- Automate the creation of encrypted ECR repositories and enable encryption at rest using a DevOps pipeline, use CodePipeline to automate the deployment of the CloudFormation stack.

- Define the creation of encrypted Amazon ECR repositories as part of the pipeline.

- Sample code:

AWS best practices for managing encryption keys in an AWS environment

To effectively manage encryption keys and verify the security of data at rest in an AWS environment, we recommend the following best practices:

- Use separate AWS KMS customer managed KMS keys for data classifications to provide better control and management of keys.

- Enforce separation of duties by assigning different roles and responsibilities for key management tasks, such as creating and rotating keys, setting key policies, or granting permissions. By segregating key management duties, you can reduce the risk of accidental or intentional key compromise and improve overall security.

- Use CloudTrail to monitor AWS KMS API activity and detect potential security incidents.

- Rotate KMS keys as required by your regulatory requirements.

- Use CloudFormation hooks to validate KMS key policies to verify that they align with organizational and regulatory requirements.

Following these best practices and implementing encryption at rest for different services such as Amazon RDS, Kubernetes Pods storage, and Amazon ECR, will help ensure that data is encrypted at rest.

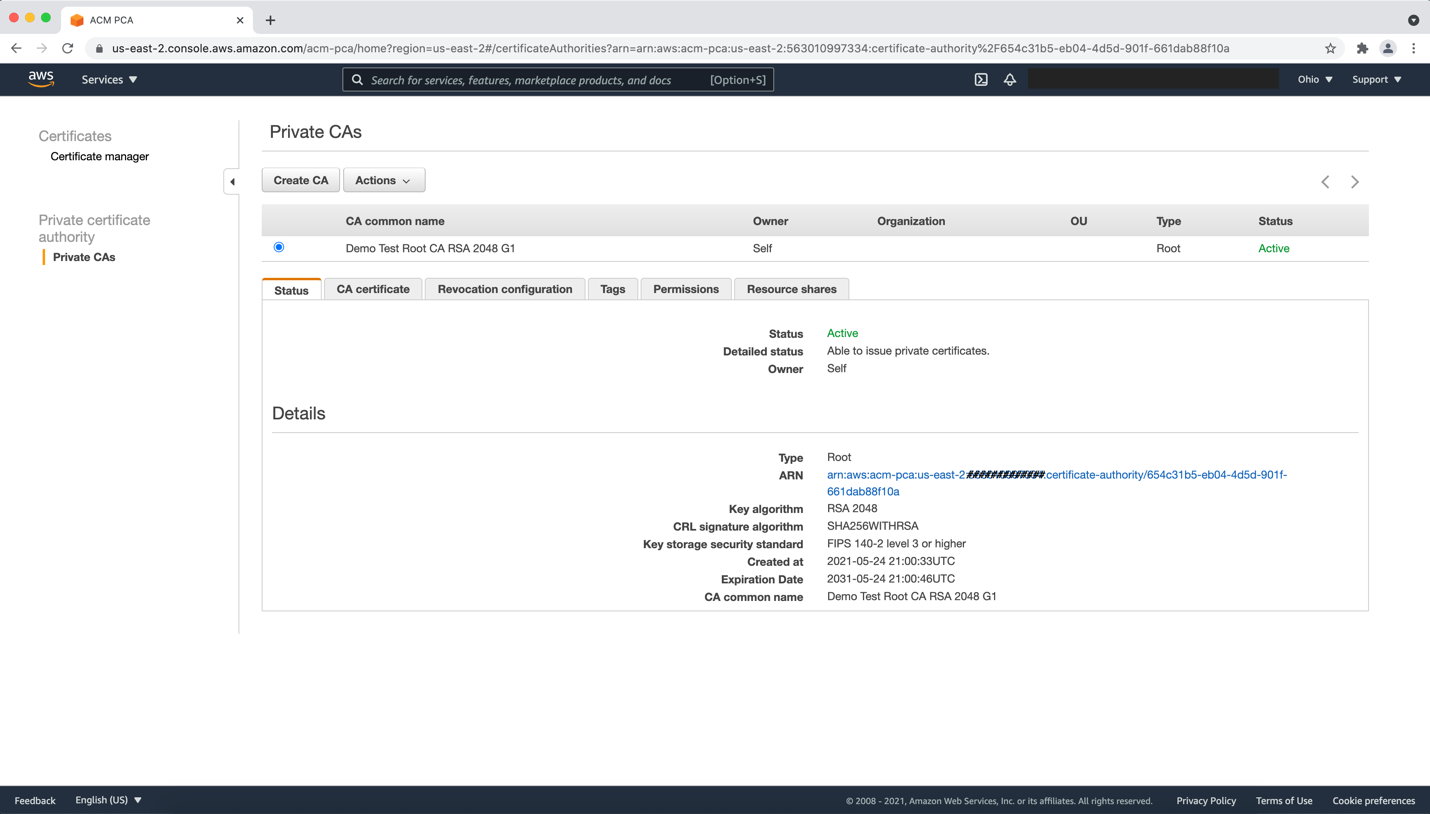

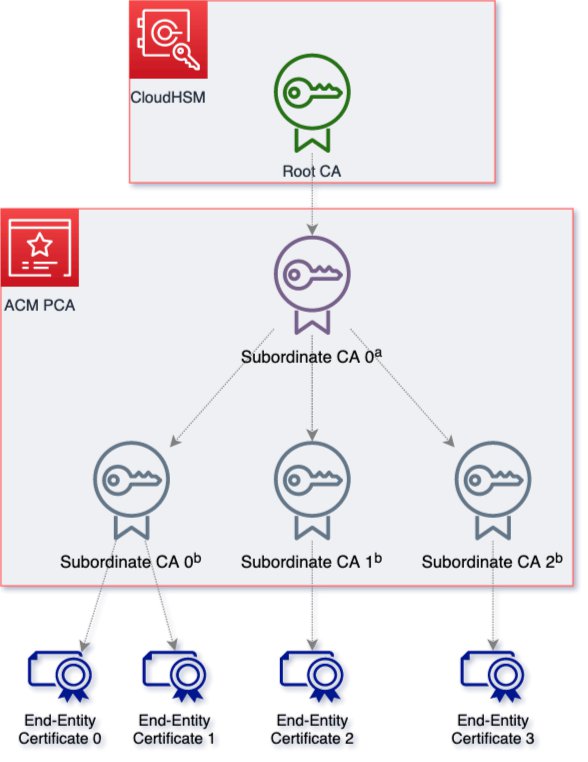

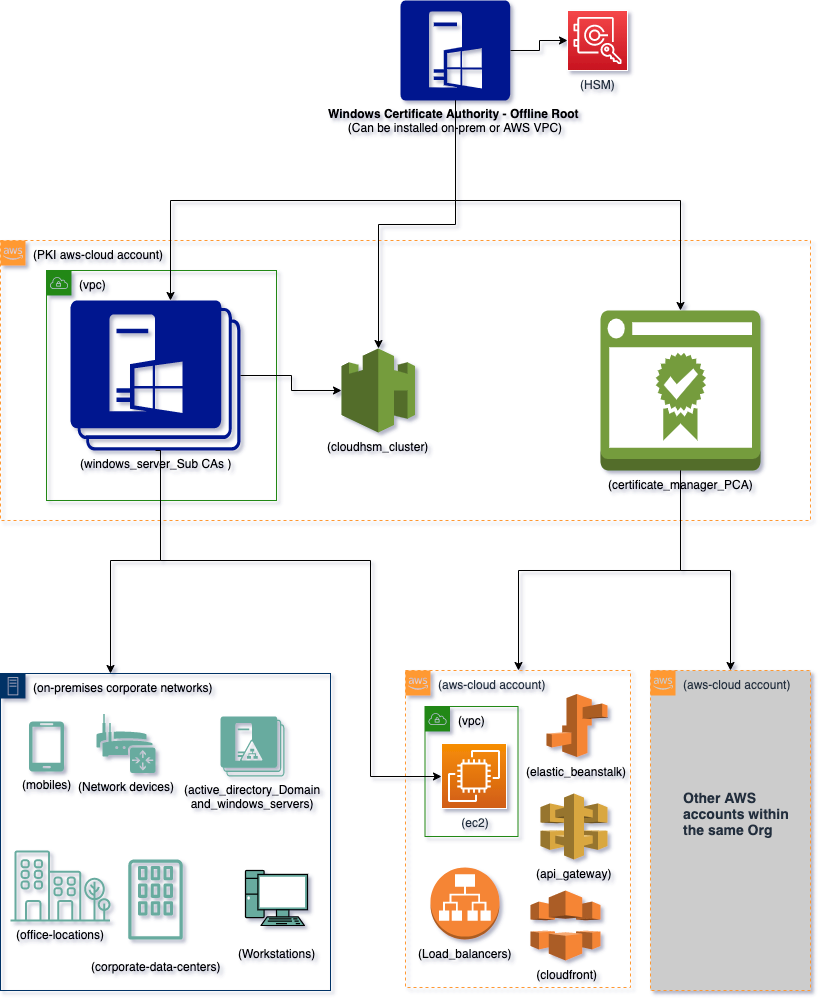

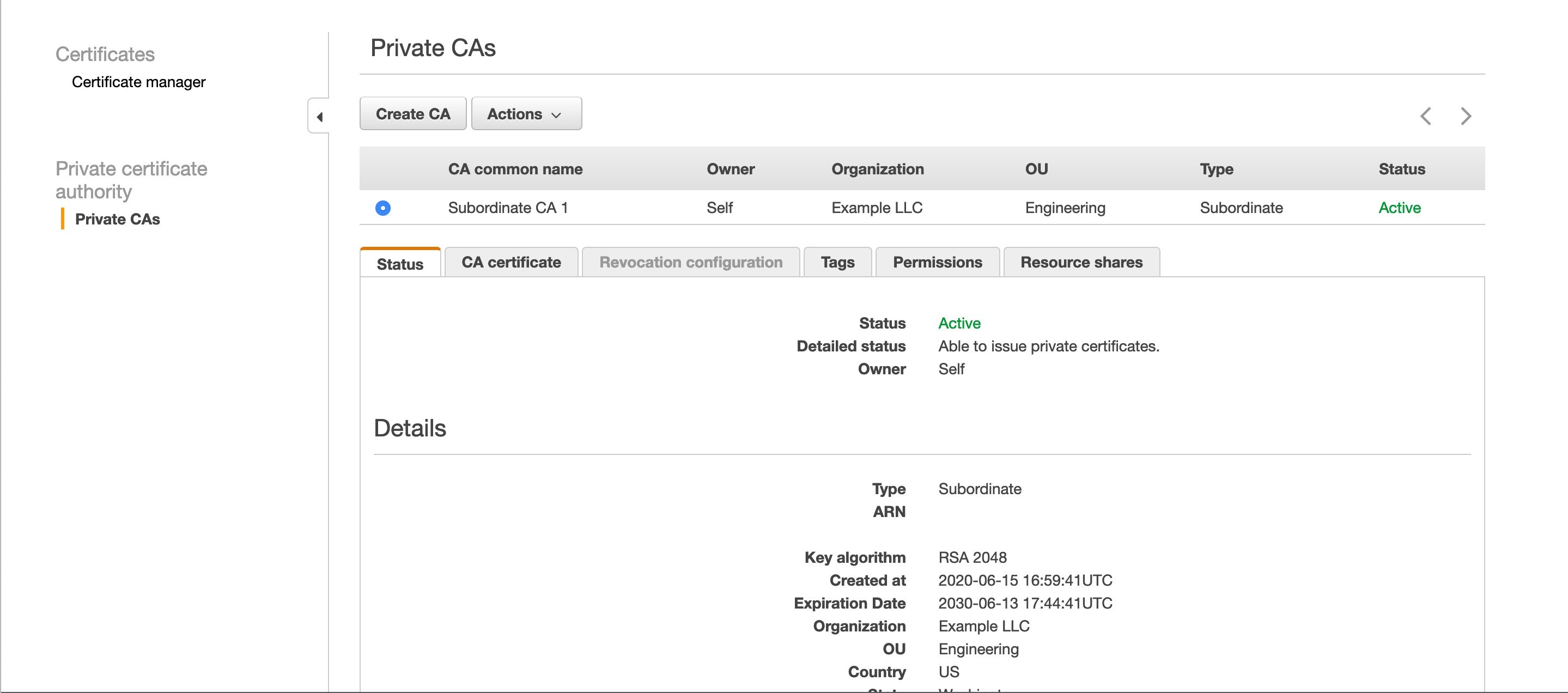

Securing communication with ACM

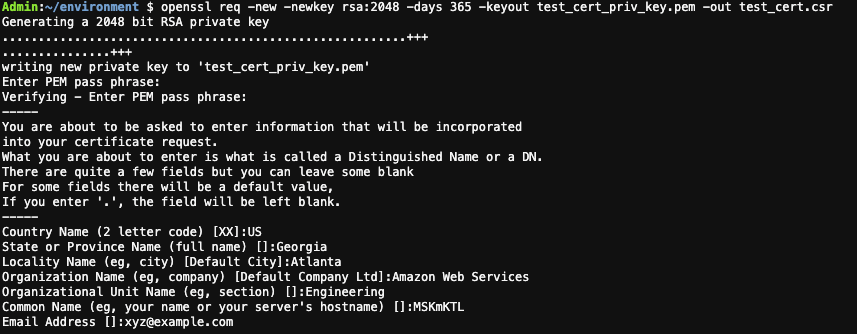

Secure communication is a critical requirement for modern environments and implementing it in a DevOps pipeline is crucial for verifying that the infrastructure is secure, consistent, and repeatable across different environments. In this WordPress application running on Amazon EKS, ACM is used to secure communication end-to-end. Here’s how to achieve this:

- Provision TLS certificates with ACM using a DevOps pipeline

- To provision TLS certificates with ACM in a DevOps pipeline, automate the creation and deployment of TLS certificates using ACM. Use AWS CloudFormation templates to create the certificates and deploy them as part of infrastructure as code. This verifies that the certificates are created and deployed consistently and securely across multiple environments.

- Sample code:

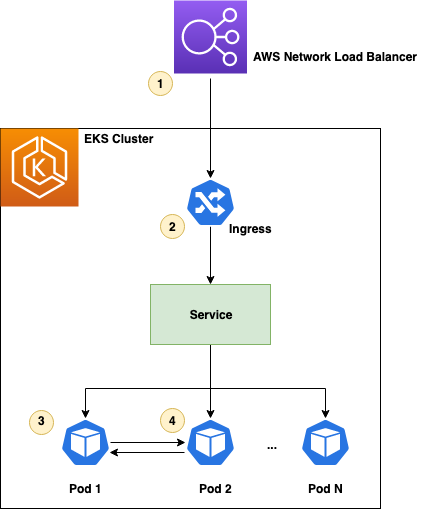

- Provisioning of ALB and integration of TLS certificate using AWS ALB Ingress Controller for Kubernetes

- Use a DevOps pipeline to create and configure the TLS certificates and ALB. This verifies that the infrastructure is created consistently and securely across multiple environments.

- Sample code:

- CloudFront and ALB

- To secure communication between CloudFront and the ALB, verify that the traffic from the client to CloudFront and from CloudFront to the ALB is encrypted using the TLS certificate.

- Sample code:

- ALB to Kubernetes Pods

- To secure communication between the ALB and the Kubernetes Pods, use the Kubernetes ingress resource to terminate SSL/TLS connections at the ALB. The ALB sends the PROTO metadata http connection header to the WordPress web server. The web server checks the incoming traffic type (http or https) and enables the HTTPS connection only hereafter. This verifies that pod responses are sent back to ALB only over HTTPS.

- Additionally, using the X-Forwarded-Proto header can help pass the original protocol information and help avoid issues with the $_SERVER[‘HTTPS’] variable in WordPress.

- Sample code:

- Kubernetes Pods to Amazon RDS

- To secure communication between the Kubernetes Pods in Amazon EKS and the Amazon RDS database, use SSL/TLS encryption on the database connection.

- Configure an Amazon RDS MySQL instance with enhanced security settings to verify that only TLS-encrypted connections are allowed to the database. This is achieved by creating a DB parameter group with a parameter called require_secure_transport set to ‘1‘. The WordPress configuration file is also updated to enable SSL/TLS communication with the MySQL database. Then enable the TLS flag on the MySQL client and the Amazon RDS public certificate is passed to ensure that the connection is encrypted using the TLS_AES_256_GCM_SHA384 protocol. The sample code that follows focuses on enhancing the security of the RDS MySQL instance by enforcing encrypted connections and configuring WordPress to use SSL/TLS for communication with the database.

- Sample code:

In this architecture, AWS WAF is enabled at CloudFront to protect the WordPress application from common web exploits. AWS WAF for CloudFront is recommended and use AWS managed WAF rules to verify that web applications are protected from common and the latest threats.

Here are some AWS best practices for securing communication with ACM:

- Use SSL/TLS certificates: Encrypt data in transit between clients and servers. ACM makes it simple to create, manage, and deploy SSL/TLS certificates across your infrastructure.

- Use ACM-issued certificates: This verifies that your certificates are trusted by major browsers and that they are regularly renewed and replaced as needed.

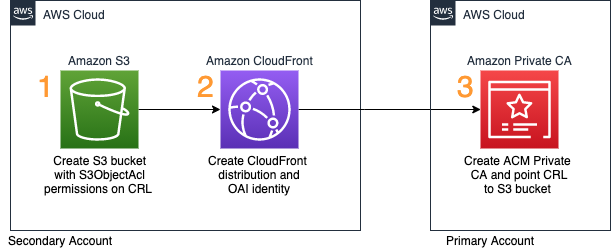

- Implement certificate revocation: Implement certificate revocation for SSL/TLS certificates that have been compromised or are no longer in use.

- Implement strict transport security (HSTS): This helps protect against protocol downgrade attacks and verifies that SSL/TLS is used consistently across sessions.

- Configure proper cipher suites: Configure your SSL/TLS connections to use only the strongest and most secure cipher suites.

Monitoring and auditing with CloudTrail

In this section, we discuss the significance of monitoring and auditing actions in your AWS account using CloudTrail. CloudTrail is a logging and tracking service that records the API activity in your AWS account, which is crucial for troubleshooting, compliance, and security purposes. Enabling CloudTrail in your AWS account and securely storing the logs in a durable location such as Amazon Simple Storage Service (Amazon S3) with encryption is highly recommended to help prevent unauthorized access. Monitoring and analyzing CloudTrail logs in real-time using CloudWatch Logs can help you quickly detect and respond to security incidents.

In a DevOps pipeline, you can use infrastructure-as-code tools such as CloudFormation, CodePipeline, and CodeBuild to create and manage CloudTrail consistently across different environments. You can create a CloudFormation stack with the CloudTrail configuration and use CodePipeline and CodeBuild to build and deploy the stack to different environments. CloudFormation hooks can validate the CloudTrail configuration to verify it aligns with your security requirements and policies.

It’s worth noting that the aspects discussed in the preceding paragraph might not apply if you’re using AWS Organizations and the CloudTrail Organization Trail feature. When using those services, the management of CloudTrail configurations across multiple accounts and environments is streamlined. This centralized approach simplifies the process of enforcing security policies and standards uniformly throughout the organization.

By following these best practices, you can effectively audit actions in your AWS environment, troubleshoot issues, and detect and respond to security incidents proactively.

Complete code for sample architecture for deployment

The complete code repository for the sample WordPress application architecture demonstrates how to implement data protection in a DevOps pipeline using various AWS services. The repository includes both infrastructure code and application code that covers all aspects of the sample architecture and implementation steps.

The infrastructure code consists of a set of CloudFormation templates that define the resources required to deploy the WordPress application in an AWS environment. This includes the Amazon Virtual Private Cloud (Amazon VPC), subnets, security groups, Amazon EKS cluster, Amazon RDS instance, AWS KMS key, and Secrets Manager secret. It also defines the necessary security configurations such as encryption at rest for the RDS instance and encryption in transit for the EKS cluster.

The application code is a sample WordPress application that is containerized using Docker and deployed to the Amazon EKS cluster. It shows how to use the Application Load Balancer (ALB) to route traffic to the appropriate container in the EKS cluster, and how to use the Amazon RDS instance to store the application data. The code also demonstrates how to use AWS KMS to encrypt and decrypt data in the application, and how to use Secrets Manager to store and retrieve secrets. Additionally, the code showcases the use of ACM to provision SSL/TLS certificates for secure communication between the CloudFront and the ALB, thereby ensuring data in transit is encrypted, which is critical for data protection in a DevOps pipeline.

Conclusion

Strengthening the security and compliance of your application in the cloud environment requires automating data protection measures in your DevOps pipeline. This involves using AWS services such as Secrets Manager, AWS KMS, ACM, and AWS CloudFormation, along with following best practices.

By automating data protection mechanisms with AWS CloudFormation, you can efficiently create a secure pipeline that is reproducible, controlled, and audited. This helps maintain a consistent and reliable infrastructure.

Monitoring and auditing your DevOps pipeline with AWS CloudTrail is crucial for maintaining compliance and security. It allows you to track and analyze API activity, detect any potential security incidents, and respond promptly.

By implementing these best practices and using data protection mechanisms, you can establish a secure pipeline in the AWS cloud environment. This enhances the overall security and compliance of your application, providing a reliable and protected environment for your deployments.

If you have feedback about this post, submit comments in the Comments section below. If you have questions about this post, contact AWS Support.

Want more AWS Security news? Follow us on Twitter.