Post Syndicated from Macey Neff original https://aws.amazon.com/blogs/compute/create-use-and-troubleshoot-launch-scripts-on-amazon-lightsail/

This blog post is written by Brian Graf, Senior Developer Advocate, Amazon Lightsail and Sophia Parafina, Senior Developer Advocate.

Amazon Lightsail is a virtual private server (VPS) for deploying both operating systems (OS) and pre-packaged applications, such as WordPress, Plesk, cPanel, PrestaShop, and more. When deploying these instances, you can run launch scripts that perform additional tasks, such as installing applications, configuring system files, or installing prerequisites for your application.

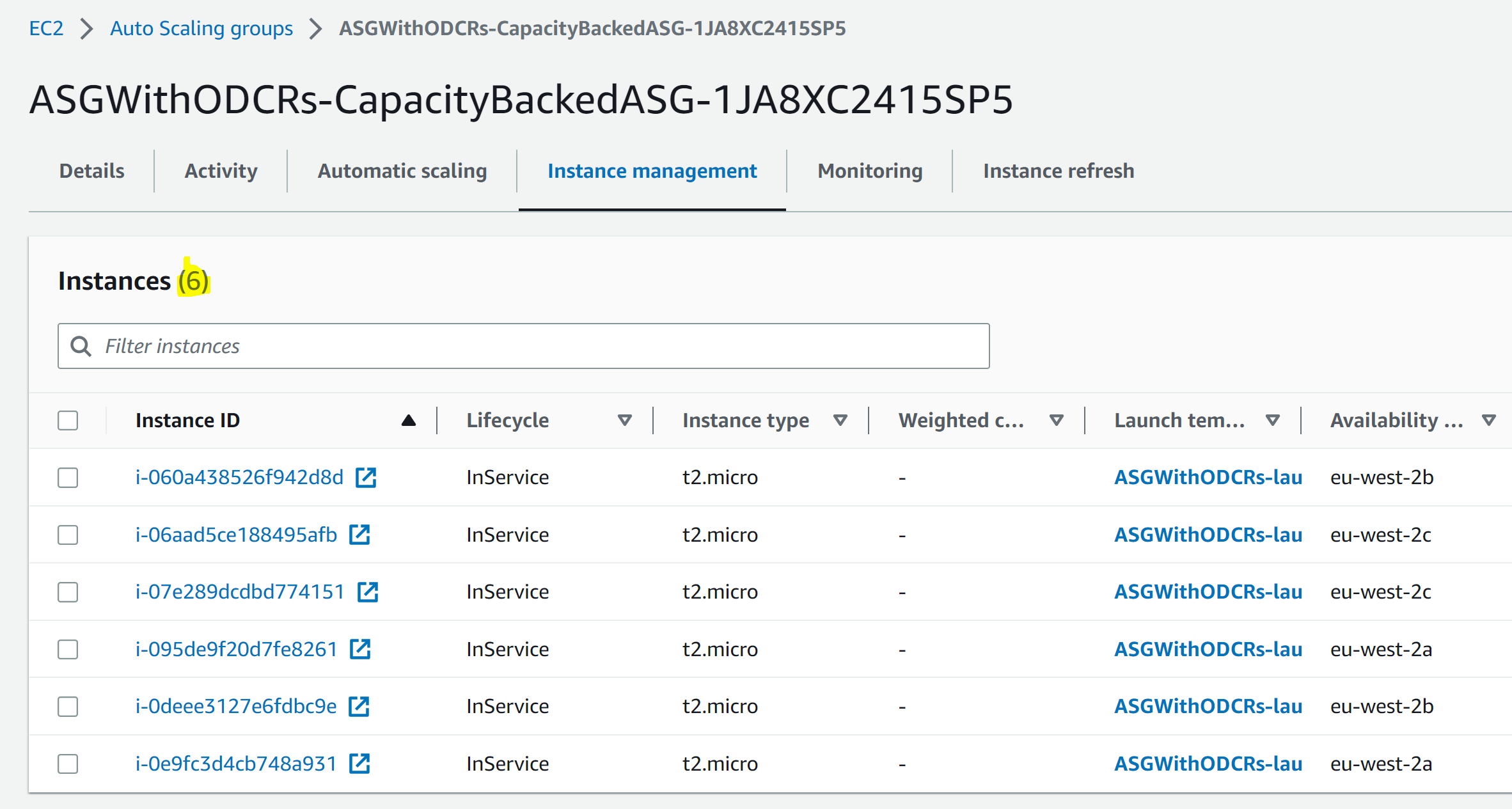

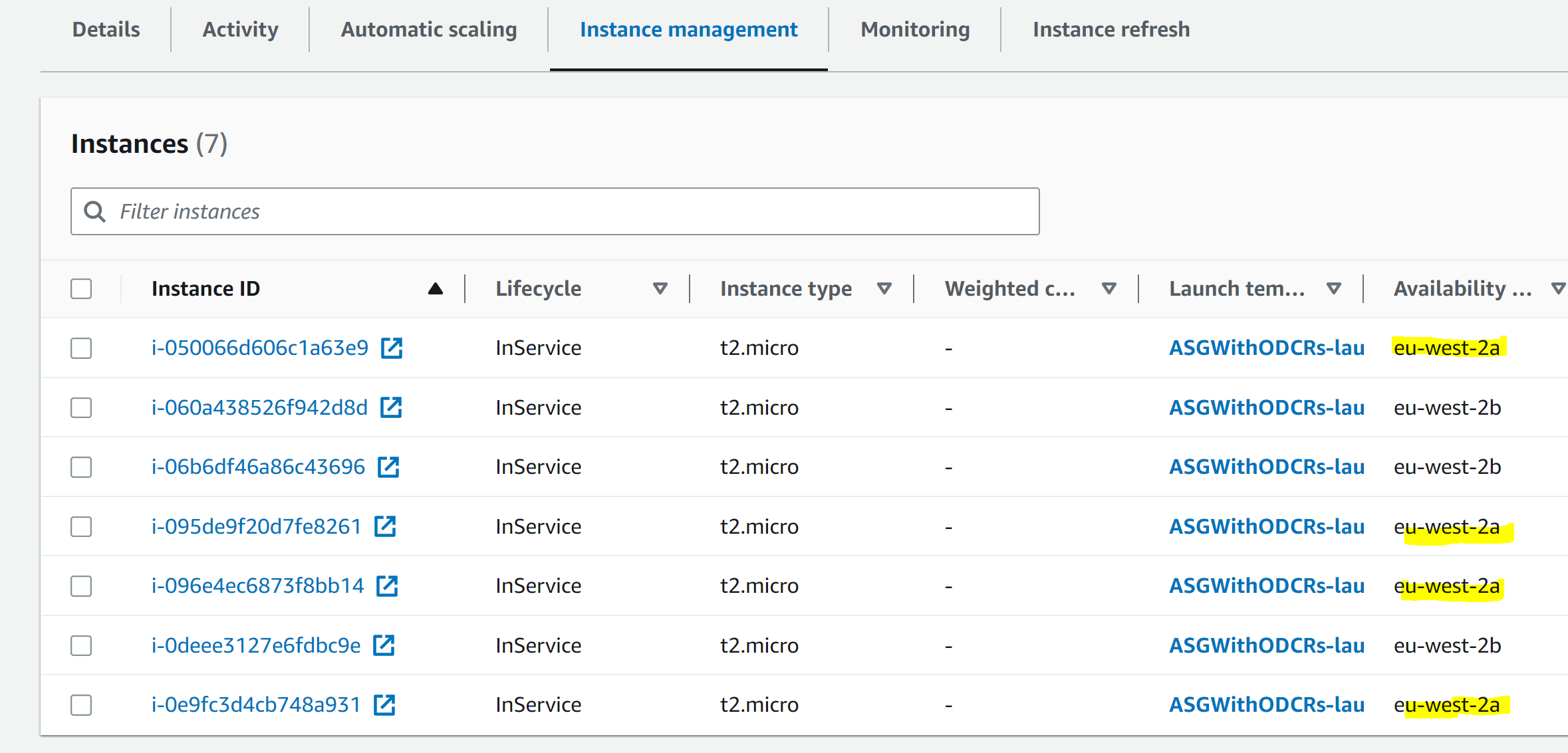

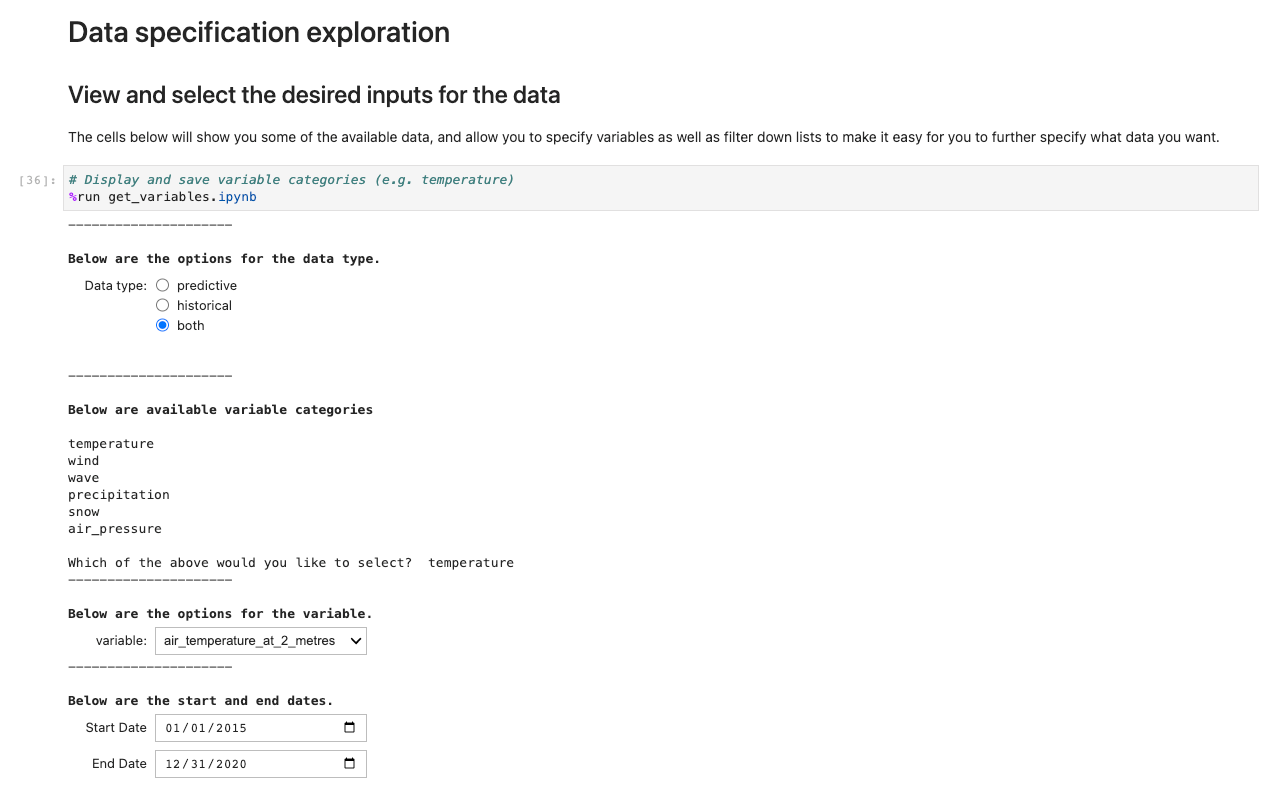

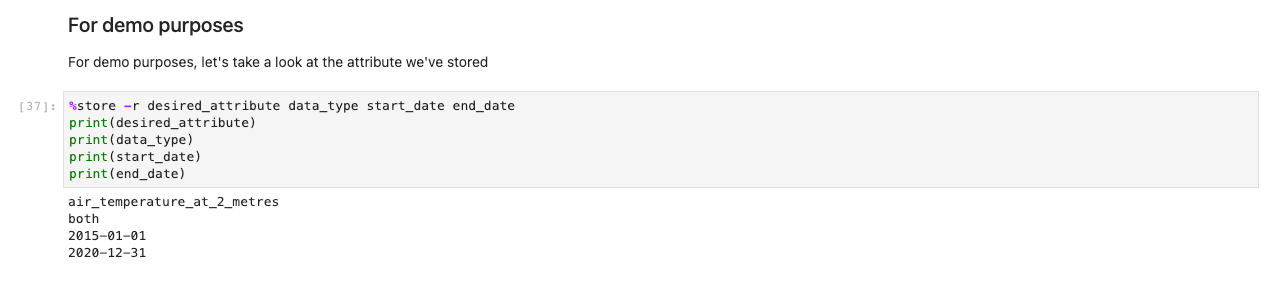

Where do I add a launch script?

If you’re deploying an instance with the Lightsail console, you add the launch script at deployment time, on the ‘deploy instance’ page.

The launch script must be added before the instance is deployed, because launch scripts can’t retroactively run after deployment.

Anatomy of a Windows Launch Script

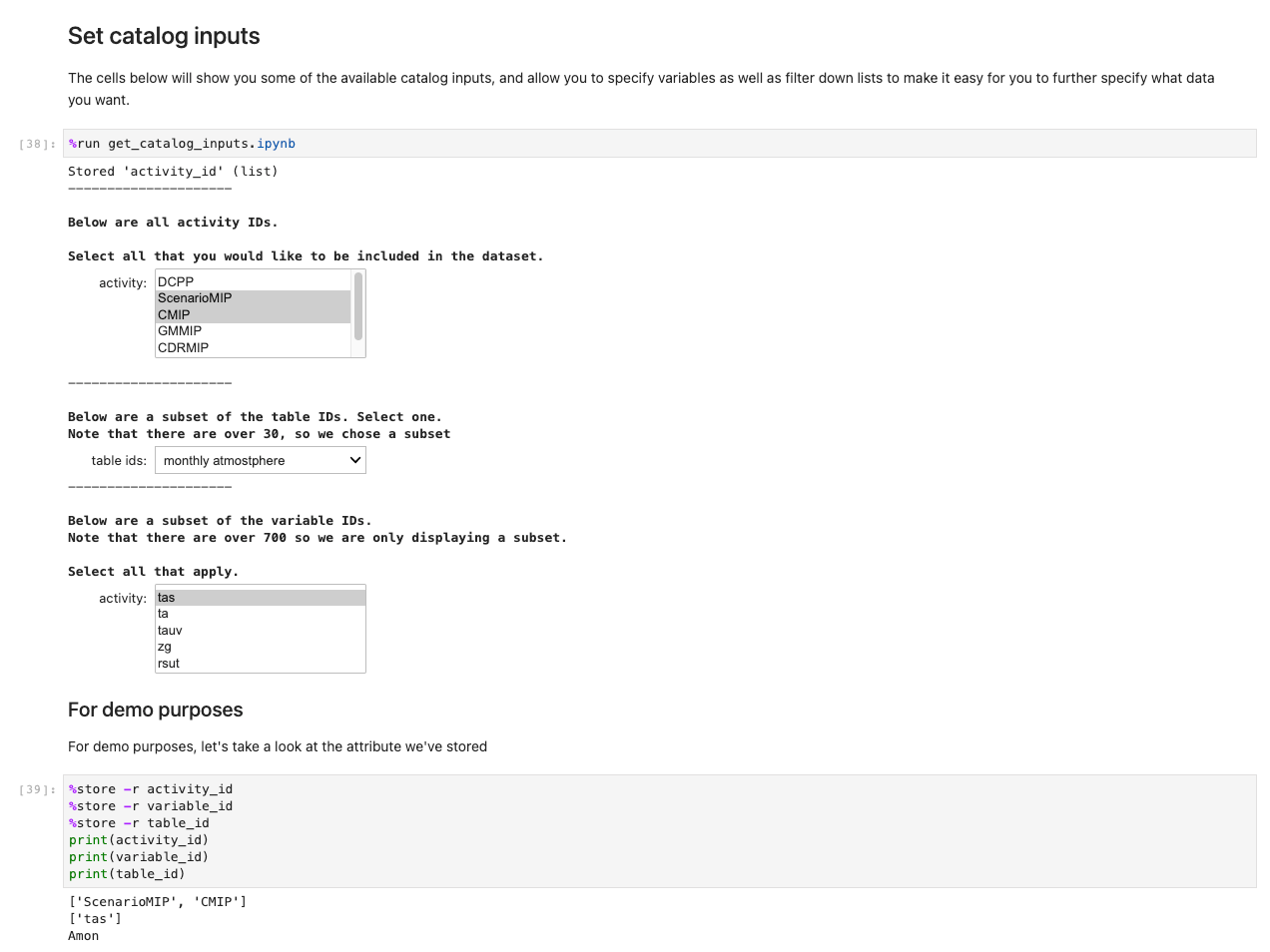

When deploying a Lightsail Windows instance, you can use a batch script or a PowerShell script in the ‘launch script’ textbox. Of the two options, PowerShell is more extensible and provides greater flexibility for configuration and control.

If you choose to write your launch script as a batch file, you must add <script> and </script> tags at the beginning and end of your code, respectively. Alternatively, a launch script written in PowerShell must use the <powershell></powershell> tags in the same way.

After the closing </script> or </powershell> tag, you must add a <persist></persist> tag on the following line. The persist tag is used to determine if this is a run-once command or if it should run every time your instance is rebooted or changed from the ‘Stop’ to ‘Start’ state. If you want your script to run every time the instance is rebooted or started, then you must set the persist tag to ‘true’. If you want your launch script to just run once, then you would set your persist tag to ‘false’.
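As a rough sketch of the tag layout described above, the following shell snippet saves a small batch-style launch script to a local file so that you can review it and paste it into the ‘launch script’ textbox. The file name `windows_launch_script.txt`, the log path, and the `echo` command are illustrative assumptions rather than part of the original walkthrough, and the heredoc is only a convenient way to write the text.

```shell
#!/bin/bash
# Hypothetical local file name for the saved launch script text.
OUT_FILE="windows_launch_script.txt"

# The quoted 'EOF' delimiter makes the shell write the text exactly as typed.
cat > "$OUT_FILE" <<'EOF'
<script>
echo Launch script ran on %DATE% at %TIME% >> C:\Users\Administrator\Desktop\launch-log.txt
</script>
<persist>true</persist>
EOF

# Print the saved text for review.
cat "$OUT_FILE"
```

Because the persist tag is set to ‘true’ here, the batch command would run on every reboot or start; with ‘false’ it would run only once.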

Anatomy of a Linux Launch Script

Like a Windows launch script, a Linux launch script requires specific code on the first line of the textbox to execute successfully during deployment. You must place ‘#!/bin/bash’ as the first line to set the shell that executes the rest of the script. After the first line, you can add the commands that achieve the results you want.
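A minimal sketch of that layout might look like the following. The marker file path `/tmp/launch-script-ran.txt` is a hypothetical choice for illustration; any command you want to run at first boot could take its place.

```shell
#!/bin/bash
# Hypothetical marker file; /tmp keeps the sketch harmless on any Linux system.
MARKER_FILE="/tmp/launch-script-ran.txt"

# Record when the script ran so there is something easy to check after first login.
echo "Launch script ran at $(date)" > "$MARKER_FILE"
```

After the instance starts, the presence of the marker file is a quick sign that the script executed.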

How do I know if my Launch Script ran successfully?

Although launch scripts are a convenient way to create a baseline instance, your instance might not reach the desired end state because of an error in your script or a permissions issue. To determine whether the launch script ran successfully, and to troubleshoot it if it didn’t, refer to the instance logs.

For Windows, the launch log can be found in: C:\ProgramData\Amazon\EC2-Windows\launch\Log\UserdataExecution.log. Note that ProgramData is a hidden folder. Unless you access the file from PowerShell or Command Prompt, you must enable hidden items in Windows File Explorer (`View > Show > Hidden items`) to see it.

For Linux, the launch log can be found in: /var/log/cloud-init-output.log and can be monitored after your instance launches by tailing the log by typing the following in the terminal:

tail -f /var/log/cloud-init-output.log

If you want to see the entire log file, including commands that ran before you opened it, then you can type the following in the terminal:

less +F /var/log/cloud-init-output.log

On a Windows instance, an easy way to monitor the UserdataExecution.log is to add the following code to your launch script, which creates a script on the desktop to tail or watch the log as commands are executing:

<powershell>
# Create a log-monitoring script to monitor the progress of the launch script execution
$monitorlogs = @"
get-content C:\ProgramData\Amazon\EC2-Windows\launch\Log\UserdataExecution.log -wait
"@
# Save the log-monitoring script to the desktop for the user
$monitorlogs | out-file -FilePath C:\Users\Administrator\Desktop\MonitorLogs.ps1 -Encoding utf8 -Force
</powershell>
<persist>false</persist>

If the script was executed, then the last line of the log should say ‘{Timestamp}: User data script completed’.

However, if you want more detail, you can build the logging into your launch script. For example, you can append the output of each command to a text or log file so that you can read it in an easy-to-access location:

<powershell>
# Set the location for the log file. In this case,
# it will appear on the desktop of your Lightsail instance
$loc = "c:\Users\Administrator\Desktop\mylog.txt"
# Write text to the log file
Write-Output "Starting Script" >> $loc
# Download and install Chocolatey to do unattended installations of the rest of the apps.
iex ((New-Object System.Net.WebClient).DownloadString('https://chocolatey.org/install.ps1'))
# You could run commands like this to output the progress to the log file:
# Install vscode and all dependencies
choco install -y vscode --force --force-dependencies --verbose >> $loc
# Install git and all dependencies
choco install -y git --force --force-dependencies --verbose >> $loc
# Completed
Write-Output "Completed" >> $loc
</powershell>
<persist>false</persist>

This code creates a log file, outputs data, and appends it along the way. If there is an issue, then you can see where the logs stopped or errors appeared.

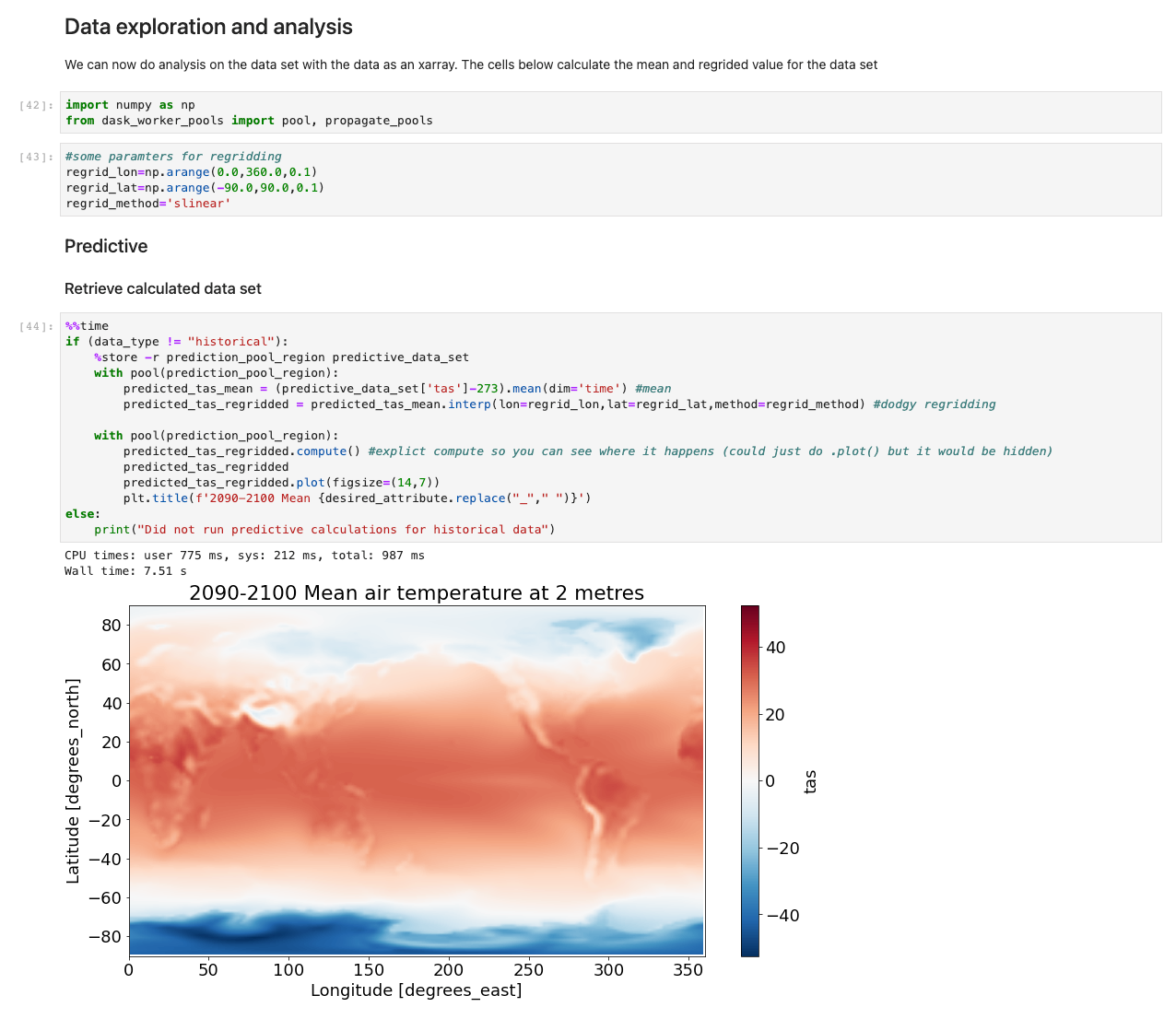

For Ubuntu and Amazon Linux 2

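If the cloud-init-output.log isn’t comprehensive enough, then you can redirect the output from your commands to a log file of your choice. In this example, we create a log file in the /tmp/ directory and push all output from our commands to this file. The example uses apt, so it applies to Ubuntu as written; on Amazon Linux 2, replace the apt commands with their yum equivalents.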

#!/bin/bash
# Create the log file
touch /tmp/launchscript.log
# Add text to the log file if you so choose
echo 'Starting' >> /tmp/launchscript.log
# Update package index (2>&1 also sends error messages to the log)
sudo apt update >> /tmp/launchscript.log 2>&1
# Install software to manage independent software vendor sources
sudo apt -y install software-properties-common >> /tmp/launchscript.log 2>&1
# Add the repository for all PHP versions
sudo add-apt-repository -y ppa:ondrej/php >> /tmp/launchscript.log 2>&1
# Install Web server, mySQL client, PHP (and packages), unzip, and curl
sudo apt -y install apache2 mysql-client-core-8.0 php8.0 libapache2-mod-php8.0 php8.0-common php8.0-imap php8.0-mbstring php8.0-xmlrpc php8.0-soap php8.0-gd php8.0-xml php8.0-intl php8.0-mysql php8.0-cli php8.0-bcmath php8.0-ldap php8.0-zip php8.0-curl unzip curl >> /tmp/launchscript.log 2>&1
# Any final text you want to include
echo 'Completed' >> /tmp/launchscript.log

It’s possible to check the logs before the launch script has finished executing. One way to follow along is to ‘tail’ the log file, which streams updates as they occur. You can monitor the log using:

tail -f /tmp/launchscript.log

Using Launch Scripts from AWS Command Line Interface (AWS CLI)

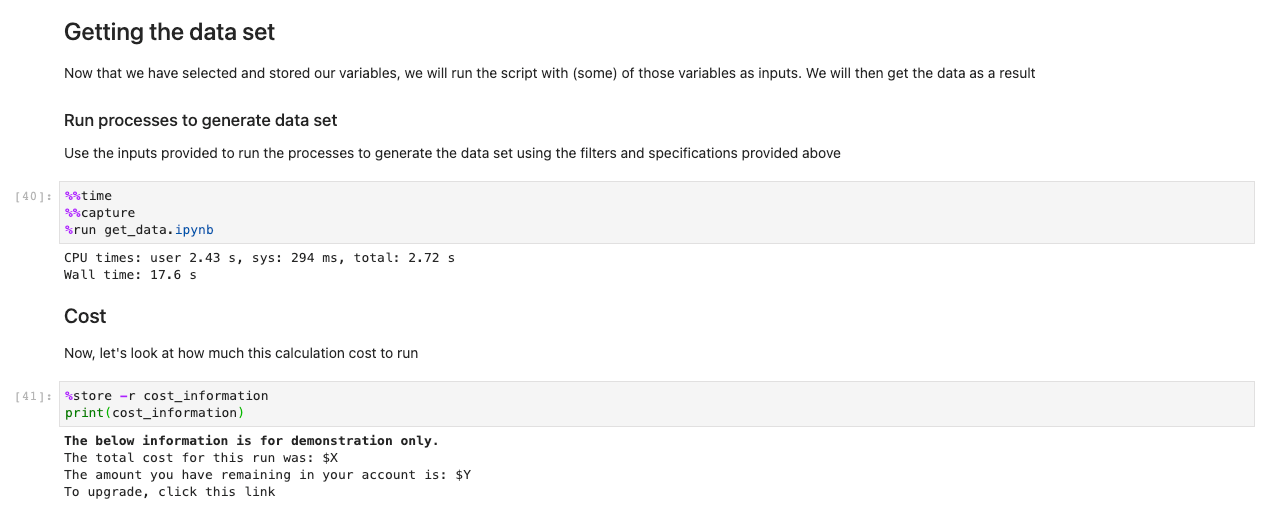

You can also deploy Lightsail instances from the AWS Command Line Interface (AWS CLI) instead of the Lightsail console. You can pass a launch script to the AWS CLI command as a parameter, either by creating a variable that holds the script and referencing the variable, or by saving the launch script as a file and referencing its location on your computer.
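The variable approach could be sketched as follows for a Linux instance. The instance name, the blueprint ID `ubuntu_22_04`, and the bundle ID `nano_3_0` are assumptions for illustration (`aws lightsail get-blueprints` and `aws lightsail get-bundles` list the IDs currently available), and the `run` helper and `RUN` variable are hypothetical additions that print the command instead of executing it unless you opt in.

```shell
#!/bin/bash
# Hold the launch script in a shell variable; the quoted 'EOF' prevents local expansion.
LAUNCH_SCRIPT=$(cat <<'EOF'
#!/bin/bash
echo 'Starting' >> /tmp/launchscript.log
EOF
)

# Hypothetical helper: prints the command by default, executes it only when RUN=1.
run() {
  if [ "${RUN:-0}" = "1" ]; then
    "$@"
  else
    printf '%s\n' "$*"
  fi
}

# Quoting "$LAUNCH_SCRIPT" keeps the multi-line script as a single argument.
run aws lightsail create-instances \
  --instance-names "my-linux-instance-1" \
  --availability-zone us-west-2a \
  --blueprint-id ubuntu_22_04 \
  --bundle-id nano_3_0 \
  --region us-west-2 \
  --user-data "$LAUNCH_SCRIPT"
```

Running the snippet as is only prints the assembled command; running it with `RUN=1` set in the environment would create the instance, assuming the AWS CLI is installed and configured.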

The launch script is still written the same way as in the previous examples. For a Windows instance with a PowerShell launch script, you can save the script to a file and then deploy the Lightsail instance with the following code:

# PowerShell script saved in the Downloads folder:
$loc = "c:\Users\Administrator\Desktop\mylog.txt"
# Write text to the log file
Write-Output "Starting Script" >> $loc
# Download and install Chocolatey to do unattended installations of the rest of the apps.
iex ((New-Object System.Net.WebClient).DownloadString('https://chocolatey.org/install.ps1'))
# You could run commands like this to output the progress to the log file:
# Install vscode and all dependencies
choco install -y vscode --force --force-dependencies --verbose >> $loc
# Install git and all dependencies
choco install -y git --force --force-dependencies --verbose >> $loc
# Completed
Write-Output "Completed" >> $loc

AWS CLI code to deploy a Windows Server 2019 medium instance in the us-west-2a Availability Zone:

aws lightsail create-instances \
--instance-names "my-windows-instance-1" \
--availability-zone us-west-2a \
--blueprint-id windows_server_2019 \
--bundle-id medium_win_2_0 \
--region us-west-2 \
--user-data file://~/Downloads/powershell_script.ps1

Clean up

Remember to delete resources when you are finished using them to avoid incurring future costs.
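As one possible way to do this from the AWS CLI, the sketch below deletes the instance created in the earlier example. It assumes the instance is named `my-windows-instance-1` in us-west-2; the `run` helper and `RUN` variable are hypothetical safeguards that print the command rather than execute it unless you opt in.

```shell
#!/bin/bash
# Name and Region of the instance to remove (taken from the earlier example).
INSTANCE_NAME="my-windows-instance-1"
REGION="us-west-2"

# Hypothetical helper: prints the command by default, executes it only when RUN=1.
run() {
  if [ "${RUN:-0}" = "1" ]; then
    "$@"
  else
    printf '%s\n' "$*"
  fi
}

# Deleting an instance is permanent, so review the printed command before opting in.
run aws lightsail delete-instance \
  --instance-name "$INSTANCE_NAME" \
  --region "$REGION"
```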

Conclusion

You now have examples of how to create and troubleshoot Lightsail launch scripts through both the Lightsail console and the AWS CLI. Launch scripts can increase your productivity and reduce the time it takes to deploy and configure your applications, and the examples in this post provide the foundational building blocks for scripting automated instance configuration. For more examples of using launch scripts, check out the aws-samples GitHub repository. To learn more about Lightsail, visit the Lightsail service page.