Post Syndicated from Clarke Rodgers original https://aws.amazon.com/blogs/security/building-a-security-first-mindset-three-key-themes-from-aws-reinvent-2023/

Amazon CSO Stephen Schmidt

AWS re:Invent drew 52,000 attendees from across the globe to Las Vegas, Nevada, November 27 to December 1, 2023.

Now in its 12th year, the conference featured 5 keynotes, 17 innovation talks, and over 2,250 sessions and hands-on labs offering immersive learning and networking opportunities.

With dozens of service and feature announcements—and innumerable best practices shared by AWS executives, customers, and partners—the air of excitement was palpable. We were on site to experience all of the innovations and insights, but summarizing highlights isn’t easy. This post details three key security themes that caught our attention.

Security culture

When we think about cybersecurity, it’s natural to focus on technical security measures that help protect the business. But organizations are made up of people—not technology. The best way to protect ourselves is to foster a proactive, resilient culture of cybersecurity that supports effective risk mitigation, incident detection and response, and continuous collaboration.

In Sustainable security culture: Empower builders for success, AWS Global Services Security Vice President Hart Rossman and AWS Global Services Security Organizational Excellence Leader Sarah Currey presented practical strategies for building a sustainable security culture.

Rossman noted that many customers who meet with AWS about security challenges are attempting to manage security as a project, a program, or a side workstream. To strengthen your security posture, he said, you have to embed security into your business.

| “You’ve got to understand early on that security can’t be effective if you’re running it like a project or a program. You really have to run it as an operational imperative—a core function of the business. That’s when magic can happen.” — Hart Rossman, Global Services Security Vice President at AWS |

Three best practices can help:

- Be consistently persistent. Routinely and emphatically thank employees for raising security issues. It might feel repetitive, but treating security events and escalations as learning opportunities helps create a positive culture—and it’s a practice that can spread to other teams. An empathetic leadership approach encourages your employees to see security as everyone’s responsibility, share their experiences, and feel like collaborators.

- Brief the board. Engage executive leadership in regular, business-focused meetings. By providing operational metrics that tie your security culture to the impact that it has on customers, crisply connecting data to business outcomes, and providing an opportunity to ask questions, you can help build the support of executive leadership, and advance your efforts to establish a sustainable proactive security posture.

- Have a mental model for creating a good security culture. Rossman presented a diagram (Figure 1) that highlights three elements of security culture he has observed at AWS: a student, a steward, and a builder. If you want to be a good steward of security culture, you should be a student who is constantly learning, experimenting, and passing along best practices. As your stewardship grows, you can become a builder, and progress the culture in new directions.

Figure 1: Sample mental model for building security culture

Thoughtful investment in the principles of inclusivity, empathy, and psychological safety can help your team members to confidently speak up, take risks, and express ideas or concerns. This supports an escalation-friendly culture that can reduce employee burnout, and empower your teams to champion security at scale.

In Shipping securely: How strong security can be your strategic advantage, AWS Enterprise Strategy Director Clarke Rodgers reiterated the importance of security culture to building a security-first mindset.

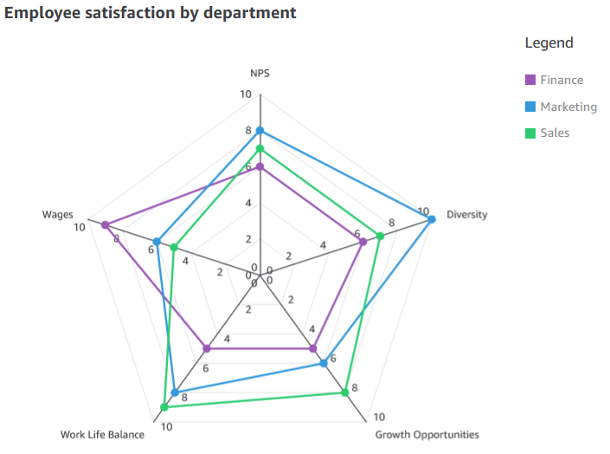

Rodgers highlighted three pillars of progression (Figure 2)—aware, bolted-on, and embedded—that are based on meetings with more than 800 customers. As organizations mature from a reactive security posture to a proactive, security-first approach, he noted, security culture becomes a true business enabler.

| “When organizations have a strong security culture and everyone sees security as their responsibility, they can move faster and achieve quicker and more secure product and service releases.” — Clarke Rodgers, Director of Enterprise Strategy at AWS |

Figure 2: Shipping with a security-first mindset

Human-centric AI

CISOs and security stakeholders are increasingly pivoting to a human-centric focus to establish effective cybersecurity, and ease the burden on employees.

According to Gartner, by 2027, 50% of large enterprise CISOs will have adopted human-centric security design practices to minimize cybersecurity-induced friction and maximize control adoption.

As Amazon CSO Stephen Schmidt noted in Move fast, stay secure: Strategies for the future of security, focusing on technology first is fundamentally wrong. Security is a people challenge for threat actors, and for defenders. To keep up with evolving changes and securely support the businesses we serve, we need to focus on dynamic problems that software can’t solve.

Maintaining that focus means providing security and development teams with the tools they need to automate and scale some of their work.

| “People are our most constrained and most valuable resource. They have an impact on every layer of security. It’s important that we provide the tools and the processes to help our people be as effective as possible.” — Stephen Schmidt, CSO at Amazon |

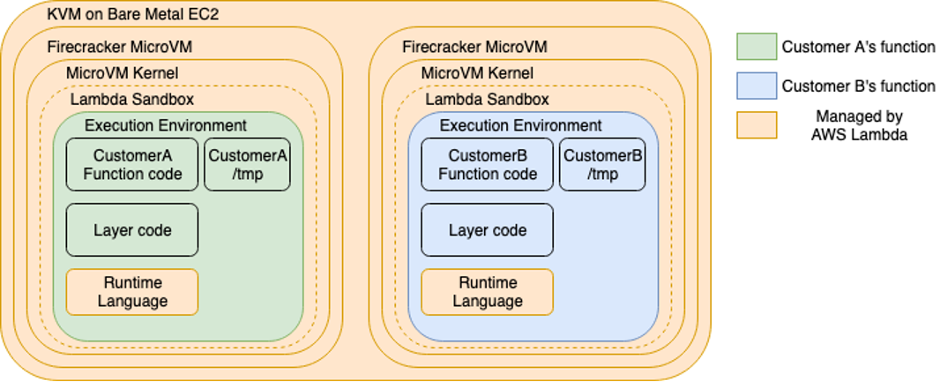

Organizations can use artificial intelligence (AI) to impact all layers of security—but AI doesn’t replace skilled engineers. When used in coordination with other tools, and with appropriate human review, it can help make your security controls more effective.

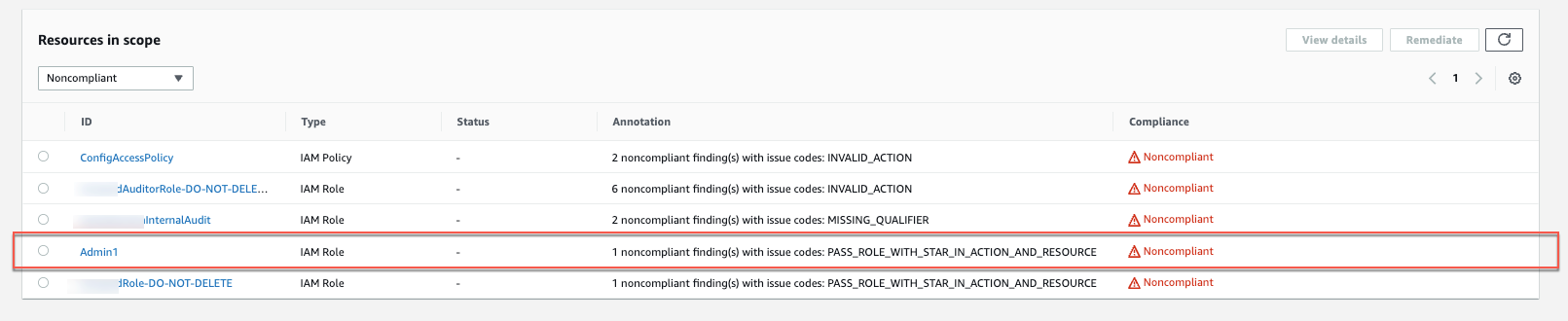

Schmidt highlighted the internal use of AI at Amazon to accelerate our software development process, as well as new generative AI-powered Amazon Inspector, Amazon Detective, AWS Config, and Amazon CodeWhisperer features that complement the human skillset by helping people make better security decisions, using a broader collection of knowledge. This pattern of combining sophisticated tooling with skilled engineers is highly effective, because it positions people to make the nuanced decisions required for effective security that AI can’t make on its own.

In How security teams can strengthen security using generative AI, AWS Senior Security Specialist Solutions Architects Anna McAbee and Marshall Jones, and Principal Consultant Fritz Kunstler featured a virtual security assistant (chatbot) that can address common security questions and use cases based on your internal knowledge bases, and trusted public sources.

Figure 3: Generative AI-powered chatbot architecture

The generative AI-powered solution depicted in Figure 3—which includes Retrieval Augmented Generation (RAG) with Amazon Kendra, Amazon Security Lake, and Amazon Bedrock—can help you automate mundane tasks, expedite security decisions, and increase your focus on novel security problems.

It’s available on Github with ready-to-use code, so you can start experimenting with a variety of large and multimodal language models, settings, and prompts in your own AWS account.

Secure collaboration

Collaboration is key to cybersecurity success, but evolving threats, flexible work models, and a growing patchwork of data protection and privacy regulations have made maintaining secure and compliant messaging a challenge.

An estimated 3.09 billion mobile phone users access messaging apps to communicate, and this figure is projected to grow to 3.51 billion users in 2025.

The use of consumer messaging apps for business-related communications makes it more difficult for organizations to verify that data is being adequately protected and retained. This can lead to increased risk, particularly in industries with unique recordkeeping requirements.

In How the U.S. Army uses AWS Wickr to deliver lifesaving telemedicine, Matt Quinn, Senior Director at The U.S. Army Telemedicine & Advanced Technology Research Center (TATRC), Laura Baker, Senior Manager at Deloitte, and Arvind Muthukrishnan, AWS Wickr Head of Product highlighted how The TATRC National Emergency Tele-Critical Care Network (NETCCN) was integrated with AWS Wickr—a HIPAA-eligible secure messaging and collaboration service—and AWS Private 5G, a managed service for deploying and scaling private cellular networks.

During the session, Quinn, Baker, and Muthukrishnan described how TATRC achieved a low-resource, cloud-enabled, virtual health solution that facilitates secure collaboration between onsite and remote medical teams for real-time patient care in austere environments. Using Wickr, medics on the ground were able to treat injuries that exceeded their previous training (Figure 4) with the help of end-to-end encrypted video calls, messaging, and file sharing with medical professionals, and securely retain communications in accordance with organizational requirements.

| “Incorporating Wickr into Military Emergency Tele-Critical Care Platform (METTC-P) not only provides the security and privacy of end-to-end encrypted communications, it gives combat medics and other frontline caregivers the ability to gain instant insight from medical experts around the world—capabilities that will be needed to address the simultaneous challenges of prolonged care, and the care of large numbers of casualties on the multi-domain operations (MDO) battlefield.” — Matt Quinn, Senior Director at TATRC |

Figure 4: Telemedicine workflows using AWS Wickr

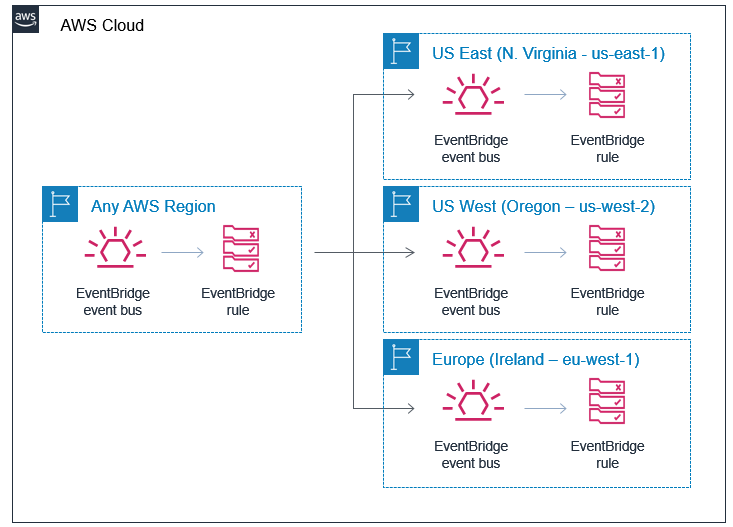

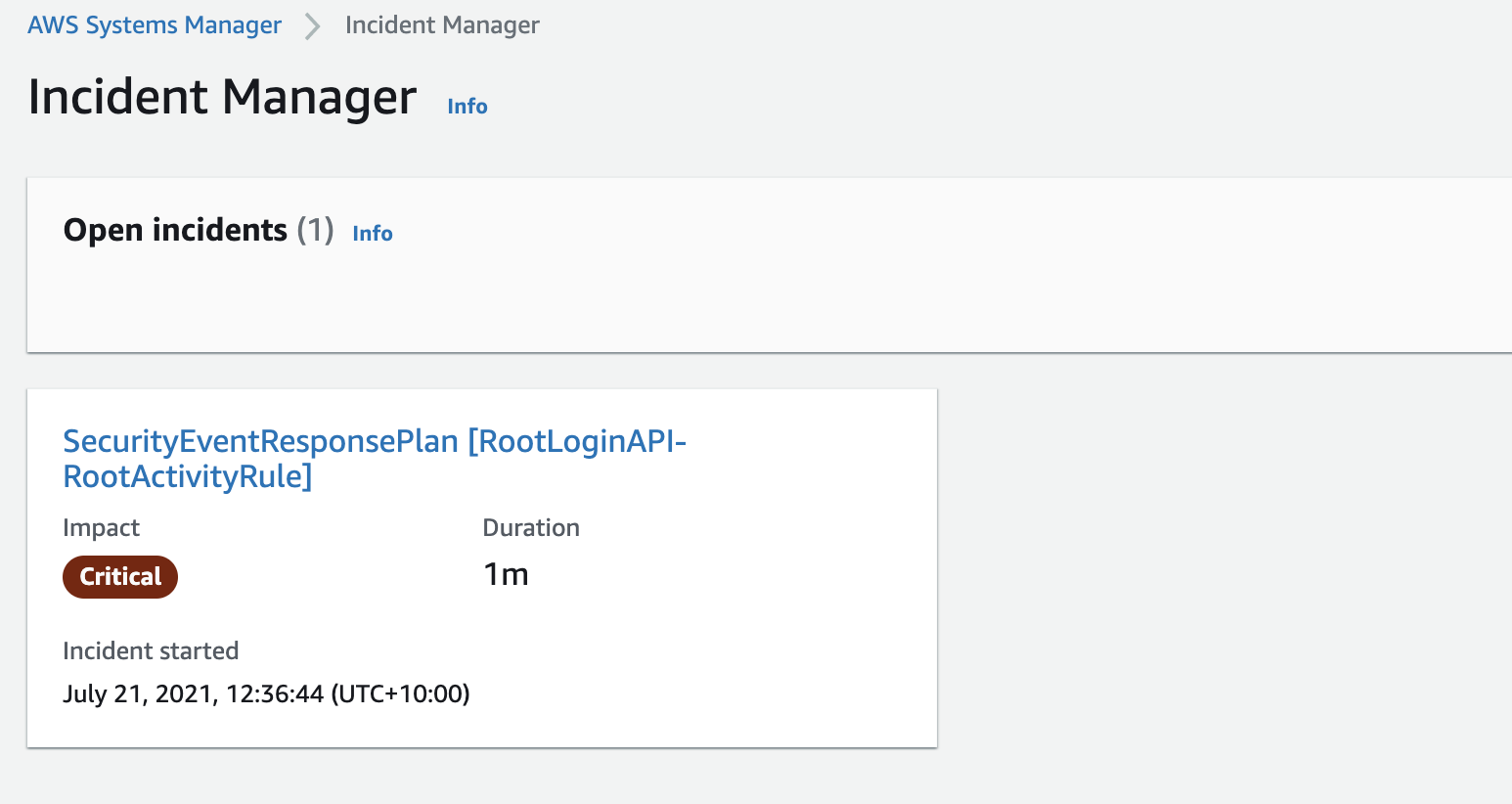

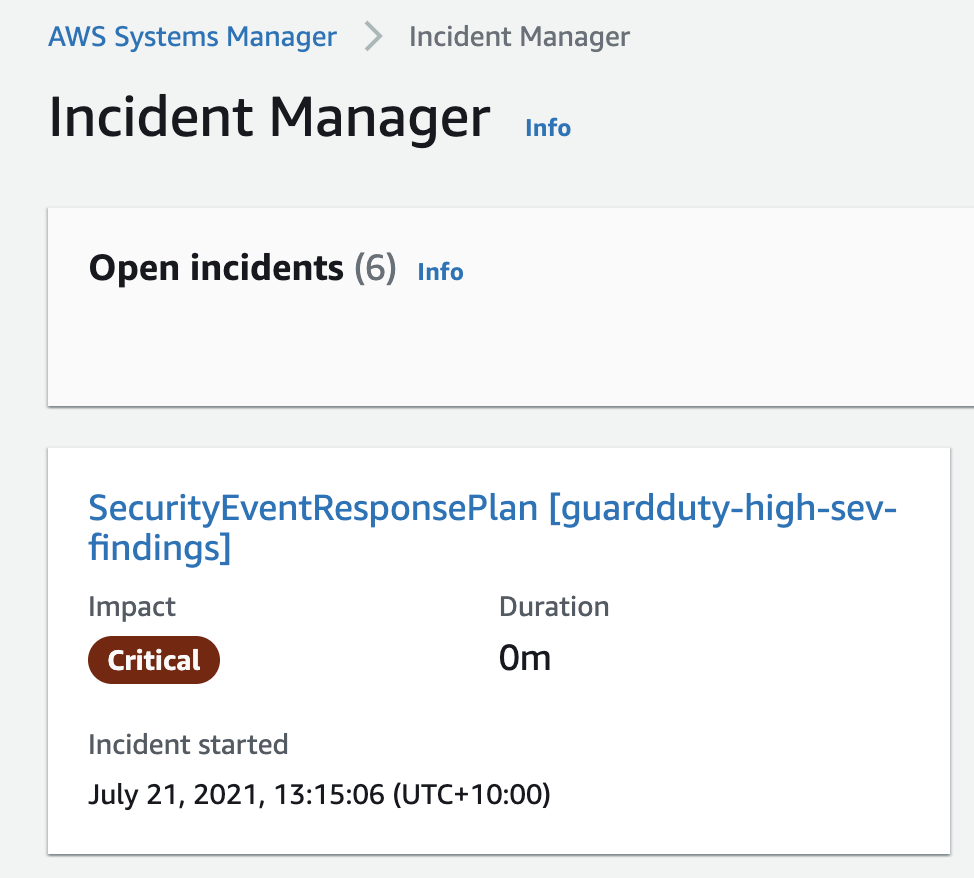

In a separate Chalk Talk titled Bolstering Incident Response with AWS Wickr and Amazon EventBridge, Senior AWS Wickr Solutions Architects Wes Wood and Charles Chowdhury-Hanscombe demonstrated how to integrate Wickr with Amazon EventBridge and Amazon GuardDuty to strengthen incident response capabilities with an integrated workflow (Figure 5) that connects your AWS resources to Wickr bots. Using this approach, you can quickly alert appropriate stakeholders to critical findings through a secure communication channel, even on a potentially compromised network.

Figure 5: AWS Wickr integration for incident response communications

Security is our top priority

AWS re:Invent featured many more highlights on a variety of topics, including adaptive access control with Zero Trust, AWS cyber insurance partners, Amazon CTO Dr. Werner Vogels’ popular keynote, and the security partnerships showcased on the Expo floor. It was a whirlwind experience, but one thing is clear: AWS is working hard to help you build a security-first mindset, so that you can meaningfully improve both technical and business outcomes.

To watch on-demand conference sessions, visit the AWS re:Invent Security, Identity, and Compliance playlist on YouTube.

If you have feedback about this post, submit comments in the Comments section below.

Want more AWS Security news? Follow us on Twitter.