Post Syndicated from Adriaan de Jonge original https://aws.amazon.com/blogs/architecture/field-notes-data-driven-risk-analysis-with-amazon-neptune-and-amazon-elasticsearch-service/

This blog post is co-authored with Charles Crouspeyre and Angad Srivastava. Charles is Director at Accenture Applied Intelligence and ASEAN AI SME (Subject Matter Expert) and Angad is Data and Analytics Consultant at AWS and NLP (Natural Language Processing) expert. Together, they are the lead architects of the solution presented in this blog.

In this blog, you learn how Amazon Neptune as a graph database, combined with Amazon Elasticsearch Service (Amazon ES) for full-text indexing, helps you shorten risk analysis processes from weeks to minutes. We walk through the steps involved in creating this knowledge management solution, which includes natural language processing components.

The business problem

Our energy customer needs to do a risk assessment before acquiring raw materials that will be processed in their equipment. The process includes assessing the inventory of raw materials and the capacity of storage units, analyzing the performance of the processing units, and assuring the quality of the end product. The cycle time for a comprehensive risk analysis across different teams working in silos is more than 2 weeks, while the window of opportunity for purchasing is a couple of days. So, the customer either puts their equipment and personnel at risk or misses good buying opportunities.

The solution described in this blog helps our customer improve and speed up their decision making. This is done through an automated analysis and understanding of the documents and information they have gathered over the years. They use Natural Language Processing (NLP) to analyze and better understand the documents, as discussed later in this blog.

Our customer has accumulated years of documents that were mostly kept in silos across the organization: emails, SharePoint, local computers, private notes, and more.

The data is so heterogeneous and widespread that it had become hard for our customer to retrieve the right information in a timely manner. Our objective was to create a platform centralizing all this information, and to facilitate present and future information retrieval. Making informed decisions on time helps our customer to purchase raw materials at a better price, increasing their margins significantly.

Overview of business solution

To understand the tasks involved, let’s look at the high-level platform workflow:

Figure 1: This illustration visualizes a 4-step process consisting of Hydrate, Analyze, Search and Feedback.

We can summarize our workflow as a 4-step process:

- Hydrate: where we extract the information from multiple sources and do a first level of processing such as document scanning and natural language processing (NLP).

- Analyze: where the information extracted from the hydration step is ingested and merged with existing information.

- Search: where information is retrieved from the system based on user queries, by leveraging our knowledge graph and the concept map representation that we have created.

- Feedback: where users can rate the results from the system as good or bad. The feedback is collected and used to update the knowledge graph, to re-train our models, or to improve our query matching layer.

High-level technical architecture

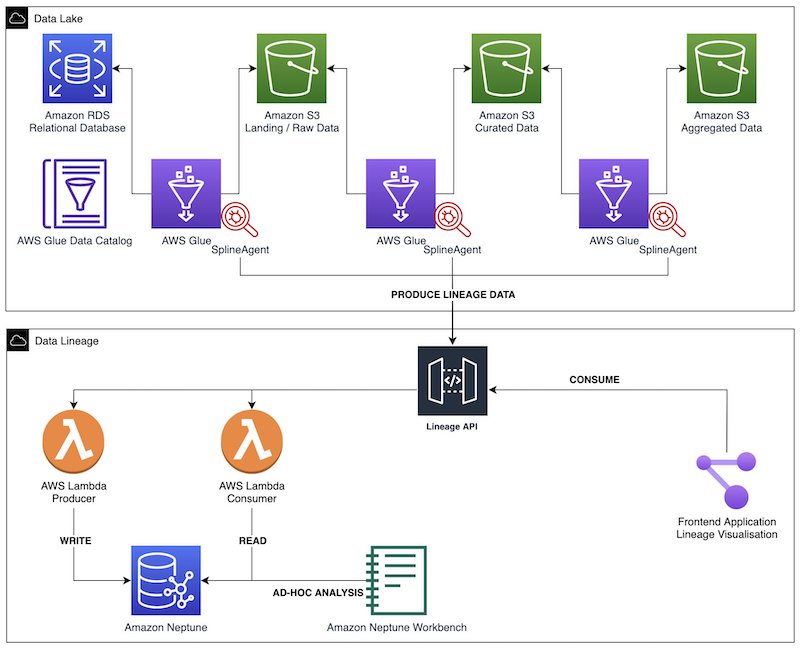

The following architecture consists of a traditional data layer, combined with a knowledge layer. The compute part of the solution is serverless. The database storage part requires long-running solutions.

Figure 2: A diagram visualizing the steps involved in data processing across two layers, the data layer and the knowledge layer and their implementations with AWS services.

The data layer of our application is similar to many common data analytics setups, and includes:

- An ingestion and normalization component, implemented with AWS Lambda, fronted by Amazon API Gateway and AWS Transfer Family

- An ETL component, implemented with AWS Glue and AWS Lambda

- A data enhancement component, implemented with Lambda

- An analytics component, implemented with Amazon Redshift

- A knowledge query component, implemented with Lambda

- A user interface, a custom implementation based on React

Our solution really adds value in the knowledge layer, which is what we focus on in this blog. We created this layer specifically for our knowledge representation and management. It consists of the following:

- The knowledge extraction block, where the raw text is extracted, analyzed and classified into facts and structured data. This is implemented using Amazon SageMaker and Amazon Comprehend.

- The knowledge repository, where the raw data is saved and kept. This is implemented with Amazon Simple Storage Service (Amazon S3).

- The relationship extraction, knowledge extraction, and indexing block, where the facts extracted earlier are analyzed and added to our knowledge graph. This is implemented with a combination of Neptune, Amazon S3, Amazon DocumentDB (with MongoDB compatibility) and Amazon ES. Neptune is used as a property graph, queried with the Gremlin graph traversal language.

- The knowledge aggregator, where we leverage both our knowledge graph and business representation to extract facts to associate with the user query, and rank information based on their relevance. This is implemented leveraging Amazon ES.

The last component, the knowledge aggregator, is fundamental to our infrastructure. In general, when we talk about an information retrieval system, that is, a system designed to put the right information in the hands of users at the right time, there are two common approaches:

- Keyword-based search: take the user query and search for the presence of certain keywords from the query in the available documents.

- Concept-based search: build a business-related taxonomy to extend the keyword-based search into a business-related concept-based search.

The downside of a keyword-based search is that it does not capture the complexity and specificity of the business domain in which the query occurs. Due to this limitation, we chose to go with a concept-based search approach as it allows us to inject a layer of business understanding to our ingestion and information retrieval.
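To make the difference between the two approaches concrete, here is a minimal Python sketch. It is not the solution's actual implementation: the taxonomy, the documents, and the function names are all hypothetical, and plain substring matching stands in for a real full-text index such as Amazon ES.

```python
from typing import Dict, List, Set

# Hypothetical business taxonomy: each concept maps to related terms.
TAXONOMY: Dict[str, Set[str]] = {
    "risk": {"damage", "incident", "fouling", "pressure drop"},
    "champion": {"basic nitrogen"},
}

# Hypothetical document store (illustrative text only).
DOCUMENTS: Dict[str, str] = {
    "doc1": "Processing Champion caused an increased pressure drop.",
    "doc2": "Storage capacity report for unit 7.",
    "doc3": "Low basic nitrogen levels were measured in the sample.",
}


def _matching_docs(terms: Set[str], documents: Dict[str, str]) -> List[str]:
    """Return the ids of documents that contain at least one term."""
    return sorted(
        doc_id
        for doc_id, text in documents.items()
        if any(term in text.lower() for term in terms)
    )


def keyword_search(query: str, documents: Dict[str, str]) -> List[str]:
    """Keyword-based search: match only the literal query words."""
    return _matching_docs(set(query.lower().split()), documents)


def expand_query(query: str, taxonomy: Dict[str, Set[str]]) -> Set[str]:
    """Add the taxonomy terms related to each query word."""
    terms = set(query.lower().split())
    expanded = set(terms)
    for term in terms:
        expanded |= taxonomy.get(term, set())
    return expanded


def concept_search(
    query: str, documents: Dict[str, str], taxonomy: Dict[str, Set[str]]
) -> List[str]:
    """Concept-based search: match the query words plus related concepts."""
    return _matching_docs(expand_query(query, taxonomy), documents)


keyword_hits = keyword_search("champion risk", DOCUMENTS)
concept_hits = concept_search("champion risk", DOCUMENTS, TAXONOMY)
```

In this toy data set the keyword search only finds the document that literally mentions "Champion", while the concept search also finds the document about basic nitrogen because the taxonomy links the two.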

Knowledge layer deep-dive

Because the value added from our solution is in the knowledge layer, let’s dive deeper into the details of this layer.

Figure 3: An architecture diagram of the knowledge layer of the solution, classified in 3 categories: ingestion, knowledge representation and retrieval

The architecture in Figure 3 describes the technical solution architecture broken down into 3 key steps. The 3 steps are:

- Ingestion

- Knowledge representation

- Retrieval

Another way to approach the problem definition is by looking at the process flow for how raw data/information flows through the system to generate the knowledge layer. Figure 4 gives an example of how the information is broadly treated as it progresses the logical phases of the process flow.

Figure 4: An illustration of how information is extracted from an unstructured document, modeled as a graph and visualized in a business-friendly and concise format.

In this example, we can recognize a raw material of type “Champion” and detect a relationship between this entity and another entity of type “basic nitrogen”. This relationship is classified as the type “is characterized by”.

The facts in the parsed content are then classified into different categories of relevancy based on the contextual information contained in the text. For example, an important paragraph that mentions a potential issue will get classified as a key highlight with a high degree of relevancy.

This paragraph text is further analyzed to recognize and extract the entities mentioned, such as “Champion” and “basic nitrogen”, and to determine the semantic relationship between these entities based on the context of the paragraph, i.e., “characterized by” and “incompatibility due to low levels of basic nitrogen”.
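One way to picture the result of this analysis is as a list of subject, relationship, object triples that are grouped into a graph. The following Python sketch is illustrative only; the `Fact` class, its field names, and the `build_graph` helper are assumptions and do not come from the solution itself.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class Fact:
    """A single extracted fact (hypothetical structure)."""
    subject: str       # entity the fact is about, e.g. a raw material
    relationship: str  # semantic relationship detected in the text
    obj: str           # entity on the other side of the relationship
    category: str      # relevancy category assigned to the paragraph


def build_graph(facts: Iterable[Fact]) -> Dict[str, List[Tuple[str, str]]]:
    """Group facts by subject into a simple adjacency list."""
    graph: Dict[str, List[Tuple[str, str]]] = defaultdict(list)
    for fact in facts:
        graph[fact.subject].append((fact.relationship, fact.obj))
    return dict(graph)


facts = [
    Fact("Champion", "is characterized by", "basic nitrogen", "key highlight"),
]
graph = build_graph(facts)
```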

The steps of the technical architecture correspond to the phases of the information analysis process, so we present them together.

- During the Ingestion step in the technical solution architecture, the aim is to process the incoming raw data in any format, as defined in the Extract information phase of the information analysis process flow.

- Once the data ingestion has occurred, the next step is to capture the knowledge representation. The Contextualize information phase of the information analysis process flow helps ensure that comprehensive and accurate knowledge representation occurs within the system.

- The last step for the solution is to facilitate retrieval of information by providing appropriate interfaces for interacting with the knowledge representation within the system. This is facilitated by the Assemble information phase of the information analysis process flow.

To further understand the proposed solution, let us review the steps and the associated process flow phases.

Technical Architecture Step 1: Ingestion

Information comes in through the ingestion pipeline from various sources, such as websites, reports, news, blogs and internal data. Raw data enters the system either through automated API-based integrations with external websites or internal systems like Microsoft SharePoint, or can be ingested manually through AWS Transfer Family. Once a new piece of data has been ingested into the solution, it initiates the process for extracting information from the raw data.

Information Analysis Phase 1: Extract information

Once the information lands in our system, the knowledge representation process starts with our Lambda functions acting as the orchestrator between other components. Amazon SageMaker was initially used to create custom models for document categorization and classification of ingested unstructured files.

For example, an unstructured file that is ingested into our system gets recognized as a new email (one of the acceptable data sources) and is classified as “compatibility highlights” based on the email contents. With improvements in the capabilities of the Amazon Comprehend managed service, the need for custom model development, maintenance, and machine learning operations (MLOps) could be reduced. The solution now uses Amazon Comprehend with custom training for the initial step of document categorization and information extraction. Amazon Comprehend was also used to create custom named-entity recognition models that were trained to recognize custom raw materials and properties.

In this example, an unstructured PDF document is ingested into our system as illustrated in Figure 5.

Figure 5: Phase 1 – Information Extraction

Amazon Comprehend analyzes the unstructured document, classifies its contents and extracts a specific piece of information regarding a type of raw material called “Champion”. This has an inherent property called “low basic nitrogen” associated with it.

Technical Architecture Step 2: Knowledge representation

Knowledge representation is the process of extracting semantic relationships between the various information/data elements within a piece of raw data and incorporating them into the existing layers of knowledge already identified and stored. Based on the categorization of the document, the raw text is pre-processed and parsed into logical units. The parsed data is then analyzed in our NLP layer for content identification and fact classification.

The facts and key relationships deduced from the Amazon Comprehend results are returned to the Lambda functions, which in turn store the detected facts in the knowledge graph.

Information Analysis Phase 2: Contextualize information

Once the information is extracted from the document, our first step is to contextualize the information using our business representation in the form of a taxonomy. The system detects the different parts and entities that the paragraph is composed of, and structures the information into our knowledge graph as illustrated in Figure 6.

Figure 6: Context based Knowledge Graph Generation

This data extraction process is repeated iteratively, so that the knowledge graph grows over time through the detection of new facts and relationships. When we ingest new data into our knowledge graph, we search our knowledge graph for similar entities. If a similar entity exists, we analyze the type of relationships and properties both entities have. When we observe sufficient similarities between the entities, we associate relationships from one entity to the other.

For example, suppose a new entity “Crude A” is ingested, with two properties: level of basic nitrogen and level of sulfur. We also have “Champion”, as described above, which has similar levels of basic nitrogen and a property “risk” associated with it. Based on the existing knowledge graph, we can now infer that there is a high probability that “Crude A” has a similar risk associated with it, as shown in Figure 7.

Figure 7: Crude Knowledge Graph Representation

The probability calculations can take multiple factors into consideration to make the process more accurate. This makes the structure of the knowledge graph quite dynamic and allows it to evolve automatically.
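The inference described above can be sketched as follows. This is a simplified illustration, not the scoring used in the solution: the similarity measure, the threshold of 0.8, the property values, and the relationship names are all assumptions.

```python
from typing import Dict, List, Tuple


def property_similarity(a: Dict[str, float], b: Dict[str, float]) -> float:
    """Return a score in [0, 1] based on the properties both entities share."""
    shared = set(a) & set(b)
    if not shared:
        return 0.0
    scores = []
    for key in shared:
        largest = max(abs(a[key]), abs(b[key]))
        if largest == 0:
            scores.append(1.0)  # both values are zero, so they are identical
        else:
            # Relative difference, clamped so the score never drops below 0.
            scores.append(max(0.0, 1.0 - abs(a[key] - b[key]) / largest))
    return sum(scores) / len(scores)


def infer_relationships(
    new_properties: Dict[str, float],
    known_entities: Dict[str, dict],
    threshold: float = 0.8,
) -> List[Tuple[str, str, float]]:
    """Copy relationships from sufficiently similar entities, with a score."""
    inferred = []
    for entity in known_entities.values():
        score = property_similarity(new_properties, entity["properties"])
        if score >= threshold:
            for relationship, target in entity["relationships"]:
                inferred.append((relationship, target, score))
    return inferred


# Hypothetical values; units and magnitudes are illustrative only.
known_entities = {
    "Champion": {
        "properties": {"basic_nitrogen": 100.0},
        "relationships": [("has_risk", "processing damage")],
    }
}
crude_a = {"basic_nitrogen": 95.0, "sulfur": 0.4}
inferred = infer_relationships(crude_a, known_entities)
```

Here “Crude A” shares only the basic nitrogen property with “Champion”, the values are close, and so the risk relationship is proposed for “Crude A” together with the similarity score.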

The complete raw data is also stored in Amazon ES as a secondary implementation to perform free-form queries. This helps ensure that all the relevant information for any extracted fact associated with an entity in the knowledge graph is completely represented within the system. Some of this information may not exist in the knowledge graph because the document data extraction model can’t capture all the relevant information. One reason for this can be poor quality of the source document, which makes automated reading and data extraction difficult. Another reason can be the level of sophistication of the Amazon Comprehend models.

Technical Architecture Step 3: Retrieval

To retrieve information, the user query is analyzed by the Lambda function on the right side of Figure 3. Based on the analysis, key terms are identified from the user query for which a search needs to be performed. For example, if the query provided is “What is the likelihood of damage due to processing Champion in location A”, semantic analysis of the query will indicate that we are looking for relationships between entities Champion, any type of risks, any known incidents at location A and possible mitigations to reduce identified risks.

To address the query, the information then needs to be compiled from the existing knowledge graph as well as Amazon ES to provide a complete answer.

Information Analysis Phase 3: Assemble information

Figure 8 illustrates the output of the information assembly process.

Figure 8: “Champion” crude assembled information for property Nitrogen

Based on the facts available within the knowledge graph, we have identified that for “Champion” there is a possibility of damage occurring “due to increased pressure drop and loss of heat transfer” but this can be mitigated by “blending champion to meet basic nitrogen levels”.

In addition, say there was information available about “Crude B” that has been processed at “location A”. It also originated from “Brunei”, had similar “Nitrogen” levels and properties such as “Kerogen3” and “napthenic”, and had a processing incident causing damage. By looking at the information stored within the knowledge graph and Amazon ES, we can then conclude that there are other possibilities for damage to occur due to processing of “Champion” at “Location A” as well.

Once all the relevant pieces of information have been collected, a sorted list of information is sent back to the user interface to be displayed.
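A minimal sketch of this assembly step is shown below. It assumes that both the knowledge graph and the full-text index return items with a text and a score, and that graph facts are weighted higher than free-text hits. The weights, the field names, and the `assemble_results` function are hypothetical.

```python
from typing import Dict, List


def assemble_results(
    graph_facts: List[dict],
    text_hits: List[dict],
    graph_weight: float = 1.0,
    text_weight: float = 0.5,
) -> List[dict]:
    """Merge both result sets, drop duplicates, and sort by weighted score."""
    combined: Dict[str, dict] = {}
    sources = (
        ("graph", graph_facts, graph_weight),
        ("text", text_hits, text_weight),
    )
    for source, items, weight in sources:
        for item in items:
            score = item["score"] * weight
            key = item["text"]
            # Keep the highest-scoring occurrence of each piece of information.
            if key not in combined or score > combined[key]["score"]:
                combined[key] = {"text": key, "score": score, "source": source}
    return sorted(combined.values(), key=lambda r: r["score"], reverse=True)


# Illustrative inputs only.
graph_facts = [
    {"text": "Champion: risk of increased pressure drop", "score": 0.9},
]
text_hits = [
    {"text": "Incident report for location A", "score": 0.8},
    {"text": "Champion: risk of increased pressure drop", "score": 1.0},
]
results = assemble_results(graph_facts, text_hits)
```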

Fact reconciliation

In reality, it is possible that new information contradicts existing information, which causes conflicts during ingestion. There are various ways to handle such contradictions, for example:

Figure 9: Visualizations of four illustrative ways to deal with contradictory new facts.

- Assume the latest data is the most accurate, by looking at the timestamp of each data point. This makes it possible to update the list of facts in our knowledge graph

- Analyze how new facts alter the properties or relationships of existing facts and update them or create a relationship between nodes

- Calculate a reliability score for the available sources, to rank the fact based on who has provided them

- Ask for end user feedback through the user interface

In our solution, we have implemented mechanisms 1, 2, and 4. Mechanisms 1 and 2 are implemented within the contextualize information phase of the information analysis process.
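Mechanism 1 can be sketched in a few lines of Python. This is an illustration under the assumption that every fact carries a timestamp and is keyed by entity and property. The data layout and the `reconcile_latest` function are hypothetical.

```python
from datetime import datetime
from typing import Dict, Tuple

FactKey = Tuple[str, str]  # (entity, property)


def reconcile_latest(
    existing: Dict[FactKey, dict], incoming: Dict[FactKey, dict]
) -> Dict[FactKey, dict]:
    """For each key, keep the fact with the most recent timestamp."""
    merged = dict(existing)
    for key, fact in incoming.items():
        if key not in merged or fact["timestamp"] > merged[key]["timestamp"]:
            merged[key] = fact
    return merged


# Illustrative facts only.
existing_facts = {
    ("Champion", "basic_nitrogen"): {
        "value": "low", "timestamp": datetime(2019, 1, 1)},
    ("Champion", "origin"): {
        "value": "Brunei", "timestamp": datetime(2020, 1, 1)},
}
incoming_facts = {
    ("Champion", "basic_nitrogen"): {
        "value": "medium", "timestamp": datetime(2020, 6, 1)},
    ("Champion", "origin"): {
        "value": "unknown", "timestamp": datetime(2018, 1, 1)},
}
reconciled = reconcile_latest(existing_facts, incoming_facts)
```

The newer basic nitrogen fact replaces the old one, while the older incoming fact about the origin is ignored.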

Mechanism 4 is implemented in the search results user interface, where the user has a ‘thumbs up’ and ‘thumbs down’ button to classify the different search results as relevant or not. This information is then fed back into the Amazon Comprehend model and the knowledge graph, and is also captured within Amazon ES to optimize subsequent search results.

Over time, mechanism 4 can be expanded to capture more detailed feedback, including corrections to the search result, instead of a simple yes/no feedback mechanism. Such an expansion of mechanism 4 and the implementation of mechanism 3 are possible future enhancements for the proposed solution.

Conclusion

Our customer needed help to shorten their risk analysis process to make high-impact purchase decisions for raw materials. Our knowledge management solution helped them extract knowledge from their vast set of documents and make it available in knowledge graph format, for risk analysts to analyze. Knowledge graphs are a great way to handle this “domain specificity”. They help extract information during the ingestion phase. They also help contextualize queries during the retrieval phase.

The possibilities are endless. One thing is certain: we’d encourage you to use graph databases with Neptune supported by Amazon ES for your use cases with highly connected data!

Field Notes provides hands-on technical guidance from AWS Solutions Architects, consultants, and technical account managers, based on their experiences in the field solving real-world business problems for customers.

Nishchai JM is an Analytics Specialist Solutions Architect at Amazon Web services. He specializes in building Big-data applications and help customer to modernize their applications on Cloud. He thinks Data is new oil and spends most of his time in deriving insights out of the Data.

Varad Ram is Senior Solutions Architect in Amazon Web Services. He likes to help customers adopt to cloud technologies and is particularly interested in artificial intelligence. He believes deep learning will power future technology growth. In his spare time, he like to be outdoor with his daughter and son.

Narendra Gupta is a Specialist Solutions Architect at AWS, helping customers on their cloud journey with a focus on AWS analytics services. Outside of work, Narendra enjoys learning new technologies, watching movies, and visiting new places

Arun A K is a Big Data Solutions Architect with AWS. He works with customers to provide architectural guidance for running analytics solutions on the cloud. In his free time, Arun loves to enjoy quality time with his family

Moira Lennox is a Senior Data Strategy Technical Specialist for AWS with 27 years’ experience helping companies innovate and modernize their data strategies to achieve new heights and allow for strategic decision-making. She has experience working in large enterprises and technology providers, in both business and technical roles across multiple industries, including health care live sciences, financial services, communications, digital entertainment, energy, and manufacturing.

Joel Farvault is Principal Specialist SA Analytics for AWS with 25 years’ experience working on enterprise architecture, data strategy, and analytics, mainly in the financial services industry. Joel has led data transformation projects on fraud analytics, claims automation, and data governance.

Mike Havey is a Solutions Architect for AWS with over 25 years of experience building enterprise applications. Mike is the author of two books and numerous articles. His

Herain Oberoi leads Product Marketing for AWS’s Databases, Analytics, BI, and Blockchain services. His team is responsible for helping customers learn about, adopt, and successfully use AWS services. Prior to AWS, he held various product management and marketing leadership roles at Microsoft and a successful startup that was later acquired by BEA Systems. When he’s not working, he enjoys spending time with his family, gardening, and exercising.