Post Syndicated from Yuchen Lin original https://aws.amazon.com/blogs/architecture/field-notes-deploying-uipath-rpa-software-on-aws/

Running UiPath RPA software on AWS uses the elasticity of the AWS Cloud to set up, operate, and scale robotic process automation. It provides cost-efficient, resizable capacity and scales the robots to meet your business workload. This reduces the need for administration tasks such as hardware provisioning, environment setup, and backups, and frees you to focus on optimizing business processes by automating more of them.

This blog post guides you in deploying UiPath robotic process automation (RPA) software on AWS. RPA software uses the user interface to capture data and manipulate applications just like humans do. It runs as a software robot that interprets data, triggers responses, and communicates with other systems to perform a variety of repetitive tasks.

UiPath Enterprise RPA Platform covers the full automation lifecycle (discover, build, manage, run, engage, and measure) with different products. This blog post focuses on the Platform’s core products: build with UiPath Studio, manage with UiPath Orchestrator, and run with UiPath Robots.

About UiPath software

UiPath Enterprise RPA Platform’s core products are UiPath Studio, UiPath Robot, and UiPath Orchestrator.

UiPath Studio and UiPath Robot are individual products. You can deploy each on a standalone machine.

UiPath Orchestrator contains Web Servers, SQL Server, and Indexer Server (Elasticsearch). You can use a Single Machine deployment or a Multi-Node deployment, depending on your workload capacity and availability requirements.

For information on UiPath platform offerings, review UiPath platform products.

UiPath on AWS

You can deploy all UiPath products on AWS.

- UiPath Studio is needed for automation design jobs and runs on a single machine. You deploy it with Amazon EC2.

- UiPath Robots are needed for automation tasks. Each Robot runs on a single machine, and the number of Robots scales with the business workload. You deploy them with Amazon EC2 and scale them with Amazon EC2 Auto Scaling.

- UiPath Orchestrator is needed for automation administration jobs and contains three logical components that run on multiple machines. You deploy the Web Server with Amazon EC2, SQL Server with Amazon RDS, and the Indexer Server with Amazon Elasticsearch Service. For a Multi-Node deployment, you also deploy the High Availability Add-on with Amazon EC2.

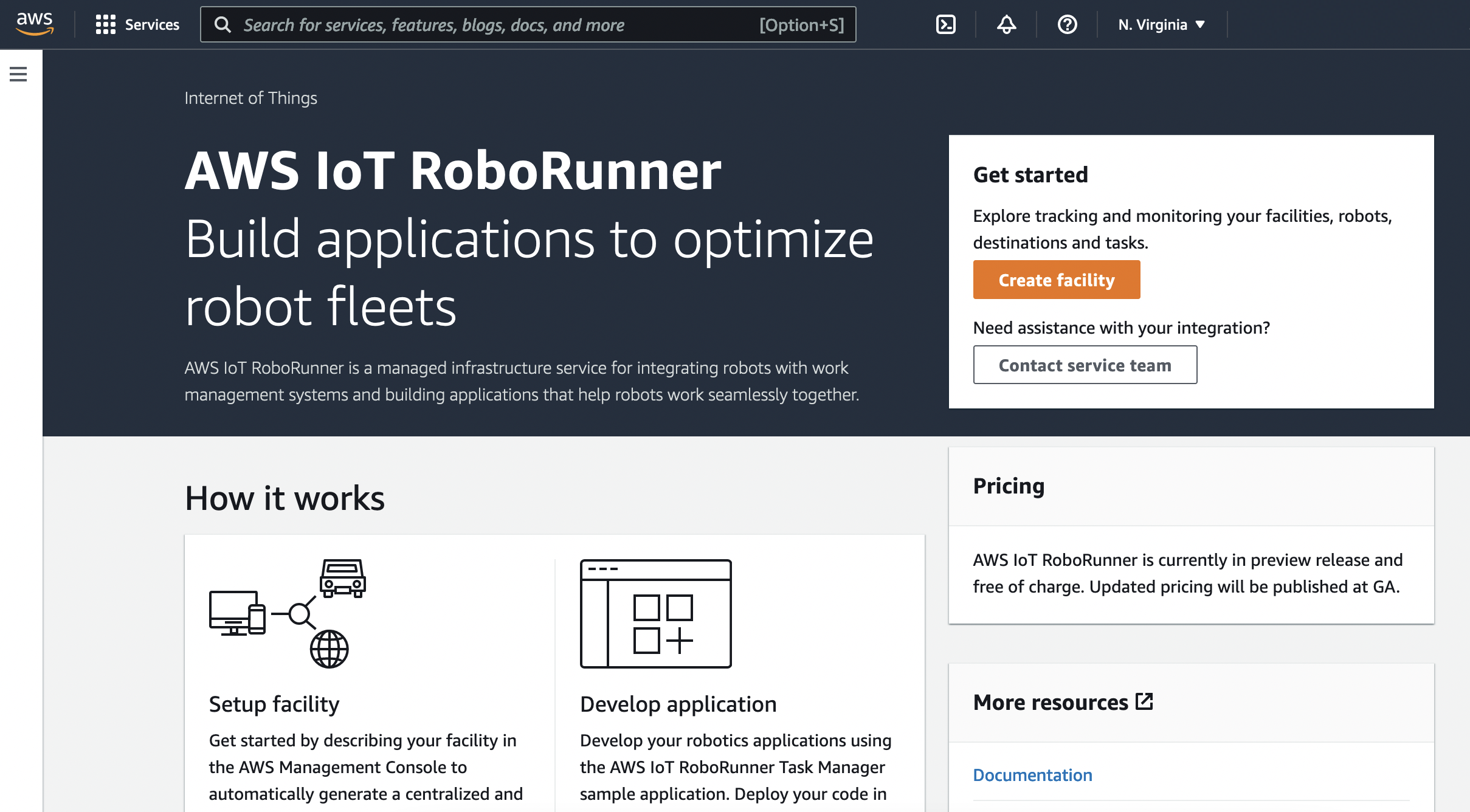

The architecture of UiPath Enterprise RPA Platform on AWS looks like the following diagram:

Figure 1 – UiPath Enterprise RPA Platform on AWS

By deploying the UiPath Enterprise RPA Platform on AWS, you can set up, operate, and scale workloads while keeping infrastructure cost in line with your process automation workload.

Prerequisites

For this walkthrough, you should have the following prerequisites:

- An AWS account

- AWS resources

- UiPath Enterprise RPA Platform software

- Basic knowledge of Amazon EC2, EC2 Auto Scaling, Amazon RDS, and Amazon Elasticsearch Service

- Basic knowledge of setting up Windows Server, IIS, SQL Server, and Elasticsearch

- Basic knowledge of Redis Enterprise, which is needed to set up the High Availability Add-on

- Basic knowledge of UiPath Studio, UiPath Robot, and UiPath Orchestrator

Deployment Steps

Deploy UiPath Studio

UiPath Studio deploys on a single machine. Amazon EC2 instances provide secure and resizable compute capacity in the cloud, and the ability to launch applications when needed without upfront commitments.

- Download the UiPath Enterprise RPA Platform. UiPath Studio is integrated in the installation package.

- Launch an EC2 instance with a Windows OS-based Amazon Machine Image (AMI) that meets the UiPath Studio hardware requirements and software requirements.

- Install the UiPath Studio software. For UiPath Studio installation steps, review the UiPath Studio Guide.

Optionally, you can save the installation and pre-configuration work completed for UiPath Studio as a custom Amazon Machine Image (AMI). Then, you can launch more UiPath Studio instances from this AMI. For details, visit Launch an EC2 instance from a custom AMI tutorial.

UiPath Robot Deployment

Each UiPath Robot deploys on a single machine with Amazon EC2. Amazon EC2 Auto Scaling helps you add or remove Robots as demand for automation workloads changes.

- Download the UiPath Enterprise RPA Platform. The UiPath Robot is integrated in the installation package.

- Launch an EC2 instance with a Windows OS-based Amazon Machine Image (AMI) that meets the UiPath Robot hardware requirements and software requirements.

- Install the business application (Microsoft Office, SAP, etc.) required for your business processes. Alternatively, select the business application AMI from the AWS Marketplace.

- Install the UiPath Robot software. For UiPath Robot installation steps, review Installing the Robot.

Optionally, you can save the installation and pre-configuration work completed for UiPath Robot as a custom Amazon Machine Image (AMI). You can then create launch templates that hold the instance configuration information, create Auto Scaling groups from those launch templates, and scale the Robots.

Scale the Robots’ Capacity

Amazon EC2 Auto Scaling groups let you use scaling policies to scale compute capacity based on resource use. By monitoring the process queue and creating a customized scaling policy, you can scale the UiPath Robots automatically based on the workload. For details, review Scaling the size of your Auto Scaling group.
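As a rough illustration of a queue-driven scaling policy, the following Python sketch computes how many Robot instances an Auto Scaling group would need for a given backlog. The function name, the `jobs_per_robot` figure, and the minimum and maximum bounds are hypothetical and not part of UiPath or AWS; how you read the pending job count from Orchestrator is left out.

```python
import math


def desired_robot_count(pending_jobs: int, jobs_per_robot: int,
                        min_robots: int = 1, max_robots: int = 10) -> int:
    """Return the number of Robot instances needed for the current backlog.

    pending_jobs   -- jobs waiting in the process queue (assumed input)
    jobs_per_robot -- backlog one Robot is expected to absorb (assumed tuning value)
    """
    if jobs_per_robot <= 0:
        raise ValueError("jobs_per_robot must be positive")
    if min_robots > max_robots:
        raise ValueError("min_robots must not exceed max_robots")
    # Round up so that a partial batch of jobs still gets a Robot.
    needed = math.ceil(max(pending_jobs, 0) / jobs_per_robot)
    # Clamp to the Auto Scaling group's size limits.
    return max(min_robots, min(max_robots, needed))
```

One way to use the result is to set it as the group's desired capacity, or to publish the backlog per Robot as a custom CloudWatch metric and let a target tracking policy act on it. Which approach fits depends on how quickly your Robots start and how bursty the queue is.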

Use the Robot Logs

UiPath Robot generates multiple diagnostic and execution logs. Amazon CloudWatch provides log collection, storage, and analysis, and gives you complete visibility into the Robots and automation tasks. For CloudWatch agent setup on the Robot instances, review Quick Start: Enable Your Amazon EC2 Instances Running Windows Server to Send logs to CloudWatch Logs.
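To make the log collection step more concrete, here is a minimal Python sketch that builds a log section in the JSON format used by the unified CloudWatch agent. The log directory, the `*_Execution.log` file pattern, and the log group name are assumptions for illustration; check where your Robot version actually writes its logs before using them.

```python
import json


def robot_log_agent_config(log_dir: str, log_group: str) -> dict:
    """Build a CloudWatch agent 'logs' section for Robot execution logs.

    log_dir   -- Windows directory holding the Robot logs (assumed location)
    log_group -- CloudWatch Logs group to write to (hypothetical name)
    """
    return {
        "logs": {
            "logs_collected": {
                "files": {
                    "collect_list": [
                        {
                            # Wildcard picks up one execution log per day (assumed naming).
                            "file_path": log_dir.rstrip("\\") + "\\*_Execution.log",
                            "log_group_name": log_group,
                            # The agent substitutes the EC2 instance ID at run time.
                            "log_stream_name": "{instance_id}",
                        }
                    ]
                }
            }
        }
    }


if __name__ == "__main__":
    config = robot_log_agent_config("C:\\RobotLogs", "uipath/robot-execution")
    print(json.dumps(config, indent=2))
```

Using the instance ID as the stream name keeps each Robot's log in its own stream, which helps when Auto Scaling adds and removes instances.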

Monitor the Automation Jobs

UiPath Robot uses the user interface to capture data and manipulate applications. When UiPath Robot runs, it is important to capture processing screens for troubleshooting and auditing. You can build this screen capture activity into the process when you design it in UiPath Studio.

Amazon S3 provides cost-effective storage for retaining all Robot logs and processing screen captures. Amazon S3 Object Lifecycle Management automates the transition between different storage classes, and helps you manage the screenshots so that they are stored cost effectively throughout their lifecycle. For lifecycle policy creation, review How Do I Create a Lifecycle Policy for an S3 Bucket?.
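A lifecycle policy for the screenshots could look like the following Python sketch. It builds a dictionary in the shape that the S3 lifecycle configuration API expects (for example, the `LifecycleConfiguration` argument of boto3's `put_bucket_lifecycle_configuration`). The rule ID, the key prefix, and the retention periods are illustrative assumptions, not recommendations from UiPath or AWS.

```python
def screenshot_lifecycle_configuration(prefix: str,
                                       ia_after_days: int = 30,
                                       glacier_after_days: int = 90,
                                       expire_after_days: int = 365) -> dict:
    """Build an S3 lifecycle configuration for Robot screenshots.

    Objects under `prefix` move to Standard-IA, then to Glacier,
    and are deleted after `expire_after_days`.
    """
    if ia_after_days < 30:
        raise ValueError("Standard-IA transitions need objects at least 30 days old")
    if glacier_after_days < ia_after_days + 30:
        # Assumption: keep objects in Standard-IA for 30 days before moving on.
        raise ValueError("Glacier transition must be 30+ days after the IA transition")
    if expire_after_days <= glacier_after_days:
        raise ValueError("expiration must come after the Glacier transition")
    return {
        "Rules": [
            {
                "ID": "robot-screenshots",  # hypothetical rule name
                "Filter": {"Prefix": prefix},
                "Status": "Enabled",
                "Transitions": [
                    {"Days": ia_after_days, "StorageClass": "STANDARD_IA"},
                    {"Days": glacier_after_days, "StorageClass": "GLACIER"},
                ],
                "Expiration": {"Days": expire_after_days},
            }
        ]
    }
```

Scoping the rule to a prefix such as `screenshots/` leaves other objects in the bucket, such as Robot logs, under their own retention rules.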

UiPath Orchestrator Deployment

Deployment Components

UiPath Orchestrator Server Platform has many logical components, grouped in three layers:

- presentation layer

- web service layer

- persistence layer

The presentation layer and web service layer are built into one ASP.NET website. The persistence layer contains SQL Server and Elasticsearch. There are three deployment components to be set up:

- web application

- SQL Server

- Elasticsearch

The Web Server, SQL Server, and Elasticsearch Server each require a different environment. Review the hardware requirements and software requirements for more details.

Note: set up the Web Server, SQL Server, Elasticsearch Server environments before running the UiPath Enterprise Platform installation wizard.

Set up Web Server with Amazon EC2

UiPath Orchestrator Web Server deploys on Windows Server with IIS 7.5 or later. For details, review the software requirements.

AWS provides various AMIs for Windows Server that can help you set up the environment required for the Web Server.

The Microsoft Windows Server 2019 Base AMI includes most prerequisites for installation except some features of Web Server (IIS) to be enabled. For configuration steps, review Server Roles and Features.

Place the Web Server in the appropriate subnet (public or private) and attach a security group that allows inbound HTTPS traffic, according to your business requirements. Review Allow user to connect EC2 on HTTP or HTTPS.
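The security group rule for HTTPS access can be sketched in Python as follows. The function returns one entry in the shape used by the `IpPermissions` parameter of the EC2 `authorize_security_group_ingress` API; the CIDR range and the description text are hypothetical and should reflect where your users and Robots connect from.

```python
import ipaddress


def https_ingress_rule(cidr: str,
                       description: str = "HTTPS to Orchestrator") -> dict:
    """Build a security group ingress rule that allows HTTPS from one IPv4 range."""
    # strict=True rejects ranges with host bits set, such as 10.0.0.1/16.
    network = ipaddress.ip_network(cidr, strict=True)
    if network.version != 4:
        raise ValueError("this sketch only handles IPv4 ranges")
    return {
        "IpProtocol": "tcp",
        "FromPort": 443,
        "ToPort": 443,
        "IpRanges": [{"CidrIp": str(network), "Description": description}],
    }
```

Restricting the source range to your corporate network or VPC, rather than `0.0.0.0/0`, is usually the safer choice for an administration portal.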

Set up SQL Server with Amazon RDS

Amazon Relational Database Service (Amazon RDS) provides you with a managed database service. With a few clicks, you can set up, operate, and scale a relational database in the AWS Cloud.

Amazon RDS supports the SQL Server engine. For UiPath Orchestrator, both Standard Edition and Enterprise Edition are supported. For details, review software requirements.

Amazon RDS can be set up across multiple Availability Zones to meet requirements for high availability.

UiPath Orchestrator can connect to the created Amazon RDS database with SQL Server Authentication.
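As an illustration of SQL Server Authentication against an RDS endpoint, the Python sketch below assembles a connection string in the common ADO.NET style. The endpoint, database name, and login are placeholders, and the installer may ask for these values as separate fields rather than as one string.

```python
def orchestrator_connection_string(endpoint: str, database: str,
                                   user: str, password: str,
                                   port: int = 1433) -> str:
    """Build a SQL Server Authentication connection string for an RDS endpoint.

    All argument values are placeholders supplied by the caller.
    """
    fields = (("endpoint", endpoint), ("database", database),
              ("user", user), ("password", password))
    for name, value in fields:
        # Keep the sketch simple: refuse values that would need quoting.
        if not value or ";" in value:
            raise ValueError(f"{name} must be non-empty and must not contain ';'")
    # SQL Server uses 'host,port' rather than 'host:port'.
    return (f"Server={endpoint},{port};Database={database};"
            f"User Id={user};Password={password};")
```

In practice, read the password from a secret store instead of hardcoding it, and make sure the RDS security group allows traffic on the database port from the Web Server.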

Set up Elasticsearch Server with Amazon Elasticsearch Service (Amazon ES)

Amazon ES is a fully managed service that lets you deploy, secure, and operate Elasticsearch at scale, generally with zero downtime.

Amazon ES provides a managed ELK stack, with no upfront costs or usage requirements, and without the operational overhead.

All messages logged by UiPath Robots are sent through the Logging REST endpoint to the Indexer Server where they are indexed for future utilization.

Install UiPath Orchestrator on the Web Server

After the Web Server, SQL Server, and Elasticsearch Server environments are ready, download the UiPath Enterprise RPA Platform and install it on the Web Server.

The UiPath Enterprise Platform installation wizard guides you in configuring and setting up each environment, including connecting to SQL Server and configuring the Elasticsearch API URL.

After you complete setup, the UiPath Orchestrator Portal is available for you to visit and manage processes, jobs, and robots.

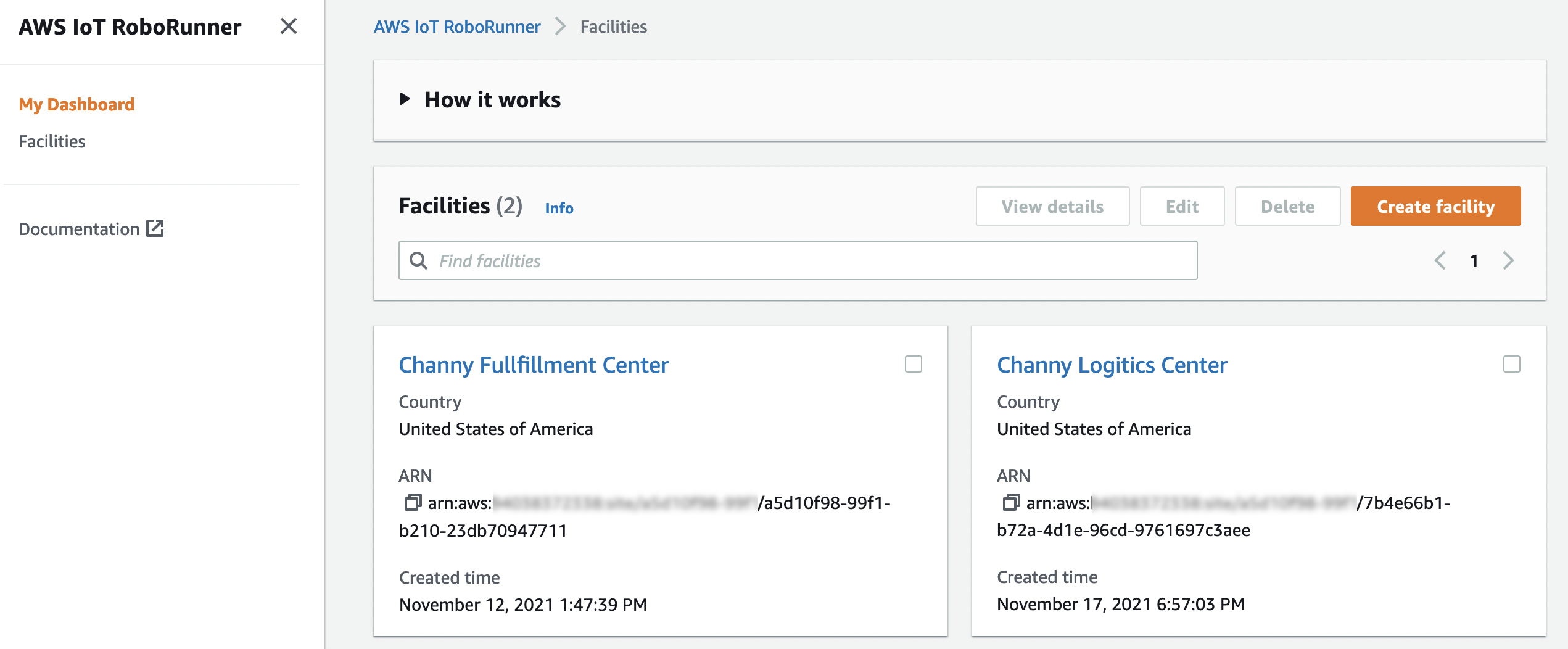

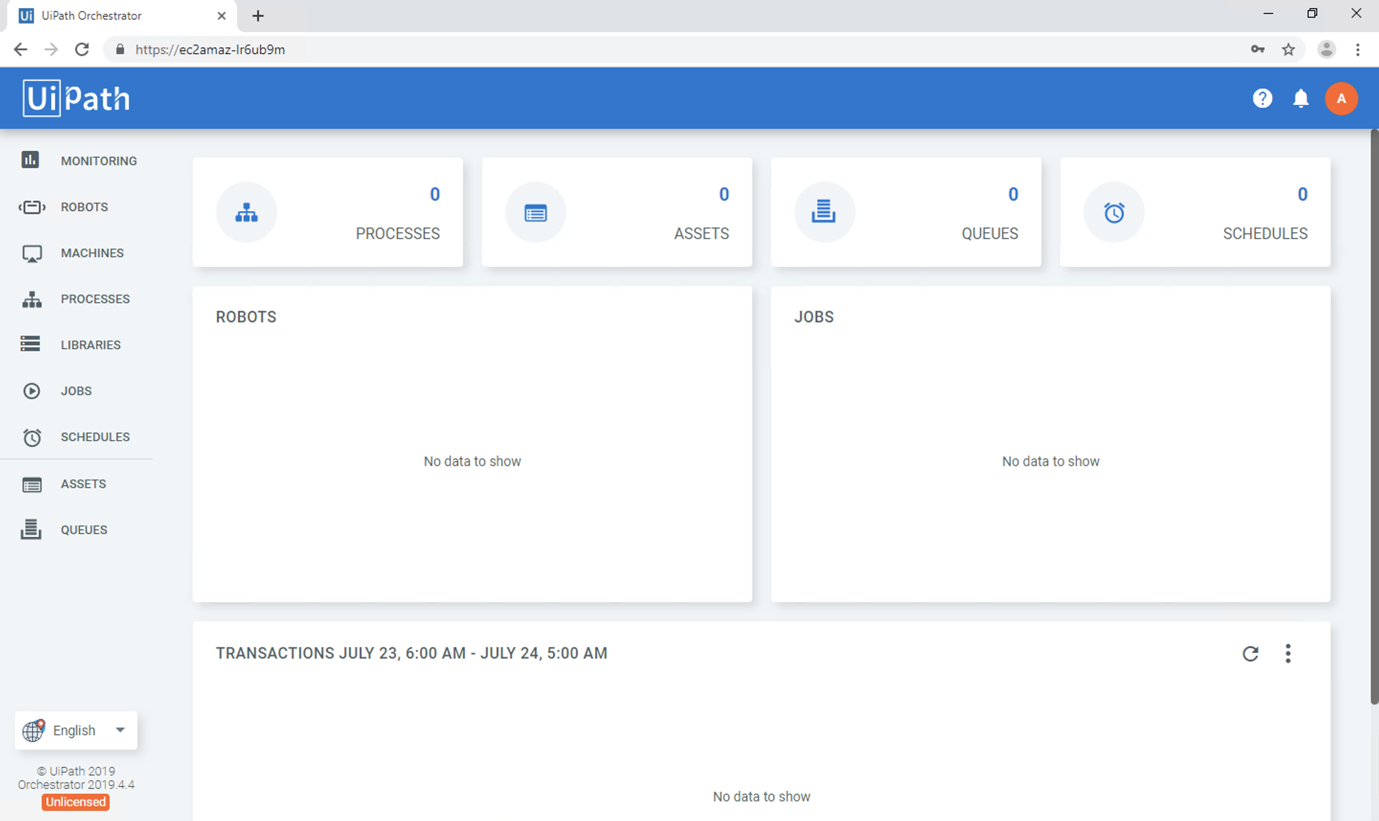

The UiPath Orchestrator dashboard appears like in the following screenshot:

Figure 2- UiPath Orchestrator Portal

Set up Orchestrator High Availability Architecture

One Orchestrator can handle many robots in a typical configuration, but any product running on a single server is vulnerable to failure if something happens to that server.

The High Availability Add-on (HAA) enables you to add a second Orchestrator server to your environment that is kept synchronized with the first server.

To set up multi-node deployment, launch Amazon EC2 instances with a Linux OS-based Amazon Machine Image (AMI) that meets the HAA hardware and software requirements. Follow the installation guide to set up HAA.

Elastic Load Balancing automatically distributes incoming application traffic across multiple targets. Set up a Network Load Balancer in front of the Orchestrator nodes so that Robots can communicate with the multi-node Orchestrator.

Cleaning up

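To avoid incurring future charges, delete all the resources you created for this walkthrough, including the EC2 instances and Auto Scaling groups, custom AMIs, the Amazon RDS database, the Amazon ES domain, the load balancer, and any S3 buckets.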

Conclusion

In this post, I showed you how to deploy the UiPath Enterprise RPA Platform on AWS to further optimize and automate your business processes. AWS services like Amazon EC2, Amazon RDS, and Amazon Elasticsearch Service help you set up the environment with high availability. This reduces the maintenance effort for backend services and makes it easier to scale Orchestrator capabilities. Amazon EC2 Auto Scaling helps you add or remove robots as demand for automation workloads changes.

To learn more about how to integrate UiPath with AWS services, check out The UiPath and AWS partnership.

Field Notes provides hands-on technical guidance from AWS Solutions Architects, consultants, and technical account managers, based on their experiences in the field solving real-world business problems for customers.