Post Syndicated from LGR original https://www.youtube.com/watch?v=e1pehz1hNtk

Three Stories of the Dreaded "88."

Post Syndicated from The History Guy: History Deserves to Be Remembered original https://www.youtube.com/watch?v=BpNYTA6Ax5o

Threat Model Humor

Post Syndicated from Bruce Schneier original https://www.schneier.com/blog/archives/2021/03/threat-model-humor.html

At a hospital.

The Teams Dashboard: Finding a Product Voice

Post Syndicated from Alice Bracchi original https://blog.cloudflare.com/the-teams-dashboard-finding-a-product-voice/

My name is Alice Bracchi, and I’m the technical and UX writer for Cloudflare for Teams, Cloudflare’s Zero Trust and Secure Web Gateway solution.

Today I want to talk about product voice — what it is, why it matters, and how I set out to find a product voice for Cloudflare for Teams.

On the Cloudflare for Teams Dashboard (or as we informally call it, “the Teams Dash”), our customers have full control over the security of their network. Administrators can replace their VPN with a solution that runs on Zero Trust rules, turning Cloudflare’s network into their secure corporate network. Customers can secure all traffic by configuring L7 firewall rules and DNS filtering policies, and organizations have the ability to isolate web browsing to suspicious sites.

All in one place.

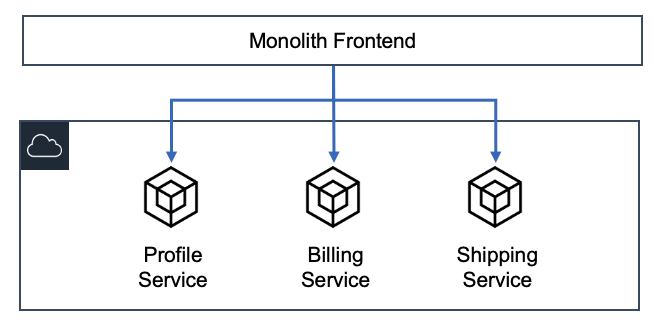

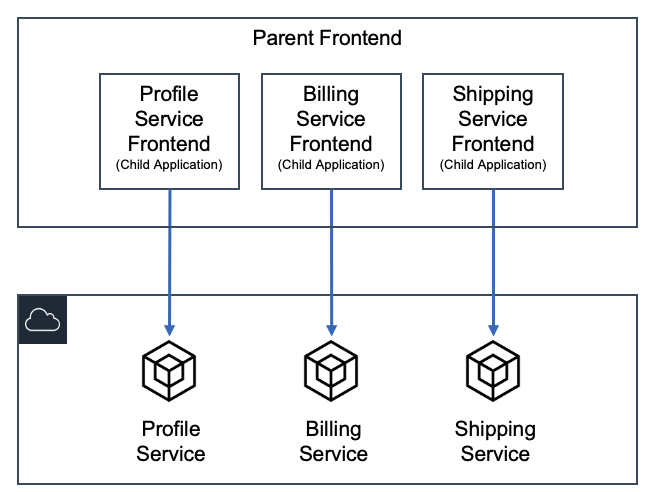

As you can see, a lot of action takes place on the Teams Dash. As an interface, it grows and changes at a rapid pace. This poses a lot of interesting challenges from a design point of view — in our early days, because we were focused on solving problems fast, many of our experiences ended up feeling a bit disjointed. Sure, users were able to follow paths within any given feature, but those features did not always work across the Dash in a seamless way.

Earlier this week we talked about how we’re leaving our “solution pollution” days behind and moving towards a design-led approach. To me, as the writer on the team, this means it’s time to step up our UX writing game and find our own product voice: a unique voice that reflects our product identity and speaks to our users in a recognizable “Teams way”.

But what exactly is a product voice?

As users, we love experiences and products we recognize. We’re loyal to them. It’s all about consistency, and the sense of familiarity that comes with it. When design and copy work hand in hand to convey a consistent feel, we soon learn to recognize the personality of an interface. Because every little detail has been curated for us, we’re rarely caught by surprise — our experience just feels smooth.

Think about it in terms of human interactions. When picking up a call from a friend, we immediately recognize their voice. We don’t think about the why or how — we just unconsciously do, and start chatting away. However, imagine that friend suddenly uttered a sentence in a completely different voiceprint (spooky, right?). Imagine they started using words or expressions that never belonged in their vocabulary. We would notice right away.

Interactions through UX writing work in a similar way. Users notice right away when a piece of copy doesn’t sound as it should. So when working on copy for our interface, we need a consistent, recognizable product voice. A product voice is a set of principles and guidelines that standardize how we sound to our users. It will determine whether we put exclamation marks in our greetings (“Welcome!”), whether we include interjections in our error messages (“Uh-oh!”), whether we address the user with “you” or prefer a more impersonal approach. It will show our personality and shape what users can expect from us.

And the Teams dashboard needed just that — to find its own voice.

Hundreds of sticky notes

A voice isn’t going to be very successful for a product if it only sounds right to the writer crafting it, I reasoned. It needs to ring true to the people who build and breathe the product every day — our product managers, our designers, our engineers. In the end, a product voice will truly shine only if it’s aligned with product principles. And as a product team, we’d been so caught up shipping features and solving problems that we’d never sat down to brainstorm on our principles.

So the path was clear to me.

- First, we needed to define our product principles.

- From our principles, we would derive a product voice that matched our core values.

- Last but not least, we would draft UX writing guidelines on how to write in our newly found product voice.

My idea was for this process to be as collaborative as possible, so I set up a series of brainstorming sessions with my teammates. I met with the product managers first, then with designers, engineers, and finally the marketing/go-to-market team. Each group gathered around a virtual board, and received the same prompts from me. I asked participants to focus on the ideal product they wanted Teams to grow into. Everyone worked independently on their own corner of the board — I was interested in every participant’s uninfluenced inputs.

Here are the prompts I gave:

- List all the words you associate with Teams.

We called this question the “brain dump.” I gave people two and a half minutes to be instinctive, creative, and give me all the words they could think of.

- Teams helps users by _______.

With this question, I wanted people to focus on our everyday life. What do we do for our customers? Which problems are we trying to solve?

- In terms of experience, I’d love users to associate Teams with ____ (brand).

Again, I was after instinctive associations. Ideally, I wanted a list of websites I could later explore to see whether we could draw inspiration from them in terms of content.

- Teams is unique because [it’s] ________.

I asked people to focus on the qualities that set us apart in the market. What makes the product stand out?

Once I had all the answers, I classified sticky notes by lexical and conceptual association. Some patterns emerged. We had sticky notes describing who we are, who we’re not, what we do, our features, our technology, and what we care about. Once every sticky note had been grouped, I had a pretty good idea of the themes I could work with to draft our product principles.

The words behind our product principles

I labeled each theme/principle with an adjective that could represent it and that could answer the question: what kind of product do we want to be for our users?

- Reassuring. This was the first principle I worked on. Semantically, it reflects the core purpose of Teams — we’re a network security product, so our job is to protect. Under this principle I gathered all the words pertaining to the concepts of security, protection, and reassurance. People even used metaphors to express this concept: we’re a bodyguard. An armored truck.

- Transparent. Another popular theme was our extensive analytics features, and the visibility they give to our admin users. This principle groups words whose root is in one way or another connected to the sense of sight: observing, monitoring, visibility, keeping an eye on. Interestingly enough, other words were more oriented towards the semantics of forensics: investigate, find, detect. For the main descriptor, I finally settled on transparent, because our product is a pane of glass (another metaphor that was used) that the admin can see through and know instantly whether something needs investigating.

- Easy to use. This is a very ambitious principle for us. Network security is not an easy topic — it is our job to make it easy. All groups I brainstormed with gave huge importance to simplicity in one shape or another. Many stated our interface needs to be clean, accessible, approachable, digestible, direct. But we also vow to be inclusive, helpful and guiding, and never to assume knowledge.

- Trailblazing. There was a clear theme around Teams being new on the market, but already showing the way. Modern recurred in most brainstorming sessions. Closely related descriptors, but stronger, were visionary and trailblazing, which I ended up choosing as the title of this principle, because it conveys the energy of a product that’s energetic and fresh.

- Frictionless. This principle is all about a product that just works. Some words I’ve grouped under this principle describe two ways in which Teams aims at removing friction. First, Teams should aim at integrating with other systems. Second, Teams should be invisible. Our product is designed to be hardly noticeable by end users, and works behind the scenes.

- Adaptive. This principle has two sides to it. The first is represented by resilience and Teams’ ability to adapt to circumstances (think concepts like adaptable, ready to change, and built in 2020). The second side is more about our ability to adapt to user needs. Here’s where our user-centered nature comes out: we let user needs shape our evolution as a product.

What about our voice?

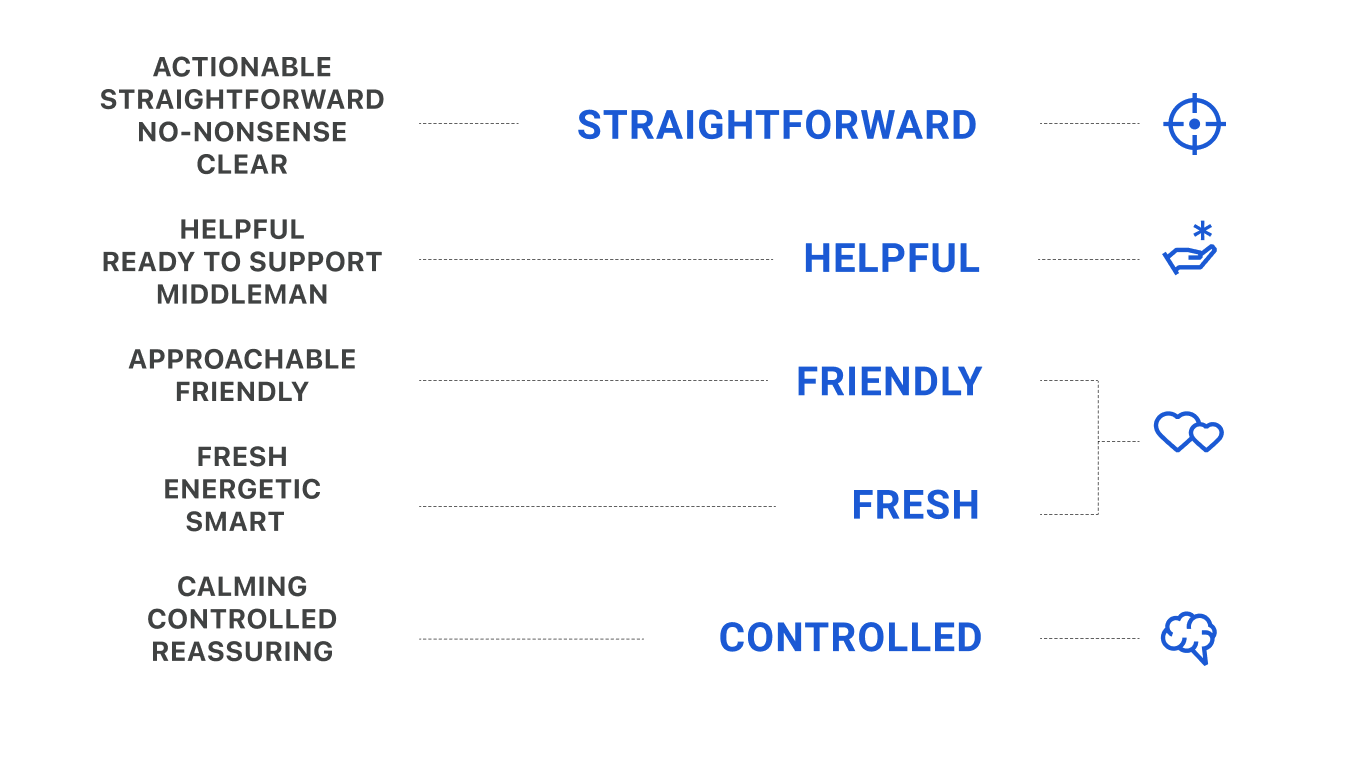

I went back to my sticky notes, this time to find and group words that could help us define the product’s personality, or more specifically, its attitude towards communication. Out of those groups, I chose five descriptors:

- Straightforward. We know the value of effective and concise language. We give the right amount of information at the right time.

- Helpful. We offer tips and guidance, and we ensure users are never left to figure things out by themselves.

- Friendly. We’re happy our users are around. We empathize with them. We’re the warm and welcoming ones.

- Fresh. We’re a new, informal, geeky product. We address the user as if they were sitting beside us. We’re like a nerdy friend offering to fix your computer.

- Controlled. We’re in control. No panic, no crazy excitement. We do not overreact.

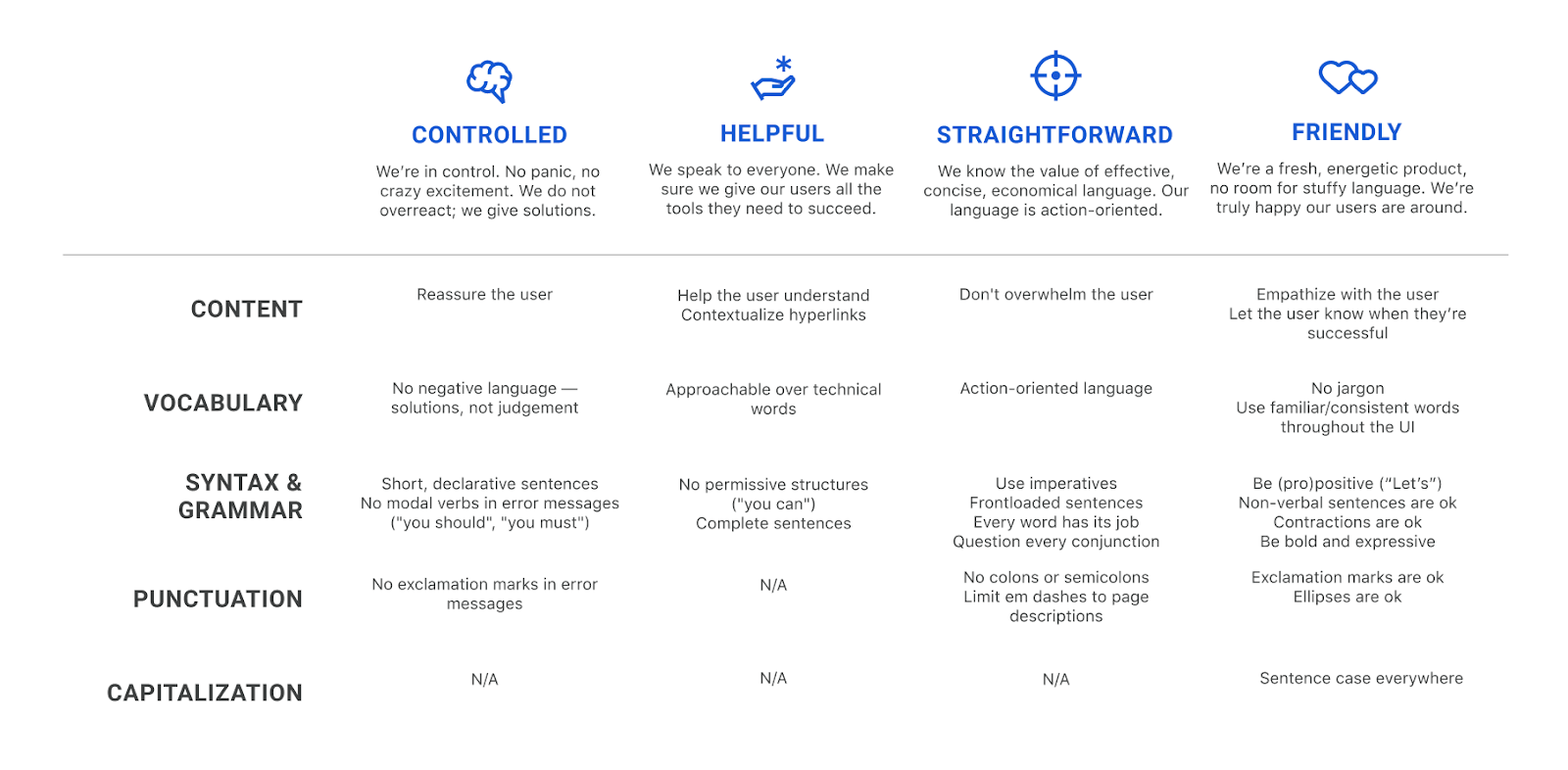

As a next step, I crafted a voice matrix, slightly adapting Torrey Podmajersky’s approach in Strategic Writing for UX. I assigned a column to each voice trait and defined what each of them entails in terms of content, vocabulary, syntax, grammar, punctuation, and capitalization choices. This voice matrix summarizes the dos and don’ts of UX writing for the Teams Dashboard.

As I was filling out this chart, I noticed that most guidelines I came up with for the friendly trait also worked well for the fresh voice trait. Ultimately, I thought, it all boils down to a certain feeling of warmth in our communication — a feeling made possible both by our friendly nature and by our fresh, informal approach. In the end, I decided to merge those traits into the friendly principle.

What I learned

This project has been an incredible journey to the heart of the product. I cherish the many creative conversations I had with my teammates about Teams. It was a chance for us to hit pause for a second, forget about deadlines and our everyday tasks, take a step back and focus on why we’re building what we’re building. It feels really good to have our principles written down, and we want to publish them soon on our product page so you can explore them.

Naturally, the project has also helped my writing tremendously. Every time I sit down to write a line of UX copy, I don’t just refer back to these four voice descriptors and their guidelines — I also write with the six product principles firmly in the back of my mind.

I’ve bookmarked the board with our sticky notes in my browser. It’s always there for me, and it contains the raw material I fall back on whenever I need inspiration.

What’s next

This is just the beginning and the high-level structure of our strategy. In time and with iteration, we’ll build out these principles to become full-fledged UX writing guidelines, as well as a set of patterns that will allow us to achieve true consistency throughout the Teams Dashboard. Keep an eye on copy changes and see if you can hear our new voice take shape.

Next week we’ll introduce our Design team and their vision. Stay tuned!

Raspberry Pi thermal camera

Post Syndicated from Ashley Whittaker original https://www.raspberrypi.org/blog/raspberry-pi-thermal-camera/

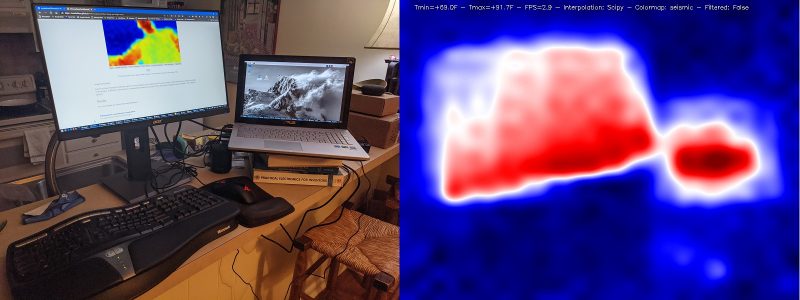

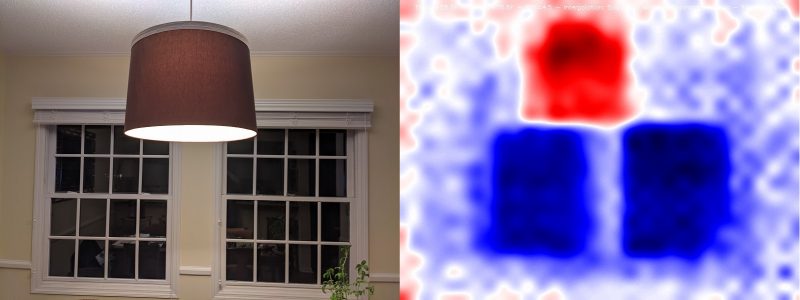

It has been a cold winter for Tom Shaffner, and since he is working from home and leaving the heating on all day, he decided it was finally time to see where his house’s insulation could be improved.

An affordable solution

His first thought was to get a thermal IR (infrared) camera, but he found the price hasn’t yet come down as much as he’d hoped. They range from several thousand dollars down to a few hundred, with a $50 option just to rent one from a hardware store for 24 hours.

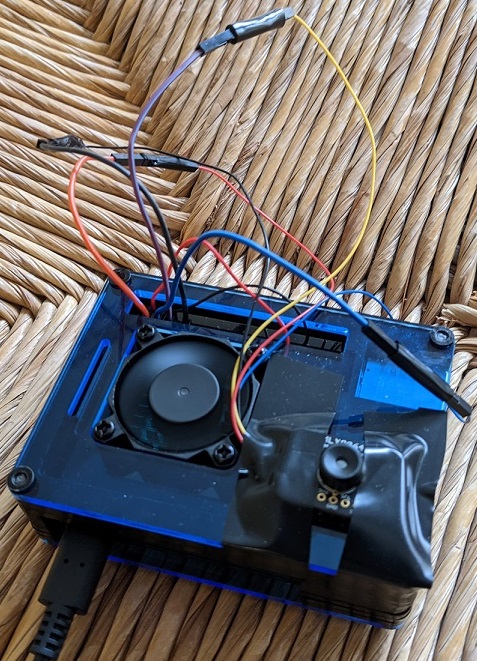

When he saw the $50 option, he realised he could just buy the $60 (£54) MLX90640 Thermal Camera from Pimoroni and attach it to a Raspberry Pi. Tom used a Raspberry Pi 4 for this project. Problem affordably solved.

A joint open source effort

Once Tom’s hardware arrived, he took advantage of the opportunity to combine elements of several other projects that had caught his eye into a single, consolidated Python library that can be downloaded via pip and run both locally and as a web server. Tom thanks Валерий Курышев, Joshua Hrisko, and Adrian Rosebrock for their work, on which this solution was partly based.

Tom has also published everything on GitHub for further open source development by any enterprising individuals who are interested in taking this even further.

Quality images

The big question, though, was whether the image quality would be good enough to be of real use. A few years back, the best cheap thermal IR camera had only an 8×8 resolution – not great. The magic of the MLX90640 Thermal Camera is that for the same price the resolution jumps to 24×32, giving each frame 768 different temperature readings.

Add a bit of interpolation and image enlargement and the end result gets the job done nicely. Stream the video over your local wireless network, and you can hold the camera in one hand and your phone in the other to use as a screen.
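To give a feel for the "interpolation and image enlargement" step, here is a minimal pure-Python sketch of bilinear upscaling applied to a 24×32 grid. It is not taken from Tom's library: the function name `upscale_bilinear`, the enlargement factor, and the synthetic gradient standing in for sensor readings are all assumptions made for illustration.

```python
def upscale_bilinear(frame, factor):
    """Enlarge a 2D grid of temperatures by an integer factor (bilinear interpolation)."""
    rows, cols = len(frame), len(frame[0])
    out_rows, out_cols = rows * factor, cols * factor
    out = []
    for r in range(out_rows):
        # Map the output row back to a fractional row in the source grid.
        src_r = r * (rows - 1) / (out_rows - 1)
        r0 = int(src_r)
        r1 = min(r0 + 1, rows - 1)
        fr = src_r - r0
        row = []
        for c in range(out_cols):
            src_c = c * (cols - 1) / (out_cols - 1)
            c0 = int(src_c)
            c1 = min(c0 + 1, cols - 1)
            fc = src_c - c0
            # Blend the four neighbouring readings.
            top = frame[r0][c0] * (1 - fc) + frame[r0][c1] * fc
            bottom = frame[r1][c0] * (1 - fc) + frame[r1][c1] * fc
            row.append(top * (1 - fr) + bottom * fr)
        out.append(row)
    return out


# Synthetic 24x32 frame (768 readings); a real frame would come from the sensor.
frame = [[20.0 + 0.1 * (r + c) for c in range(32)] for r in range(24)]
big = upscale_bilinear(frame, 10)
print(len(big), "x", len(big[0]))
```

A real pipeline would more likely hand this step to an image library, but the idea is the same: each new pixel is a weighted blend of the nearest original readings.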

Bonus security feature

Bonus: If you leave the web server running when you’re finished thermal imaging, you’ve got yourself an affordable infrared security camera.

Documentation on the setup, installation, and results is available on Tom’s GitHub, along with more pictures of what you can expect.

And you can connect with Tom on LinkedIn if you’d like to learn more about this “technically savvy mathematical modeller”.

The post Raspberry Pi thermal camera appeared first on Raspberry Pi.

Comic for 2021.03.05

Post Syndicated from Explosm.net original http://explosm.net/comics/5811/

New Cyanide and Happiness Comic

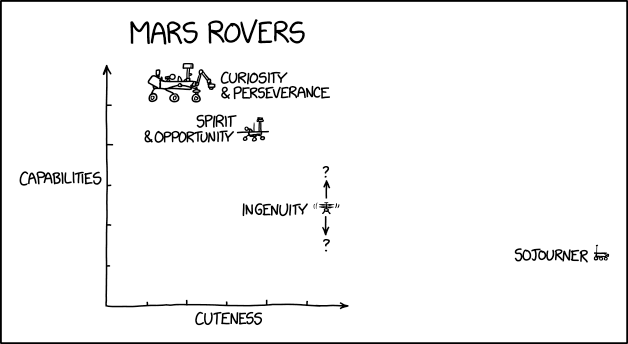

Mars Rovers

Post Syndicated from original https://xkcd.com/2433/

Using API destinations with Amazon EventBridge

Post Syndicated from James Beswick original https://aws.amazon.com/blogs/compute/using-api-destinations-with-amazon-eventbridge/

Amazon EventBridge enables developers to route events between AWS services, integrated software as a service (SaaS) applications, and your own applications. It can help decouple applications and produce more extensible, maintainable architectures. With the new API destinations feature, EventBridge can now integrate with services outside of AWS using REST API calls.

This feature enables developers to route events to existing SaaS providers that integrate with EventBridge, like Zendesk, PagerDuty, TriggerMesh, or MongoDB. Additionally, you can use other SaaS endpoints for applications like Slack or Contentful, or any other type of API or webhook. It can also provide an easier way to ingest data from serverless workloads into Splunk without needing to modify application code or install agents.

This blog post explains how to use API destinations and walks through integration examples you can use in your workloads.

How it works

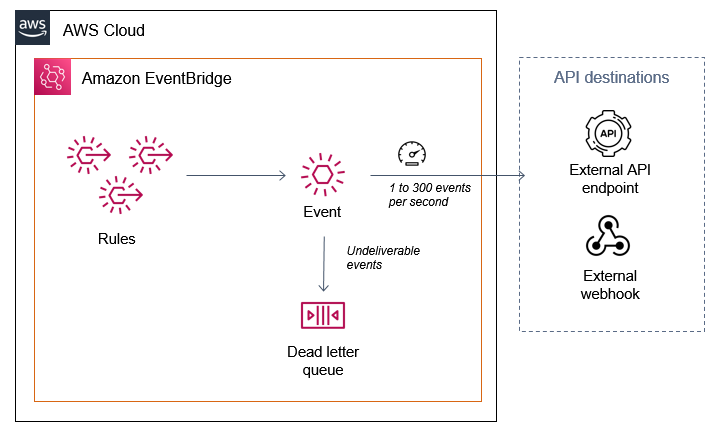

API destinations are third-party targets outside of AWS that you can invoke with an HTTP request. EventBridge invokes the HTTP endpoint and delivers the event as a payload within the request. You can use any preferred HTTP method, such as GET or POST. You can use input transformers to change the payload format to match your target.

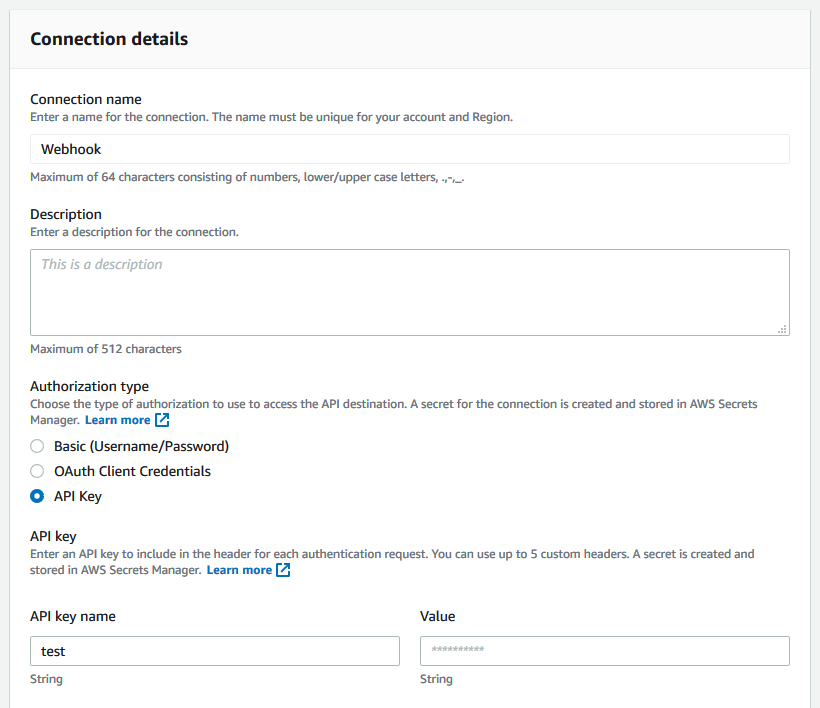

An API destination uses a Connection to manage the authentication credentials for a target. This defines the authorization type used, which can be an API key, OAuth client credentials grant, or a basic user name and password.

The service manages the secret in AWS Secrets Manager and the cost of storing the secret is included in the pricing for API destinations.

You create a connection to each different external API endpoint and share the connection with multiple endpoints. The API destinations console shows all configured connections, together with their authorization status. Any connections that cannot be established are shown here:

To create an API destination, you provide a name, the HTTP endpoint and method, and the connection:

When you configure the destination, you must also set an invocation rate limit between 1 and 300 events per second. This helps protect the downstream endpoint from surges in traffic. If the number of arriving events exceeds the limit, the EventBridge service queues up events. It delivers to the endpoint as quickly as possible within the rate limit.

It continues to do this for 24 hours. To make sure you retain any events that cannot be delivered, set up a dead-letter queue on the event bus. This ensures that if the event is not delivered within this timeframe, it is stored durably in an Amazon SQS queue for further processing. This can also be useful if the downstream API experiences an outage for extended periods of time.
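As a rough back-of-the-envelope aid for choosing a rate limit, the arithmetic can be sketched in a few lines of Python. This is only a simplified model of the behavior described above (a single burst, steady delivery at the limit, no retries), and the function names are hypothetical rather than part of any AWS SDK.

```python
import math


def drain_time_seconds(queued_events, rate_limit_per_second):
    """Seconds needed to deliver a burst of queued events at a fixed rate limit."""
    if not 1 <= rate_limit_per_second <= 300:
        raise ValueError("rate limit must be between 1 and 300 events per second")
    return math.ceil(queued_events / rate_limit_per_second)


def max_deliverable(rate_limit_per_second, window_hours=24):
    """Upper bound on events that can be delivered within the delivery window."""
    return rate_limit_per_second * window_hours * 3600


# A burst of one million events at the maximum limit of 300 events per second.
print(drain_time_seconds(1_000_000, 300))  # 3334 seconds, a little under an hour
print(max_deliverable(300))                # 25920000 events in 24 hours
print(max_deliverable(10))                 # 864000 events in 24 hours
```

Under this model, a backlog that exceeds the second figure cannot be cleared within the 24-hour window, which is the situation a dead-letter queue is meant to catch.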

Once you have configured the API destination, it becomes available in the list of targets for rules. Matching events are sent to the HTTP endpoint with the event serialized as part of the payload.
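To make "matching events" concrete, here is a small Python sketch of exact-value pattern matching. It is a deliberately simplified stand-in for EventBridge's matching, covering only patterns where each field lists its allowed values (optionally nested); the function name `matches` and the sample events are assumptions for illustration.

```python
def matches(pattern, event):
    """Return True if every field in the pattern matches the event.

    Leaf values in the pattern are lists of allowed exact values;
    nested dictionaries are matched recursively.
    """
    for key, expected in pattern.items():
        if key not in event:
            return False
        actual = event[key]
        if isinstance(expected, dict):
            if not isinstance(actual, dict) or not matches(expected, actual):
                return False
        elif actual not in expected:
            return False
    return True


pattern = {"source": ["aws.s3"]}
s3_event = {"source": "aws.s3", "detail": {"eventName": "PutObject"}}
ec2_event = {"source": "aws.ec2", "detail": {}}

print(matches(pattern, s3_event))   # True
print(matches(pattern, ec2_event))  # False
```

Only events for which the pattern matches would be forwarded to the API destination target.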

As with API Gateway targets in EventBridge, the maximum timeout for API destination is 5 seconds. If an API call exceeds this timeout, it is retried.

Debugging the payload from API destinations

You can send an event via API Destinations to debugging tools like Webhook.site to view the headers and payload of the API call:

- Create a connection with the credential, such as an API key.

- Create an API destination with the webhook URL endpoint and then create a rule to match and route events.

- The Webhook.site testing service shows the headers and payload once the webhook is triggered. This can help you test rules if you are adding headers or manipulating the payload using an input transformer.

Customizing the payload

Third-party APIs often require custom headers or payload formats when accepting data. EventBridge rules allow you to customize header parameters, query strings, and payload formats without the need for custom code. Header parameters and query strings can be configured with static values or attributes from the event:

To customize the payload, configure an input transformer, which consists of an Input Path and Input template. You use an Input Path to define variables and use JSONPath query syntax to identify the variable source in the event. For example, to extract two attributes from an Amazon S3 PutObject event, the Input Path is:

    {
      "key" : "$.[0].s3.object.key",
      "bucket" : "$.[0].s3.bucket.name"
    }

Next, the Input template defines the structure of the data passed to the target, which references the variables. With this release you can now use variables inside quotes in the input transformer. As a result, you can pass these values as a string or JSON, for example:

    {
      "filename" : "<key>",
      "container" : "mycontainer-<bucket>"
    }
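The two-stage idea (extract variables with an Input Path, then substitute them into an Input template) can be emulated locally in a few lines of Python. This is only a sketch of the concept, not how EventBridge implements it: it handles plain dotted paths such as `$.detail.key` and string substitution inside quotes, and the event shape, paths, and function names here are assumptions chosen for illustration.

```python
import json
import re


def resolve(path, event):
    """Resolve a simple dotted path such as '$.detail.key' (no filters or indexes)."""
    value = event
    for part in path.lstrip("$").strip(".").split("."):
        value = value[part]
    return value


def transform(input_paths, template, event):
    """Extract variables from the event, then substitute <name> placeholders."""
    variables = {name: resolve(path, event) for name, path in input_paths.items()}
    return re.sub(r"<(\w+)>", lambda m: str(variables[m.group(1)]), template)


# Hypothetical event and paths, simplified for this sketch.
event = {"detail": {"key": "report.csv", "bucket": "logs"}}
input_paths = {"key": "$.detail.key", "bucket": "$.detail.bucket"}
template = '{"filename": "<key>", "container": "mycontainer-<bucket>"}'

payload = transform(input_paths, template, event)
print(json.loads(payload))
```

Running a quick local check like this can help confirm that a template produces the JSON shape a third-party API expects before the rule is deployed.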

Sending AWS events to DataDog

Using API destinations, you can send any AWS-sourced event to third-party services like DataDog. This approach uses the DataDog API to put data into the service. To do this, you must sign up for an account and create an API key. In this example, I send S3 events via CloudTrail to DataDog for further analysis.

- Navigate to the EventBridge console and select API destinations from the menu.

- Select the Connections tab and choose Create connection:

- Enter a connection name, then select API Key for Authorization Type. Enter the API key name DD-API-KEY and paste your secret API key as the value. Choose Create.

- In the API destinations tab, choose Create API destination.

- Enter a name, set the API destination endpoint to https://http-intake.logs.datadoghq.com/v1/input, and the HTTP method to POST. Enter 300 for Invocation rate limit and select the DataDog connection from the dropdown. Choose Create.

- From the EventBridge console, select Rules and choose Create rule. Enter a name, select the default bus, and enter this event pattern:

    { "source": ["aws.s3"] }

- In Select targets, choose API destination and select DataDog for the API destination. Expand Configure input.

- In the Input transformer section, enter {"detail":"$.detail"} in the Input Path field and enter {"message": <detail>} in the Input Template.

- You can optionally add a dead letter queue. To do this, open the Retry policy and dead-letter queue section. Under Dead-letter queue, select an existing SQS queue.

- Choose Create.

- Open AWS CloudShell and upload an object to an S3 bucket in your account to trigger an event:

    echo "test" > testfile.txt
    aws s3 cp testfile.txt s3://YOUR_BUCKET_NAME

- The logs appear in the DataDog Logs console, where you can process the raw data for further analysis:

Sending AWS events to Zendesk

Zendesk is a SaaS provider that provides customer support solutions. It can already send events to EventBridge using a partner integration. This post shows how you can consume ticket events from Zendesk and run a sentiment analysis using Amazon Comprehend.

With API destinations, you can now use events to call the Zendesk API to create and modify tickets and interact with chats and customer profiles.

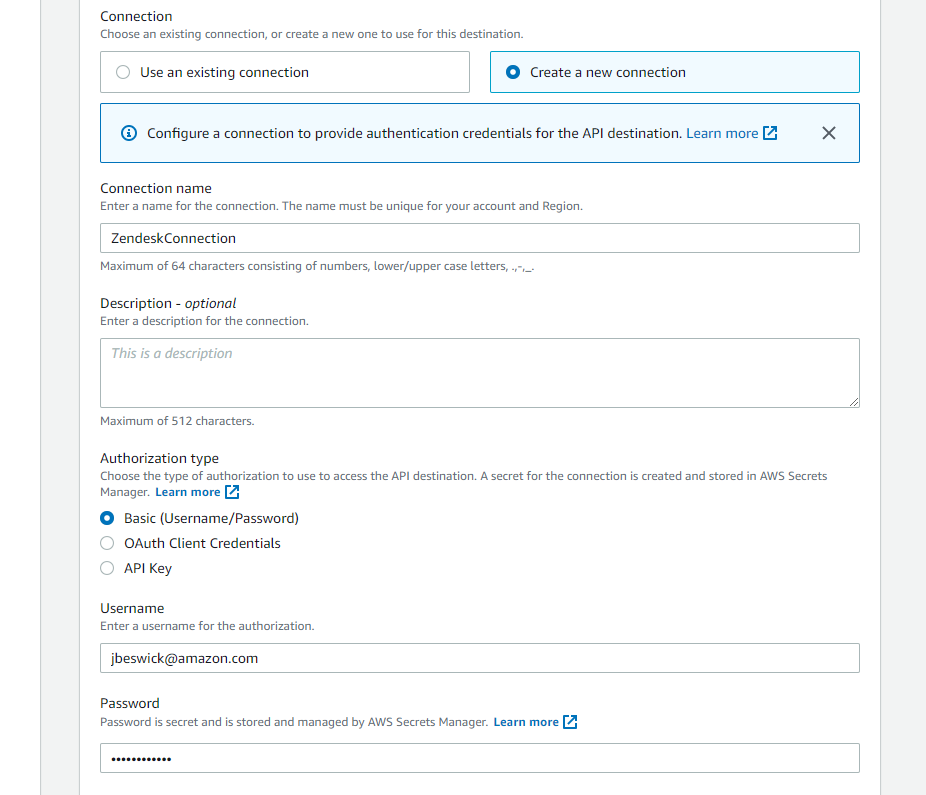

To create an API destination for Zendesk:

- Log in with an existing Zendesk account or register for a trial account.

- Navigate to the EventBridge console, select API destinations from the menu and choose Create API destination.

- On the Create API destination page:

- Enter a name for the destination (e.g. “SendToZendesk”).

- For API destination endpoint, enter https://<<your-subdomain>>.zendesk.com/api/v2/tickets.json.

- For HTTP method, select POST.

- For Invocation rate, enter 10.

- In the connection section:

- Select the Create a new connection radio button.

- For Connection name, enter ZendeskConnection.

- For Authorization type, select Basic (Username/Password).

- Enter your Zendesk username and password.

- Choose Create.

When you create a rule to route to this API destination, use the Input transformer to build the defined JSON payload, as shown in the previous DataDog example. When an event matches the rule, EventBridge calls the Zendesk Create Ticket API. The new ticket appears in the Zendesk dashboard:

For more information on the Zendesk API, visit the Zendesk Developer Portal.

Building an integration with AWS CloudFormation and AWS SAM

To support this new feature, there are two new AWS CloudFormation resources available. These can also be used in AWS Serverless Application Model (AWS SAM) templates:

- The API destination resource is represented by AWS::Events::ApiDestination.

- The connection resource is represented by AWS::Events::Connection.

The connection resource defines the connection credential and optional invocation HTTP parameters:

    Resources:
      TestConnection:
        Type: AWS::Events::Connection
        Properties:
          AuthorizationType: API_KEY
          Description: 'My connection with an API key'
          AuthParameters:
            ApiKeyAuthParameters:
              ApiKeyName: VHS
              ApiKeyValue: Testing
            InvocationHttpParameters:
              BodyParameters:
                - Key: 'my-integration-key'
                  Value: 'ABCDEFGH0123456'

    Outputs:
      TestConnectionName:
        Value: !Ref TestConnection
      TestConnectionArn:
        Value: !GetAtt TestConnection.Arn

The API destination resource provides the connection, endpoint, HTTP method, and invocation limit:

    Resources:
      TestApiDestination:
        Type: AWS::Events::ApiDestination
        Properties:
          Name: 'datadog-target'
          ConnectionArn: arn:aws:events:us-east-1:123456789012:connection/datadogConnection/2
          InvocationEndpoint: 'https://http-intake.logs.datadoghq.com/v1/input'
          HttpMethod: POST
          InvocationRateLimitPerSecond: 300

    Outputs:
      TestApiDestinationName:
        Value: !Ref TestApiDestination
      TestApiDestinationArn:
        Value: !GetAtt TestApiDestination.Arn
      TestApiDestinationSecretArn:
        Value: !GetAtt TestApiDestination.SecretArn

You can use the existing AWS::Events::Rule resource to configure an input transformer for API destination targets:

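    ApiDestinationDeliveryRule:
      Type: AWS::Events::Rule
      Properties:
        EventPattern:
          source:
            - "EventsForMyAPIdestination"
        State: "ENABLED"
        Targets:
          - Id: "ApiDestinationTarget"  # each target needs a unique Id
            # Use the destination's Arn attribute; !Ref returns its name
            Arn: !GetAtt TestApiDestination.Arn
            # An API destination target also needs a RoleArn whose role
            # allows events:InvokeApiDestination (not shown here)
            InputTransformer:
              InputPathsMap:
                detail: $.detail
              InputTemplate: >
                {
                  "message": <detail>
                }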

Conclusion

The API destinations feature of EventBridge enables developers to integrate workloads with third-party applications using REST API calls. This provides an easier way to build decoupled, extensible applications that work with applications outside of the AWS Cloud.

To use this feature, you configure a connection and an API destination. You can use API destinations in the same way as existing targets for rules, and also customize headers, query strings, and payloads in the API call.

Learn more about using API destinations with the following SaaS providers: Datadog, Freshworks, MongoDB, TriggerMesh, and Zendesk.

For more serverless learning resources, visit Serverless Land.

Build a data lake using Amazon Kinesis Data Streams for Amazon DynamoDB and Apache Hudi

Post Syndicated from Dhiraj Thakur original https://aws.amazon.com/blogs/big-data/build-a-data-lake-using-amazon-kinesis-data-streams-for-amazon-dynamodb-and-apache-hudi/

Amazon DynamoDB helps you capture high-velocity data such as clickstream data to form customized user profiles and online order transaction data to develop customer order fulfillment applications, improve customer satisfaction, and get insights into sales revenue to create a promotional offer for the customer. It’s essential to store these data points in a centralized data lake, which can be transformed, analyzed, and combined with diverse organizational datasets to derive meaningful insights and make predictions.

A popular use case in order management is receiving, tracking, and fulfilling customer orders. The order management process begins when an order is placed and ends when the customer receives their package. When storing high-velocity order transaction data in DynamoDB, you can use Amazon Kinesis streaming to extract data and store it in a centralized data lake built on Amazon Simple Storage Service (Amazon S3).

Amazon Kinesis Data Streams for DynamoDB helps you to publish item-level changes in any DynamoDB table to a Kinesis data stream of your choice. Additionally, you can take advantage of this feature for use cases that require longer data retention on the stream and fan out to multiple concurrent stream readers. You also can integrate with Amazon Kinesis Data Analytics or Amazon Kinesis Data Firehose to publish data to downstream destinations such as Amazon Elasticsearch Service (Amazon ES), Amazon Redshift, or Amazon S3.

In this post, you use Kinesis Data Streams for DynamoDB and take advantage of managed streaming delivery of DynamoDB data to a Kinesis data stream by enabling the Kinesis streaming connection from the Amazon DynamoDB console. To process DynamoDB events from Kinesis, you have multiple options: Amazon Kinesis Client Library (KCL) applications, Lambda, Kinesis Data Analytics for Apache Flink, and Kinesis Data Firehose. In this post, you use Kinesis Data Firehose to save the raw data in the S3 data lake and Apache Hudi to batch process the data.

Architecture

The following diagram illustrates the order processing system architecture.

In this architecture, users buy products in online retail shops and internally create an order transaction stored in DynamoDB. The order transaction data is ingested to the data lake and stored in the raw data layer. To achieve this, you enable Kinesis Data Streams for DynamoDB and use Kinesis Data Firehose to store data in Amazon S3. You use Lambda to transform the data from the delivery stream to remove unwanted data and finally store it in Parquet format. Next, you batch process the raw data and store it back in the Hudi dataset in the S3 data lake. You can then use Amazon Athena to do sales analysis. You build this entire data pipeline in a serverless manner.
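The Lambda transformation step can be pictured with a short Python sketch. It follows the general Kinesis Data Firehose transformation contract (each record comes in with a `recordId` and base64-encoded `data`, and goes back with a `result` of `Ok` or `Dropped`), but it is not the function deployed by the CloudFormation template: the `KEEP_FIELDS` column names and the assumption that each payload carries a `dynamodb.NewImage` with typed attributes are illustrative only.

```python
import base64
import json

# Hypothetical column names to keep; everything else counts as "unwanted data".
KEEP_FIELDS = ("order_id", "customer_id", "amount")


def flatten(image):
    """Turn DynamoDB-typed attributes such as {"S": "abc"} into plain values.

    Numbers stay as strings here, because DynamoDB JSON encodes them that way.
    """
    return {name: next(iter(typed.values())) for name, typed in image.items()}


def lambda_handler(event, context):
    output = []
    for record in event["records"]:
        payload = json.loads(base64.b64decode(record["data"]))
        image = payload.get("dynamodb", {}).get("NewImage")
        if image is None:
            # Nothing to store (for example, a delete): tell Firehose to drop it.
            output.append({"recordId": record["recordId"],
                           "result": "Dropped",
                           "data": record["data"]})
            continue
        item = flatten(image)
        row = {k: item[k] for k in KEEP_FIELDS if k in item}
        data = base64.b64encode((json.dumps(row) + "\n").encode("utf-8"))
        output.append({"recordId": record["recordId"],
                       "result": "Ok",
                       "data": data.decode("utf-8")})
    return {"records": output}


# Local smoke test with a made-up record.
sample = {"eventName": "INSERT",
          "dynamodb": {"NewImage": {"order_id": {"S": "A1"},
                                    "amount": {"N": "12.5"},
                                    "internal_note": {"S": "x"}}}}
test_event = {"records": [{"recordId": "1",
                           "data": base64.b64encode(json.dumps(sample).encode()).decode()}]}
result = lambda_handler(test_event, None)
print(base64.b64decode(result["records"][0]["data"]).decode().strip())
```

The sketch emits newline-delimited JSON; converting the output to Parquet is a separate step that this example does not cover.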

Prerequisites

Complete the following steps to create AWS resources to build a data pipeline as mentioned in the architecture. For this post, we use the AWS Region us-west-1.

- On the Amazon Elastic Compute Cloud (Amazon EC2) console, create a key pair.

- Download the data files, Amazon EMR cluster, and Athena DDL code from GitHub.

- Deploy the necessary Amazon resources using the provided AWS CloudFormation template.

- For Stack name, enter a stack name of your choice.

- For Keypair name, choose a key pair.

A key pair is required to connect to the EMR cluster nodes. For more information, see Use an Amazon EC2 Key Pair for SSH Credentials.

- Keep the remaining default parameters.

- Acknowledge that AWS CloudFormation might create AWS Identity and Access Management (IAM) resources.

For more information about IAM, see Resources to learn more about IAM.

- Choose Create stack.

You can check the Resources tab for the stack after the stack is created.

The following table summarizes the resources that you created, which you use to build the data pipeline and analysis.

| Logical ID | Physical ID | Type |
| --- | --- | --- |
| DeliveryPolicy | kines-Deli-* | AWS::IAM::Policy |
| DeliveryRole | kinesis-hudi-DeliveryRole-* | AWS::IAM::Role |
| Deliverystream | kinesis-hudi-Deliverystream-* | AWS::KinesisFirehose::DeliveryStream |
| DynamoDBTable | order_transaction_* | AWS::DynamoDB::Table |
| EMRClusterServiceRole | kinesis-hudi-EMRClusterServiceRole-* | AWS::IAM::Role |
| EmrInstanceProfile | kinesis-hudi-EmrInstanceProfile-* | AWS::IAM::InstanceProfile |
| EmrInstanceRole | kinesis-hudi-EmrInstanceRole-* | AWS::IAM::Role |
| GlueDatabase | gluedatabase-* | AWS::Glue::Database |
| GlueTable | gluetable-* | AWS::Glue::Table |
| InputKinesisStream | order-data-stream-* | AWS::Kinesis::Stream |
| InternetGateway | igw-* | AWS::EC2::InternetGateway |
| InternetGatewayAttachment | kines-Inter-* | AWS::EC2::VPCGatewayAttachment |
| MyEmrCluster | | AWS::EMR::Cluster |
| ProcessLambdaExecutionRole | kinesis-hudi-ProcessLambdaExecutionRole-* | AWS::IAM::Role |
| ProcessLambdaFunction | kinesis-hudi-ProcessLambdaFunction-* | AWS::Lambda::Function |
| ProcessedS3Bucket | kinesis-hudi-processeds3bucket-* | AWS::S3::Bucket |
| PublicRouteTable | | AWS::EC2::RouteTable |
| PublicSubnet1 | | AWS::EC2::Subnet |
| PublicSubnet1RouteTableAssociation | | AWS::EC2::SubnetRouteTableAssociation |
| PublicSubnet2 | | AWS::EC2::Subnet |
| PublicSubnet2RouteTableAssociation | | AWS::EC2::SubnetRouteTableAssociation |
| RawS3Bucket | kinesis-hudi-raws3bucket-* | AWS::S3::Bucket |
| S3Bucket | kinesis-hudi-s3bucket-* | AWS::S3::Bucket |
| SourceS3Bucket | kinesis-hudi-sources3bucket-* | AWS::S3::Bucket |
| VPC | vpc-* | AWS::EC2::VPC |

Enable Kinesis streaming for DynamoDB

AWS recently launched Kinesis Data Streams for DynamoDB so you can send data from DynamoDB to Kinesis data streams. You can use the AWS Command Line Interface (AWS CLI) or the AWS Management Console to enable this feature.

To enable this feature from the console, complete the following steps:

- On the DynamoDB console, choose the table you created in the CloudFormation stack earlier (it begins with the prefix order_transaction_).

- On the Overview tab, choose Manage streaming to Kinesis.

- Choose your input stream (it starts with order-data-stream-).

- Choose Enable.

- Choose Close.

- Make sure that stream enabled is set to Yes.
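The same steps can also be scripted. The following is a minimal Python sketch, assuming you pass in a boto3 DynamoDB client (for example, boto3.client("dynamodb")), the full table name, and the ARN of the input stream. The function name and the polling parameters are illustrative and not part of the original solution.

```python
import time


def enable_kinesis_streaming(dynamodb, table_name, stream_arn,
                             poll_seconds=5, max_polls=24):
    """Enable Kinesis streaming on a DynamoDB table and wait until it is ACTIVE.

    `dynamodb` is a boto3 DynamoDB client. Returns True once the destination
    reports ACTIVE, or False if it is still not active after `max_polls` checks.
    """
    dynamodb.enable_kinesis_streaming_destination(
        TableName=table_name, StreamArn=stream_arn
    )
    for _ in range(max_polls):
        response = dynamodb.describe_kinesis_streaming_destination(
            TableName=table_name
        )
        for dest in response.get("KinesisDataStreamDestinations", []):
            if (dest.get("StreamArn") == stream_arn
                    and dest.get("DestinationStatus") == "ACTIVE"):
                return True
        time.sleep(poll_seconds)
    return False
```

The client is passed in as a parameter so that the function can be exercised with a stub before you run it against a real table.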

Populate the sales order transaction dataset

To replicate a real-life use case, you need an online retail application. For this post, you upload raw data files to the S3 bucket and use a Lambda function to load the data into DynamoDB. You can download the order data CSV files from the AWS Sample GitHub repository. Complete the following steps to load the data into DynamoDB:

- On the Amazon S3 console, choose the bucket <stack-name>-sources3bucket-*.

- Choose Upload.

- Choose Add files.

- Choose the order_data_09_02_2020.csv and order_data_10_02_2020.csv files.

- Choose Upload.

- On the Lambda console, choose the function <stack-name>-CsvToDDBLambdaFunction-*.

- Choose Test.

- For Event template, enter an event name.

- Choose Create.

- Choose Test.

This runs the Lambda function and loads the CSV file order_data_09_02_2020.csv to the DynamoDB table.

- Wait until the message appears that the function ran successfully.

You can now view the data on the DynamoDB console, in the details page for your table.
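The loading step that the Lambda function performs can be sketched roughly as follows. This is not the code of the function deployed by the stack. It is a minimal illustration that assumes the CSV file has a header row and that you pass in a boto3 DynamoDB Table resource. The function names are hypothetical, and every value is stored as a string for simplicity.

```python
import csv
import io


def rows_from_csv(csv_text):
    """Parse CSV text with a header row into a list of dicts (one per order)."""
    return [dict(row) for row in csv.DictReader(io.StringIO(csv_text))]


def load_orders(table, csv_text):
    """Write every CSV row to DynamoDB and return the number of rows written.

    `table` is a boto3 DynamoDB Table resource, for example
    boto3.resource("dynamodb").Table(<your table name>).
    Values stay as strings here; a real loader would convert types.
    """
    rows = rows_from_csv(csv_text)
    # batch_writer groups the puts into batched write requests.
    with table.batch_writer() as batch:
        for row in rows:
            batch.put_item(Item=row)
    return len(rows)
```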

Because you enabled the Kinesis data stream in the DynamoDB table, it starts streaming the data to Amazon S3. You can check the data by viewing the bucket on the Amazon S3 console. The following screenshot shows that a Parquet file is under the prefix in the bucket.

Use Apache Hudi with Amazon EMR

Now it’s time to process the streaming data using Hudi.

You can use the key pair you chose in the security options to SSH into the leader node.

- Use the following bash command to start the Spark shell to use it with Apache Hudi:

The Amazon EMR instance looks like the following screenshot.

- You can use the following Scala code to import the order transaction data from the S3 data lake to a Hudi dataset using the copy-on-write storage type. Change inputDataPath to match the file path in <stack-name>-raws3bucket-* in your environment, and replace the bucket name in hudiTablePath with <stack-name>-processeds3bucket-*.

For more information about DataSourceWriteOptions, see Work with a Hudi Dataset.

- In the Spark shell, you can now count the total number of records in the Apache Hudi dataset:

You can check the processed Apache Hudi dataset in the S3 data lake via the Amazon S3 console. The following screenshot shows the prefix order_hudi_cow is in <stack-name>-processeds3bucket-*.

When navigating into the order_hudi_cow prefix, you can find a list of Hudi datasets that are partitioned using the transaction_date key—one for each date in our dataset.
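The post performs the import in Scala. As a rough equivalent, the following PySpark-style sketch shows how the write options for a copy-on-write Hudi dataset could be assembled. The option keys are standard Hudi DataSource write configurations, although older Hudi releases name the table type key differently. The helper names are illustrative, and the field names you pass in depend on your data. The sketch assumes order_id as the record key and transaction_date as the partition field, and the precombine field shown in the usage note is a placeholder.

```python
def hudi_write_options(table_name, record_key, partition_field,
                       precombine_field, operation="bulk_insert"):
    """Build Hudi DataSource write options for a copy-on-write table."""
    return {
        "hoodie.table.name": table_name,
        "hoodie.datasource.write.table.type": "COPY_ON_WRITE",
        "hoodie.datasource.write.operation": operation,
        "hoodie.datasource.write.recordkey.field": record_key,
        "hoodie.datasource.write.partitionpath.field": partition_field,
        "hoodie.datasource.write.precombine.field": precombine_field,
    }


def write_hudi(df, hudi_table_path, options, mode="overwrite"):
    """Write a Spark DataFrame as a Hudi dataset.

    Requires the Hudi bundle on the Spark classpath. Use mode="overwrite"
    for the first load and mode="append" for later upserts.
    """
    (df.write.format("org.apache.hudi")
        .options(**options)
        .mode(mode)
        .save(hudi_table_path))
```

For example, hudi_write_options("order_hudi_cow", "order_id", "transaction_date", "time_stamp") builds the options for the initial load, where time_stamp is a hypothetical column used to pick the latest version of a record.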

Let’s analyze the data stored in Amazon S3 using Athena.

Analyze the data with Athena

To analyze your data, complete the following steps:

- On the Athena console, create the database order_db using the following command:

You use this database to create all the Athena tables.

- Create your table using the following command (replace the S3 bucket name with <stack-name>-processeds3bucket-* created in your environment):

- Add partitions by running the following query on the Athena console:

- Check the total number of records in the Hudi dataset with the following query:

It should return a single row with a count of 1,000.

Now check the record that you want to update.

- Run the following query on the Athena console:

The output should look like the following screenshot. Note down the value of product and amount.
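If you prefer to run these checks from a script instead of the console, the following minimal Python sketch submits a query to Athena and waits for the result. It assumes a boto3 Athena client (boto3.client("athena")) and an S3 location for query results that you own. The function name is illustrative.

```python
import time


def run_athena_query(athena, sql, output_location, database=None, poll_seconds=1):
    """Run one Athena query and return the first page of result rows.

    Each row is a list of strings. For SELECT statements, the first row
    contains the column headers.
    """
    params = {
        "QueryString": sql,
        "ResultConfiguration": {"OutputLocation": output_location},
    }
    if database:
        params["QueryExecutionContext"] = {"Database": database}
    query_id = athena.start_query_execution(**params)["QueryExecutionId"]

    # Poll until the query reaches a terminal state.
    while True:
        execution = athena.get_query_execution(QueryExecutionId=query_id)
        state = execution["QueryExecution"]["Status"]["State"]
        if state in ("SUCCEEDED", "FAILED", "CANCELLED"):
            break
        time.sleep(poll_seconds)
    if state != "SUCCEEDED":
        raise RuntimeError(f"Athena query {query_id} ended in state {state}")

    result = athena.get_query_results(QueryExecutionId=query_id)
    return [[col.get("VarCharValue") for col in row["Data"]]
            for row in result["ResultSet"]["Rows"]]
```

You could call it with a count query against your Hudi table and the order_db database, then compare the returned count with the 1,000 records expected above.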

Analyze the change data capture

Now let’s test the change data capture (CDC) in streaming. Let’s take an example where the customer changed an existing order. We load the order_data_10_02_2020.csv file, where order_id 3801 has a different product and amount.

To test the CDC feature, complete the following steps:

- On the Lambda console, choose the function <stack-name>-CsvToDDBLambdaFunction-*.

- In the Environment variables section, choose Edit.

- For key, enter order_data_10_02_2020.csv.

- Choose Save.

You can see another prefix has been created in <stack-name>-raws3bucket-*.

- In Amazon EMR, run the following code in the Scala shell prompt to update the data (change inputDataPath to the file path in <stack-name>-raws3bucket-* and hudiTablePath to <stack-name>-processeds3bucket-*):

- Run the following query on the Athena console to confirm that the total number of records is still 1,000:

- Run the following query on the Athena console to test for the update:

The following screenshot shows that the product and amount values for the same order are updated.
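The record count stays the same because the update is an upsert keyed on the order ID: a record whose key already exists replaces the previous version instead of being added as a new row. The following plain Python sketch illustrates that behavior only. It is not how Hudi implements upserts internally, and the sample values in the usage note are made up.

```python
def upsert(existing, incoming, key="order_id"):
    """Merge `incoming` records into `existing`, matching on `key`.

    A record whose key already exists replaces the old version;
    a record with a new key is appended. The inputs are not modified.
    """
    merged = {record[key]: record for record in existing}
    for record in incoming:
        merged[record[key]] = record
    return list(merged.values())
```

For example, upserting a changed version of order 3801 into a list that already contains order 3801 returns a list of the same length, with the new product and amount for that order.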

In a production workload, you can trigger the updates on a schedule or by S3 modification events. A fully automated data lake makes sure your business analysts are always viewing the latest available data.

Clean up the resources

To avoid incurring future charges, follow these steps to remove the example resources:

- If you created the EMR cluster with the CloudFormation template, delete the resources you created earlier in the prerequisites section by deleting the stack instances from your stack set.

- Stop the cluster via the Amazon EMR console, if you launched the EMR cluster manually.

- Empty all the relevant buckets via the Amazon S3 console.
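Emptying a bucket can also be scripted. The following minimal Python sketch assumes a boto3 S3 client and deletes only the current objects. It does not remove object versions in versioned buckets, and the function name is illustrative. Deletion is permanent, so check the bucket name before you run it.

```python
def empty_bucket(s3, bucket):
    """Delete every current object in `bucket` and return how many were deleted.

    `s3` is a boto3 S3 client. Versioned buckets also need their object
    versions removed, which this sketch does not handle.
    """
    deleted = 0
    kwargs = {"Bucket": bucket}
    while True:
        # Each page holds at most 1,000 keys, which is also the
        # maximum that a single delete_objects call accepts.
        page = s3.list_objects_v2(**kwargs)
        keys = [{"Key": obj["Key"]} for obj in page.get("Contents", [])]
        if keys:
            s3.delete_objects(Bucket=bucket, Delete={"Objects": keys, "Quiet": True})
            deleted += len(keys)
        if not page.get("IsTruncated"):
            return deleted
        kwargs["ContinuationToken"] = page["NextContinuationToken"]
```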

Conclusion

You can build an end-to-end serverless data lake to get real-time insights from DynamoDB by using Kinesis Data Streams—all without writing any complex code. It allows your team to focus on solving business problems by getting useful insights immediately. Application developers have various use cases for moving data quickly through an analytics pipeline, and you can make this happen by enabling Kinesis Data Streams for DynamoDB.

If this post helps you or inspires you to solve a problem, we would love to hear about it! The code for this solution is available in the GitHub repository for you to use and extend. Contributions are always welcome!

About the Authors

Dhiraj Thakur is a Solutions Architect with Amazon Web Services. He works with AWS customers and partners to guide enterprise cloud adoption, migration, and strategy. He is passionate about technology and enjoys building and experimenting in the analytics and AI/ML space.

Saurabh Shrivastava is a solutions architect leader and analytics/ML specialist working with global systems integrators. He works with AWS Partners and customers to provide them with architectural guidance for building scalable architecture in hybrid and AWS environments. He enjoys spending time with his family outdoors and traveling to new destinations to discover new cultures.

Dylan Qu is an AWS solutions architect responsible for providing architectural guidance across the full AWS stack with a focus on data analytics, AI/ML, and DevOps.

Four Microsoft Exchange Zero-Days Exploited by China

Post Syndicated from Bruce Schneier original https://www.schneier.com/blog/archives/2021/03/four-microsoft-exchange-zero-days-exploited-by-china.html

Microsoft has issued an emergency Microsoft Exchange patch to fix four zero-day vulnerabilities currently being exploited by China.

How to replicate secrets in AWS Secrets Manager to multiple Regions

Post Syndicated from Fatima Ahmed original https://aws.amazon.com/blogs/security/how-to-replicate-secrets-aws-secrets-manager-multiple-regions/

On March 3, 2021, we launched a new feature for AWS Secrets Manager that makes it possible for you to replicate secrets across multiple AWS Regions. You can give your multi-Region applications access to replicated secrets in the required Regions and rely on Secrets Manager to keep the replicas in sync with the primary secret. In scenarios such as disaster recovery, you can read replicated secrets from your recovery Regions, even if your primary Region is unavailable. In this blog post, I show you how to automatically replicate a secret and access it from the recovery Region to support a disaster recovery plan.

With Secrets Manager, you can store, retrieve, manage, and rotate your secrets, including database credentials, API keys, and other secrets. When you create a secret using Secrets Manager, it’s created and managed in a Region of your choosing. Although scoping secrets to a Region is a security best practice, there are scenarios such as disaster recovery and cross-Regional redundancy that require replication of secrets across Regions. Secrets Manager now makes it possible for you to easily replicate your secrets to one or more Regions to support these scenarios.

With this new feature, you can create Regional read replicas for your secrets. When you create a new secret or edit an existing secret, you can specify the Regions where your secrets need to be replicated. Secrets Manager will securely create the read replicas for each secret and its associated metadata, eliminating the need to maintain a complex solution for this functionality. Any update made to the primary secret, such as a secret value updated through automatic rotation, will be automatically propagated by Secrets Manager to the replica secrets, making it easier to manage the life cycle of multi-Region secrets.

Note: Each replica secret is billed as a separate secret. For more details on pricing, see the AWS Secrets Manager pricing page.

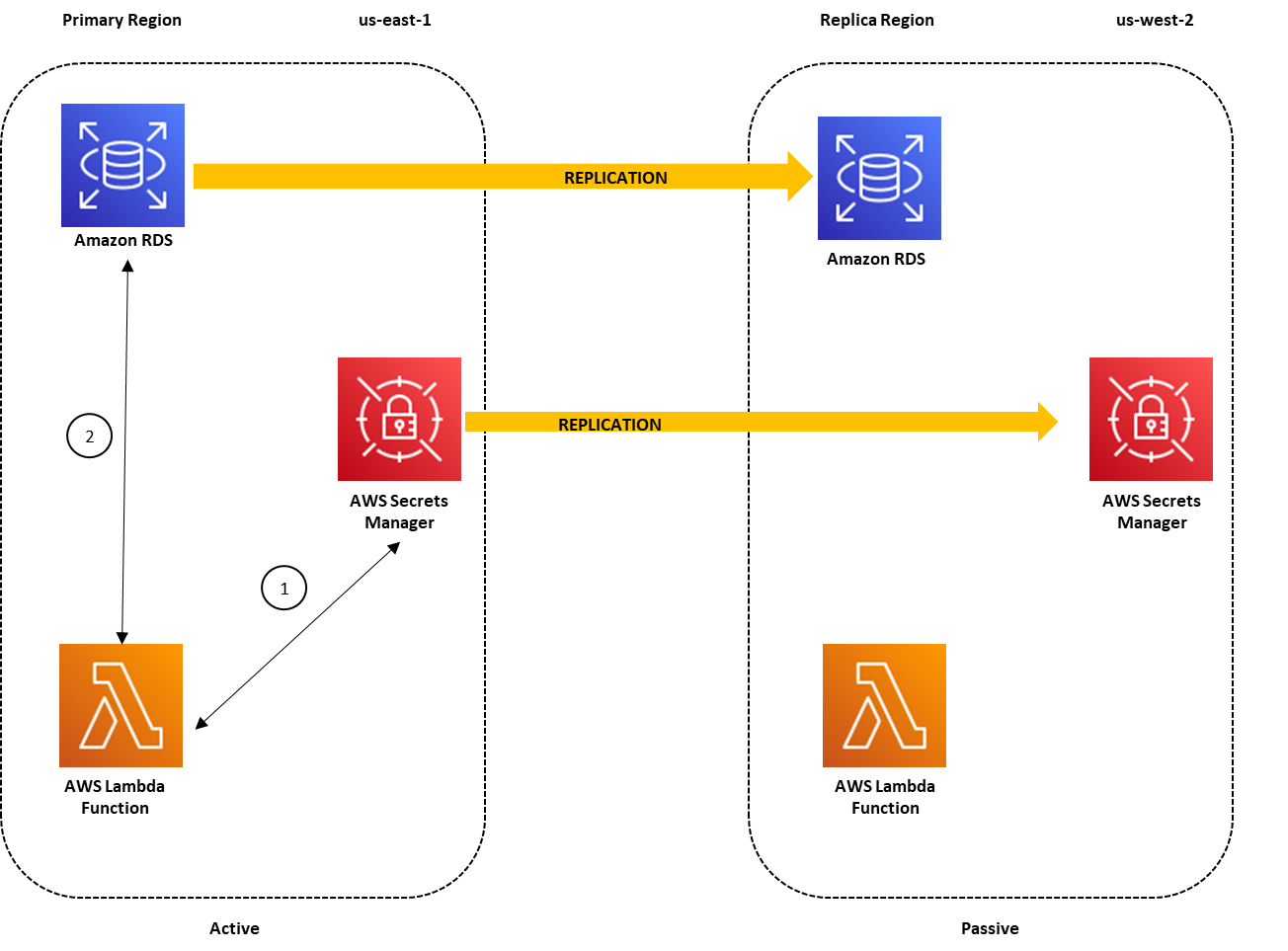

Architecture overview

Suppose that your organization has a requirement to set up a disaster recovery plan. In this example, us-east-1 is the designated primary Region, where you have an application running on a simple AWS Lambda function (for the example in this blog post, I’m using Python 3). You also have an Amazon Relational Database Service (Amazon RDS) – MySQL DB instance running in the us-east-1 Region, and you’re using Secrets Manager to store the database credentials as a secret. Your application retrieves the secret from Secrets Manager to access the database. As part of the disaster recovery strategy, you set up us-west-2 as the designated recovery Region, where you’ve replicated your application, the DB instance, and the database secret.

To elaborate, the solution architecture consists of:

- A primary Region for creating the secret, in this case us-east-1 (N. Virginia).

- A replica Region for replicating the secret, in this case us-west-2 (Oregon).

- An Amazon RDS – MySQL DB instance that is running in the primary Region and configured for replication to the replica Region. To set up read replicas or cross-Region replicas for Amazon RDS, see Working with read replicas.

- A secret created in Secrets Manager and configured for replication for the replica Region.

- AWS Lambda functions (running on Python 3) deployed in the primary and replica Regions acting as clients to the MySQL DBs.

This architecture is illustrated in Figure 1.

Figure 1: Architecture overview for a multi-Region secret replication with the primary Region active

In the primary Region, us-east-1, the Lambda function uses the credentials stored in the secret to access the database, as indicated by the following steps in Figure 1:

- The Lambda function sends a request to Secrets Manager to retrieve the secret value by using the GetSecretValue API call. Secrets Manager retrieves the secret value for the Lambda function.

- The Lambda function uses the secret value to connect to the database in order to read/write data.

The replicated secret in us-west-2 points to the primary DB instance in us-east-1. This is because when Secrets Manager replicates the secret, it replicates the secret value and all the associated metadata, such as the database endpoint. The database endpoint details are stored within the secret because Secrets Manager uses this information to connect to the database and rotate the secret if it is configured for automatic rotation. The Lambda function can also use the database endpoint details in the secret to connect to the database.

To simplify database failover during disaster recovery, as I’ll cover later in the post, you can configure an Amazon Route 53 CNAME record for the database endpoint in the primary Region. The database host associated with the secret is configured with the database CNAME record. When the primary Region is operating normally, the CNAME record points to the database endpoint in the primary Region. The requests to the database CNAME are routed to the DB instance in the primary Region, as shown in Figure 1.

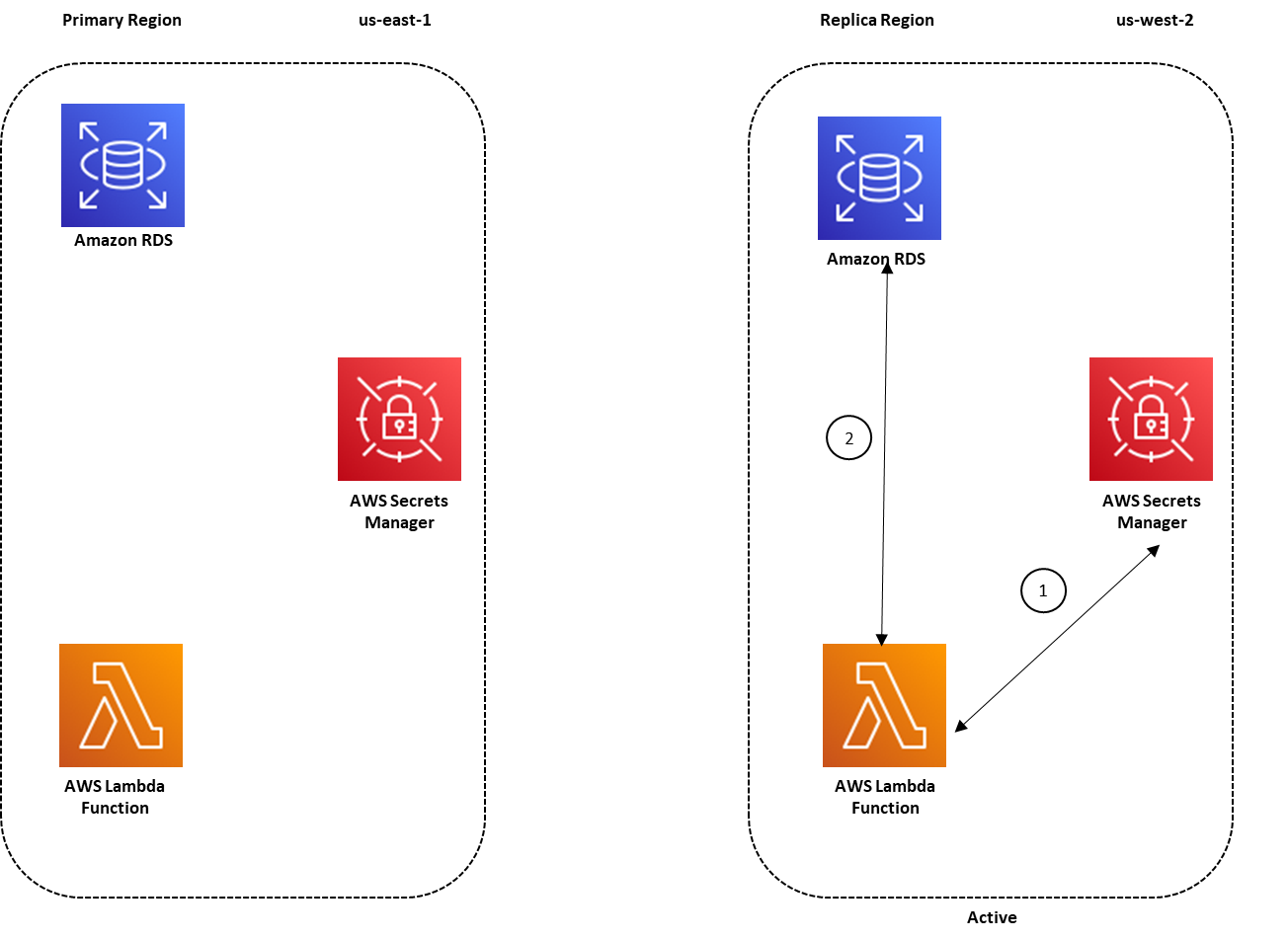

During disaster recovery, you can fail over to the replica Region, us-west-2, so that your application running in this Region can access the Amazon RDS read replica in us-west-2 by using the secret stored in the same Region. As part of your failover script, the database CNAME record should also be updated to point to the database endpoint in us-west-2. Because the database CNAME is used to point to the database endpoint within the secret, your application in us-west-2 can now use the replicated secret to access the database read replica in this Region. Figure 2 illustrates this disaster recovery scenario.

Figure 2: Architecture overview for a multi-Region secret replication with the replica Region active
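The CNAME update in a failover script could look like the following minimal Python sketch. It assumes a boto3 Route 53 client (boto3.client("route53")), the ID of the hosted zone that holds the record, and the endpoint of the DB instance in the replica Region. The function names and the TTL value are illustrative.

```python
def cname_upsert_batch(record_name, target, ttl=60):
    """Build a Route 53 change batch that points a CNAME record at `target`."""
    return {
        "Comment": "Point the database CNAME at the active Region",
        "Changes": [{
            "Action": "UPSERT",
            "ResourceRecordSet": {
                "Name": record_name,
                "Type": "CNAME",
                "TTL": ttl,
                "ResourceRecords": [{"Value": target}],
            },
        }],
    }


def fail_over_database_cname(route53, hosted_zone_id, record_name, replica_endpoint):
    """Repoint the database CNAME to the DB endpoint in the replica Region."""
    return route53.change_resource_record_sets(
        HostedZoneId=hosted_zone_id,
        ChangeBatch=cname_upsert_batch(record_name, replica_endpoint),
    )
```

A short TTL keeps the delay before clients pick up the new endpoint small, at the cost of more DNS lookups during normal operation.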

Prerequisites

The procedure described in this blog post requires that you complete the following steps before starting the procedure:

- Configure an Amazon RDS DB instance in the primary Region, with replication configured in the replica Region.

- Configure a Route 53 CNAME record for the database endpoint in the primary Region.

- Configure the Lambda function to connect with the Amazon RDS database and Secrets Manager by following the procedure in this blog post.

- Sign in to the AWS Management Console using a role that has SecretsManagerReadWrite permissions in the primary and replica Regions.

Enable replication for secrets stored in Secrets Manager

In this section, I walk you through the process of enabling replication in Secrets Manager for:

- A new secret that is created for your Amazon RDS database credentials

- An existing secret that is not configured for replication

For the first scenario, I show you the steps to create a secret in Secrets Manager in the primary Region (us-east-1) and enable replication for the replica Region (us-west-2).

To create a secret with replication enabled

- In the AWS Management Console, navigate to the Secrets Manager console in the primary Region (N. Virginia).

- Choose Store a new secret.

- On the Store a new secret screen, enter the Amazon RDS database credentials that will be used to connect with the Amazon RDS DB instance. Select the encryption key and the Amazon RDS DB instance, and then choose Next.

- Enter the secret name of your choice, and then enter a description. You can also optionally add tags and resource permissions to the secret.

- Under Replicate Secret – optional, choose Replicate secret to other regions.

Figure 3: Replicate a secret to other Regions

- For AWS Region, choose the replica Region, US West (Oregon) us-west-2. For Encryption Key, choose Default to store your secret in the replica Region. Then choose Next.

Figure 4: Configure secret replication

- In the Configure Rotation section, you can choose whether to enable rotation. For this example, I chose not to enable rotation, so I selected Disable automatic rotation. However, if you want to enable rotation, you can do so by following the steps in Enabling rotation for an Amazon RDS database secret in the Secrets Manager User Guide. When you enable rotation in the primary Region, any changes to the secret from the rotation process are also replicated to the replica Region. After you’ve configured the rotation settings, choose Next.

- On the Review screen, you can see the summary of the secret configuration, including the secret replication configuration.

Figure 5: Review the secret before storing

- At the bottom of the screen, choose Store.

- At the top of the next screen, you’ll see two banners that provide status on:

- The creation of the secret in the primary Region

- The replication of the secret in the replica Region

After the creation and replication of the secret is successful, the banners will provide you with confirmation details.

At this point, you’ve created a secret in the primary Region (us-east-1) and enabled replication in a replica Region (us-west-2). You can now use this secret in the replica Region as well as the primary Region.
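The console procedure above has an SDK counterpart. The following minimal Python sketch assumes a boto3 Secrets Manager client created for the primary Region (for example, boto3.client("secretsmanager", region_name="us-east-1")). The function name and the shape of the credentials dictionary are illustrative.

```python
import json


def create_replicated_secret(secrets_manager, name, credentials, replica_regions):
    """Create a secret in the client's Region and replicate it to other Regions.

    `credentials` is a dict that is stored as a JSON string. Each replica uses
    the default encryption key unless a KmsKeyId is added to its entry.
    """
    return secrets_manager.create_secret(
        Name=name,
        SecretString=json.dumps(credentials),
        AddReplicaRegions=[{"Region": region} for region in replica_regions],
    )
```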

Now suppose that you have a secret created in the primary Region (us-east-1) that hasn’t been configured for replication. You can also configure replication for this existing secret by using the following procedure.

To enable multi-Region replication for existing secrets

- In the Secrets Manager console, choose the secret name. At the top of the screen, choose Replicate secret to other regions.

Figure 6: Enable replication for existing secrets

This opens a pop-up screen where you can configure the replica Region and the encryption key for encrypting the secret in the replica Region.

- Choose the AWS Region and encryption key for the replica Region, and then choose Complete adding region(s).

Figure 7: Configure replication for existing secrets

This starts the process of replicating the secret from the primary Region to the replica Region.

- Scroll down to the Replicate Secret section. You can see that the replication to the us-west-2 Region is in progress.

Figure 8: Review progress for secret replication

After the replication is successful, you can look under Replication status to review the replication details that you’ve configured for your secret. You can also choose to replicate your secret to more Regions by choosing Add more regions.

Figure 9: Successful secret replication to a replica Region
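For an existing secret, the same result can be reached with the SDK. The following minimal Python sketch assumes a boto3 Secrets Manager client for the primary Region. It adds one replica Region and then polls the secret's replication status. The function name and the polling parameters are illustrative.

```python
import time


def replicate_existing_secret(secrets_manager, secret_id, replica_region,
                              poll_seconds=2, max_polls=30):
    """Add a replica Region to an existing secret and wait for it to sync.

    Returns the last replication status seen for `replica_region`
    (for example "InSync", "InProgress", or "Failed"), or None if the
    Region never appeared in the replication status list.
    """
    secrets_manager.replicate_secret_to_regions(
        SecretId=secret_id,
        AddReplicaRegions=[{"Region": replica_region}],
    )
    status = None
    for _ in range(max_polls):
        description = secrets_manager.describe_secret(SecretId=secret_id)
        for replica in description.get("ReplicationStatus", []):
            if replica.get("Region") == replica_region:
                status = replica.get("Status")
        if status in ("InSync", "Failed"):
            break
        time.sleep(poll_seconds)
    return status
```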

Update the secret with the CNAME record

Next, you can update the host value in your secret to the CNAME record of the DB instance endpoint. This will make it possible for you to use the secret in the replica Region without making changes to the replica secret. In the event of a failover to the replica Region, you can simply update the CNAME record to the DB instance endpoint in the replica Region as a part of your failover script.

To update the secret with the CNAME record

- Navigate to the Secrets Manager console, and choose the secret that you have set up for replication.

- In the Secret value section, choose Retrieve secret value, and then choose Edit.

- Update the secret value for the host with the CNAME record, and then choose Save.

Figure 10: Edit the secret value

- After you choose Save, you’ll see a banner at the top of the screen with a message that indicates that the secret was successfully edited. Because the secret is set up for replication, you can also review the status of the synchronization of your secret to the replica Region after you updated the secret. To do so, scroll down to the Replicate Secret section and look under Region Replication Status.

Figure 11: Successful secret replication for a modified secret
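The host update can also be done programmatically against the primary secret. The following minimal Python sketch assumes that the secret value is a JSON document with a host field, as is the case for Amazon RDS secrets, and that you pass in a boto3 Secrets Manager client for the primary Region. The function names are illustrative.

```python
import json


def with_host(secret_string, cname):
    """Return the secret JSON with its "host" field replaced by `cname`."""
    secret = json.loads(secret_string)
    secret["host"] = cname
    return json.dumps(secret)


def point_secret_at_cname(secrets_manager, secret_id, cname):
    """Read the primary secret, swap in the CNAME as host, and store the new value."""
    current = secrets_manager.get_secret_value(SecretId=secret_id)["SecretString"]
    return secrets_manager.put_secret_value(
        SecretId=secret_id, SecretString=with_host(current, cname)
    )
```

Because the change is made to the primary secret, Secrets Manager propagates the new value to the replica Region in the same way as the console edit.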

Access replicated secrets from the replica Region

Now that you’ve configured the secret for replication in the primary Region, you can access the secret from the replica Region. Here I demonstrate how to access a replicated secret from a simple Lambda function that is deployed in the replica Region (us-west-2).

To access the secret from the replica Region

- From the AWS Management Console, navigate to the Secrets Manager console in the replica Region (Oregon) and view the secret that you configured for replication in the primary Region (N. Virginia).

Figure 12: View secrets that are configured for replication in the replica Region

- Choose the secret name and review the details that were replicated from the primary Region. A secret that is configured for replication will display a banner at the top of the screen stating the replication details.

Figure 13: The replication status banner

- Under Secret Details, you can see the secret’s ARN. You can use the secret’s ARN to retrieve the secret value from the Lambda function or application that is deployed in your replica Region (Oregon). Make a note of the ARN.

Figure 14: View secret details

During a disaster recovery scenario when the primary Region isn’t available, you can update the CNAME record to point to the DB instance endpoint in us-west-2 as part of your failover script. For this example, my application that is deployed in the replica Region is configured to use the replicated secret’s ARN.

Let’s suppose your sample Lambda function defines the secret name and the Region in the environment variables. The REGION_NAME environment variable contains the name of the replica Region; in this example, us-west-2. The SECRET_NAME environment variable is the ARN of your replicated secret in the replica Region, which you noted earlier.

Figure 15: Environment variables for the Lambda function

In the replica Region, you can now refer to the secret’s ARN and Region in your Lambda function code to retrieve the secret value for connecting to the database. The following sample Lambda function code snippet uses the secret_name and region_name variables to retrieve the secret’s ARN and the replica Region values stored in the environment variables.

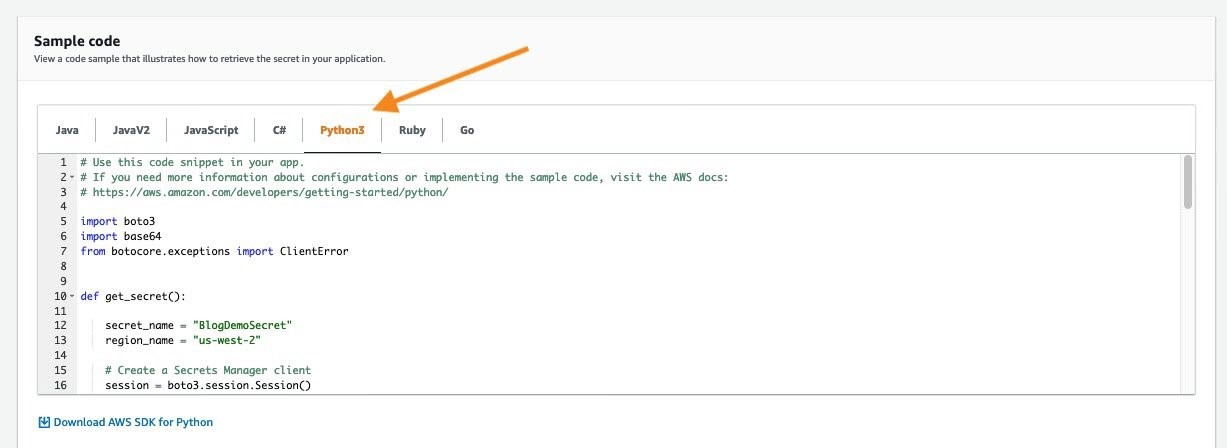
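In text form, such a function could look like the following minimal Python 3 sketch. It is not the exact code from the post. It assumes the SECRET_NAME and REGION_NAME environment variables described above and a secret value stored as JSON, and the helper name get_db_credentials is illustrative.

```python
import json
import os


def get_db_credentials(secrets_manager, secret_id):
    """Fetch a secret with GetSecretValue and parse its JSON value into a dict."""
    response = secrets_manager.get_secret_value(SecretId=secret_id)
    return json.loads(response["SecretString"])


def lambda_handler(event, context):
    import boto3  # imported here so the helper above has no SDK dependency

    secret_name = os.environ["SECRET_NAME"]  # ARN of the replicated secret
    region_name = os.environ["REGION_NAME"]  # replica Region, for example us-west-2
    client = boto3.client("secretsmanager", region_name=region_name)
    credentials = get_db_credentials(client, secret_name)
    # Use the host, username, and password from `credentials` to open the
    # database connection here. Avoid logging or returning the password.
    return {"host": credentials["host"]}
```

The handler returns only the host so that no credential material ends up in the function's response or logs.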

Alternatively, you can simply use the Python 3 sample code for the replicated secret to retrieve the secret value from the Lambda function in the replica Region. You can review the provided sample code by navigating to the secret details in the console, as shown in Figure 16.

Figure 16: Python 3 sample code for the replicated secret

Summary

When you plan for disaster recovery, you can configure replication of your secrets in Secrets Manager to provide redundancy for your secrets. This feature reduces the overhead of deploying and maintaining additional configuration for secret replication and retrieval across AWS Regions. In this post, I showed you how to create a secret and configure it for multi-Region replication. I also demonstrated how you can configure replication for existing secrets across multiple Regions.

I showed you how to use secrets from the replica Region and configure a sample Lambda function to retrieve a secret value. When replication is configured for secrets, you can use this technique to retrieve the secrets in the replica Region in a similar way as you would in the primary Region.

You can start using this feature through the AWS Secrets Manager console, AWS Command Line Interface (AWS CLI), AWS SDK, or AWS CloudFormation. To learn more about this feature, see the AWS Secrets Manager documentation. If you have feedback about this blog post, submit comments in the Comments section below. If you have questions about this blog post, start a new thread on the AWS Secrets Manager forum.

Want more AWS Security how-to content, news, and feature announcements? Follow us on Twitter.

[$] BPF meets io_uring

Post Syndicated from original https://lwn.net/Articles/847951/rss

Over the last couple of years, a lot of development effort has gone into two kernel subsystems: BPF and io_uring. The BPF virtual machine allows programs from user space to be safely run within the context of the kernel, while io_uring addresses the longstanding problem of running system calls asynchronously. As the two subsystems expand, it was inevitable that the two would eventually meet; the first encounter happened in mid-February with this patch set from Pavel Begunkov adding the ability to run BPF programs from within io_uring.

A warning about 5.12-rc1

Post Syndicated from original https://lwn.net/Articles/848265/rss

Linus Torvalds has sent out a note telling people not to install the recent 5.12-rc1 development kernel; this is especially true for anybody running with swap files. “But I want everybody to be aware of because _if_ it bites you, it bites you hard, and you can end up with a filesystem that is essentially overwritten by random swap data. This is what we in the industry call ‘double ungood’.” Additionally, he is asking maintainers to not start branches from 5.12-rc1 to avoid future situations where people land in the buggy code while bisecting problems.

Amazon EMR 2020 year in review

Post Syndicated from Abhishek Sinha original https://aws.amazon.com/blogs/big-data/amazon-emr-2020-year-in-review/

Tens of thousands of customers use Amazon EMR to run big data analytics applications on Apache Spark, Apache Hive, Apache HBase, Apache Flink, Apache Hudi, and Presto at scale. Amazon EMR automates the provisioning and scaling of these frameworks, and delivers high performance at low cost with optimized runtimes and support for a wide range of Amazon Elastic Compute Cloud (Amazon EC2) instance types and Amazon Elastic Kubernetes Service (Amazon EKS) clusters. Amazon EMR makes it easy for data engineers and data scientists to develop, visualize, and debug data science applications with Amazon EMR Studio (preview) and Amazon EMR Notebooks.

You can hear customers describe how they use Amazon EMR in the following 2020 AWS re:Invent sessions:

- How Nielsen built a multi-petabyte data platform using Amazon EMR

- Contextual targeting and ad tech migration best practices

- The right tool for the job: Enabling analytics at scale at Intuit

You can also find more information in the following posts:

- How the Allen Institute uses Amazon EMR and AWS Step Functions to process extremely wide transcriptomic datasets

- How the ZS COVID-19 Intelligence Engine helps Pharma & Med device manufacturers understand local healthcare needs & gaps at scale

- Dream11’s journey to building their Data Highway on AWS

- Enhancing customer safety by leveraging the scalable, secure, and cost-optimized Toyota Connected Data Lake

Throughout 2020, we worked to deliver better Amazon EMR performance at a lower price, and to make Amazon EMR easier to manage and use for big data analytics within your Lake House Architecture. This post summarizes the key improvements during the year and provides links to additional information.

Differentiated engine performance

Amazon EMR simplifies building and operating big data environments and applications. You can launch an EMR cluster in minutes. You don’t need to worry about infrastructure provisioning, cluster setup, configuration, or tuning. Amazon EMR takes care of these tasks, allowing you to focus your teams on developing differentiated big data applications. In addition to eliminating the need for you to build and manage your own infrastructure to run big data applications, Amazon EMR gives you better performance than simply using open-source distributions, and provides 100% API compatibility. This means you can run your workloads faster without changing any code.

Amazon EMR runtime for Apache Spark is a performance-optimized runtime environment for Spark that is active by default. We first introduced the EMR runtime for Apache Spark in Amazon EMR release 5.28.0 in November 2019, and used queries based on the TPC-DS benchmark to measure the performance improvement over open-source Spark 2.4. Those results showed considerable improvement: the geometric mean in query execution time was 2.4 times faster and the total query runtime was 3.2 times faster. As discussed in Turbocharging Query Execution on Amazon EMR at AWS re:Invent 2020, we’ve continued to improve the runtime, and our latest results show that Amazon EMR 5.30 is three times faster than without the runtime, which means you can run petabyte-scale analysis at less than half the cost of traditional on-premises solutions. For more information, see How Drop used the EMR runtime for Apache Spark to halve costs and get results 5.4 times faster.
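The geometric mean and the total runtime can give different speedup figures for the same benchmark run, because the total is dominated by the longest queries while the geometric mean weights every query equally. The following Python sketch shows the arithmetic with made-up timings for three queries. The numbers are illustrative only and are not benchmark data.

```python
import math


def geometric_mean_speedup(baseline_seconds, optimized_seconds):
    """Geometric mean of the per-query speedups (baseline / optimized)."""
    ratios = [b / o for b, o in zip(baseline_seconds, optimized_seconds)]
    return math.exp(sum(math.log(r) for r in ratios) / len(ratios))


def total_runtime_speedup(baseline_seconds, optimized_seconds):
    """Speedup of the whole run: total baseline time / total optimized time."""
    return sum(baseline_seconds) / sum(optimized_seconds)


# Made-up timings for three queries, in seconds.
baseline = [10.0, 40.0, 900.0]
optimized = [10.0, 20.0, 225.0]

gm = geometric_mean_speedup(baseline, optimized)
total = total_runtime_speedup(baseline, optimized)
```

With these timings, the per-query speedups are 1, 2, and 4, so the geometric mean is 2.0, while the total runtime drops from 950 to 255 seconds, a speedup of about 3.7, because the slowest query improved the most.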

We’ve also improved Hive and PrestoDB performance. In April 2020, we announced support for Hive Low Latency Analytical Processing (LLAP) as a YARN service starting with Amazon EMR 6.0. Our tests show that Apache Hive is two times faster with Hive LLAP on Amazon EMR 6.0. You can choose to use Hive LLAP or dynamically allocated containers. In May 2020, we introduced the Amazon EMR runtime for PrestoDB in Amazon EMR 5.30. Our most recent tests based on TPC-DS benchmark queries compare Amazon EMR 5.31, which uses the runtime, to Amazon EMR 5.29, which does not. The geometric mean in query execution time is 2.6 times faster with Amazon EMR 5.31 and the runtime for PrestoDB.

Simpler incremental data processing

Apache Hudi (Hadoop Upserts, Deletes, and Incrementals) is an open-source data management framework used for simplifying incremental data processing and data pipeline development. You can use it to perform record-level inserts, updates, and deletes in Amazon Simple Storage Service (Amazon S3) data lakes, thereby simplifying building change data capture (CDC) pipelines. With this capability, you can comply with data privacy regulations and simplify data ingestion pipelines that deal with late-arriving or updated records from sources like streaming inputs and CDC from transactional systems. Apache Hudi integrates with open-source big data analytics frameworks like Apache Spark, Apache Hive, and Presto, and allows you to maintain data in Amazon S3 or HDFS in open formats like Apache Parquet and Apache Avro.

We first supported Apache Hudi starting with Amazon EMR release 5.28 in November 2019. In June 2020, Apache Hudi graduated from the Apache Incubator with release 0.6.0, which we support with Amazon EMR releases 5.31.0, 6.2.0, and higher. The Amazon EMR team collaborated with the Apache Hudi community to create a new bootstrap operation, which allows you to use Hudi with your existing Parquet datasets without needing to rewrite the dataset. This bootstrap operation accelerates the process of creating a new Apache Hudi dataset from existing datasets: in our tests using a 1 TB Parquet dataset on Amazon S3, the bootstrap performed five times faster than bulk insert.

Also in June 2020, starting with Amazon EMR release 5.30.0, we added support for the HoodieDeltaStreamer utility, which provides an easy way to ingest data from many sources, including AWS Database Migration Service (AWS DMS). With this integration, you can now ingest data from upstream relational databases to your S3 data lakes in a seamless, efficient, and continuous manner. For more information, see Apply record level changes from relational databases to Amazon S3 data lake using Apache Hudi on Amazon EMR and AWS Database Migration Service.

Amazon Athena and Amazon Redshift Spectrum added support for querying Apache Hudi datasets in S3-based data lakes—Athena announcing in July 2020 and Redshift Spectrum announcing in September. Now, you can query the latest snapshot of Apache Hudi Copy-on-Write (CoW) datasets from both Athena and Redshift Spectrum, even while you continue to use Apache Hudi support in Amazon EMR to make changes to the dataset.

Differentiated instance performance

In addition to providing better software performance with Amazon EMR runtimes, we offer more instance options than any other cloud provider, allowing you to choose the instance that gives you the best performance and cost for your workload. You choose what types of EC2 instances to provision in your cluster (standard, high memory, high CPU, high I/O) based on your application’s requirements, and fully customize your cluster to suit your requirements.

In December 2020, we announced that Amazon EMR now supports M6g, C6g, and R6g instances with versions 6.1.0, 5.31.0 and later, which enables you to use instances powered by AWS Graviton2 processors. Graviton2 processors are custom designed by AWS using 64-bit Arm Neoverse cores to deliver the best price performance for cloud workloads running in Amazon EC2. Although your performance benefit will vary based on the unique characteristics of your workloads, our tests based on the TPC-DS 3 TB benchmark showed that the EMR runtime for Apache Spark provides up to 15% improved performance and up to 30% lower costs on Graviton2 instances relative to equivalent previous generation instances.

Easier cluster optimization

We’ve also made it easier to optimize your EMR clusters. In July 2020, we introduced Amazon EMR Managed Scaling, a new feature that automatically resizes your EMR clusters for best performance at the lowest possible cost. EMR Managed Scaling eliminates the need to predict workload patterns in advance or write custom automatic scaling rules that depend on an in-depth understanding of the application framework (for example, Apache Spark or Apache Hive). Instead, you specify the minimum and maximum compute resource limits for your clusters, and Amazon EMR constantly monitors key metrics based on the workload and optimizes the cluster size for best resource utilization. Amazon EMR can scale the cluster up during peaks and scale it down gracefully during idle periods, reducing your costs by 20–60% and optimizing cluster capacity for best performance.
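As a rough sketch of the "specify the minimum and maximum compute resource limits" step, the following Python builds the request that the boto3 EMR client's `put_managed_scaling_policy` call accepts. The helper name `managed_scaling_request`, the cluster ID, and the limits are hypothetical placeholders chosen for illustration; adjust the unit type and values to your own cluster.

```python
from typing import Any, Dict


def managed_scaling_request(cluster_id: str, min_units: int, max_units: int) -> Dict[str, Any]:
    """Build keyword arguments for the EMR PutManagedScalingPolicy API."""
    if not 1 <= min_units <= max_units:
        raise ValueError("expected 1 <= min_units <= max_units")
    return {
        "ClusterId": cluster_id,
        "ManagedScalingPolicy": {
            "ComputeLimits": {
                # Other accepted unit types are "InstanceFleetUnits" and "VCPU".
                "UnitType": "Instances",
                "MinimumCapacityUnits": min_units,
                "MaximumCapacityUnits": max_units,
            }
        },
    }


# Hypothetical cluster ID and limits, for illustration only.
request = managed_scaling_request("j-EXAMPLE", min_units=2, max_units=20)

# With boto3 installed and credentials configured, the request could be sent as:
#   boto3.client("emr").put_managed_scaling_policy(**request)
```

Keeping the request as a plain dictionary makes the limits easy to review or store in version control before anything is applied to a running cluster.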

EMR Managed Scaling is supported for Apache Spark, Apache Hive, and YARN-based workloads on Amazon EMR versions 5.30.1 and above. EMR Managed Scaling supports EMR instance fleets, enabling you to seamlessly scale Spot Instances, On-Demand Instances, and instances that are part of a Savings Plan, all within the same cluster. You can take advantage of Managed Scaling and instance fleets to provision the cluster capacity that has the lowest chance of getting interrupted, for the lowest cost.

In October 2020, we announced Amazon EMR support for the capacity-optimized allocation strategy for provisioning EC2 Spot Instances. The capacity-optimized allocation strategy automatically makes the most efficient use of available spare capacity while still taking advantage of the steep discounts offered by Spot Instances. You can now specify up to 15 instance types in your EMR task instance fleet configuration. This provides Amazon EMR with more options in choosing the optimal pools to launch Spot Instances from in order to decrease chances of Spot interruptions, and increases the ability to relaunch capacity using other instance types in case Spot Instances are interrupted when Amazon EC2 needs the capacity back.
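To make the task fleet configuration concrete, here is a minimal sketch of an instance fleet definition in the shape used by the EMR `RunJobFlow` and `AddInstanceFleet` APIs. The helper name, fleet name, instance types, timeout, and target capacity are illustrative assumptions, and the limit of 15 instance types is taken from the text above rather than checked against the service.

```python
from typing import Any, Dict, List

MAX_TASK_FLEET_TYPES = 15  # limit quoted in the text above


def task_fleet_config(instance_types: List[str], target_spot_units: int) -> Dict[str, Any]:
    """Build a task instance fleet that uses the capacity-optimized Spot strategy."""
    if not instance_types or len(instance_types) > MAX_TASK_FLEET_TYPES:
        raise ValueError(f"provide between 1 and {MAX_TASK_FLEET_TYPES} instance types")
    return {
        "Name": "task-fleet",  # hypothetical fleet name
        "InstanceFleetType": "TASK",
        "TargetSpotCapacity": target_spot_units,
        "InstanceTypeConfigs": [
            {"InstanceType": instance_type, "WeightedCapacity": 1}
            for instance_type in instance_types
        ],
        "LaunchSpecifications": {
            "SpotSpecification": {
                "TimeoutDurationMinutes": 60,
                "TimeoutAction": "SWITCH_TO_ON_DEMAND",
                "AllocationStrategy": "capacity-optimized",
            }
        },
    }


# Example instance types only; pick the ones that suit your workload.
fleet = task_fleet_config(["m5.xlarge", "m5a.xlarge", "m4.xlarge", "r5.xlarge"], target_spot_units=8)
```

Listing more instance types gives Amazon EMR more Spot pools to choose from, which is the point of the capacity-optimized strategy.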

For more information, see How Nielsen built a multi-petabyte data platform using Amazon EMR and Contextual targeting and ad tech migration best practices.

Workload consolidation

Previously, you had to choose between using fully managed Amazon EMR on Amazon EC2 or self-managing Apache Spark on Amazon EKS. When you use Amazon EMR on Amazon EC2, you can choose from a wide range of EC2 instance types to meet price and performance requirements, but you can’t run multiple versions of Apache Spark or other applications on a cluster, and you can’t use unused capacity for non-Amazon EMR applications. When you self-manage Apache Spark on Amazon EKS, you have to do the heavy lifting of installing, managing, and optimizing Apache Spark to run on Kubernetes, and you don’t get the benefit of optimized runtimes in Amazon EMR.

You no longer have to choose. In December 2020, we announced the general availability of Amazon EMR on Amazon EKS, a new deployment option for Amazon EMR that allows you to run fully managed open-source big data frameworks on Amazon EKS. If you already use Amazon EMR, you can now consolidate Amazon EMR-based applications with other Kubernetes-based applications on the same Amazon EKS cluster to improve resource utilization and simplify infrastructure management using common Amazon EKS tools. If you currently self-manage big data frameworks on Amazon EKS, you can now use Amazon EMR to automate provisioning and management, and take advantage of the optimized Amazon EMR runtimes to deliver better performance at lower cost.

Amazon EMR on EKS enables your team to collaborate more efficiently. You can run applications on a common pool of resources without having to provision infrastructure, and co-locate multiple Amazon EMR versions on a single Amazon EKS cluster to rapidly test and verify new Amazon EMR versions and the included open-source frameworks. You can improve developer productivity with faster cluster startup times because Amazon EMR application containers on existing Amazon EKS cluster instances start within 15 seconds, whereas creating new clusters of EC2 instances can take several minutes. You can use Amazon Managed Workflows for Apache Airflow (Amazon MWAA) to programmatically author, schedule, and monitor workflows, and use EMR Studio (preview) to develop, visualize, and debug applications. We discuss Amazon MWAA and EMR Studio more in the next section.
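One way to picture co-locating multiple Amazon EMR versions is to submit the same Spark job to one virtual cluster under two release labels. The sketch below only builds the requests, in the shape accepted by the boto3 `emr-containers` client's `start_job_run` call. The virtual cluster ID, role ARN, S3 path, and release labels are placeholder values, and the helper name is hypothetical.

```python
from typing import Any, Dict


def spark_job_request(virtual_cluster_id: str, release_label: str, name: str) -> Dict[str, Any]:
    """Build keyword arguments for the emr-containers StartJobRun API."""
    return {
        "name": name,
        "virtualClusterId": virtual_cluster_id,
        # Placeholder role ARN; use the job execution role for your account.
        "executionRoleArn": "arn:aws:iam::111122223333:role/example-job-role",
        "releaseLabel": release_label,
        "jobDriver": {
            "sparkSubmitJobDriver": {
                "entryPoint": "s3://example-bucket/jobs/etl.py",  # placeholder script
                "sparkSubmitParameters": "--conf spark.executor.instances=2",
            }
        },
    }


# Same virtual cluster, two EMR releases (example labels).
current = spark_job_request("vc-example", "emr-5.32.0-latest", "etl-current")
candidate = spark_job_request("vc-example", "emr-6.2.0-latest", "etl-candidate")

# With boto3 installed and credentials configured:
#   boto3.client("emr-containers").start_job_run(**candidate)
```

Because the release label is part of each job request rather than of the cluster, testing a new Amazon EMR version is a matter of changing one field.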

For more information, see Run Spark on Kubernetes with Amazon EMR on Amazon EKS and Amazon EMR on EKS Development Guide.

Higher developer productivity

Of course, your goal with Amazon EMR is not only to achieve the best price performance for your big data analytics workloads, but also to deliver new insights that help you run your business.

In November 2020, we announced Amazon MWAA, a fully managed service that makes it easy to run open-source versions of Apache Airflow on AWS, and to build workflows to run your extract, transform, and load (ETL) jobs and data pipelines. Airflow workflows retrieve input from sources like Amazon S3 using Athena queries, perform transformations on EMR clusters, and can use the resulting data to train machine learning (ML) models on Amazon SageMaker. Workflows in Airflow are authored as Directed Acyclic Graphs (DAGs) using the Python programming language.
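To illustrate what "authored as a DAG" means, here is a plain-Python sketch that uses only the standard library (`graphlib`, Python 3.9 and later) and deliberately does not use Airflow's own API. The task names are hypothetical and mirror the Athena, EMR, and SageMaker stages mentioned above.

```python
from graphlib import TopologicalSorter

# Each key is a task; its value is the set of tasks that must finish first.
pipeline = {
    "athena_query": set(),
    "emr_transform": {"athena_query"},
    "sagemaker_train": {"emr_transform"},
}

# A topological sort yields an order that respects every dependency.
run_order = list(TopologicalSorter(pipeline).static_order())

for task in run_order:
    print(f"run {task}")
```

A scheduler such as Airflow works from the same kind of dependency graph, and adds scheduling, retries, and monitoring on top of it.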

At AWS re:Invent 2020, we introduced the preview of EMR Studio, a new notebook-first integrated development environment (IDE) experience with Amazon EMR. EMR Studio makes it easy for data scientists to develop, visualize, and debug applications written in R, Python, Scala, and PySpark. It provides fully managed Jupyter notebooks and tools like Spark UI and YARN Timeline Service to simplify debugging. You can install custom Python libraries or Jupyter kernels required for your applications directly to your EMR clusters, and can connect to code repositories such as AWS CodeCommit, GitHub, and Bitbucket to collaborate with peers. EMR Studio uses AWS Single Sign-On (AWS SSO), enabling you to log in directly with your corporate credentials without signing in to the AWS Management Console.

EMR Studio kernels and applications run on EMR clusters, so you get the benefit of distributed data processing using the performance-optimized EMR runtime for Apache Spark. You can create cluster templates in AWS Service Catalog to simplify running jobs for your data scientists and data engineers, and can take advantage of EMR clusters running on Amazon EC2, Amazon EKS, or both. For example, you might reuse existing EC2 instances in your shared Kubernetes cluster to enable fast startup time for development work and ad hoc analysis, and use EMR clusters on Amazon EC2 to ensure the best performance for frequently run, long-running workloads.

To learn more, see Introducing a new notebook-first IDE experience with Amazon EMR and Amazon EMR Studio.

Unified governance

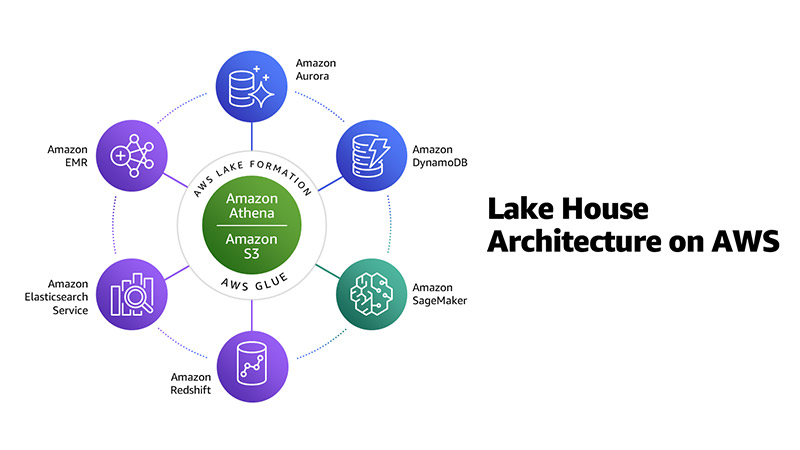

At AWS, we recommend you use a Lake House Architecture to modernize your data and analytics infrastructure in the cloud. A Lake House Architecture acknowledges the idea that taking a one-size-fits-all approach to analytics eventually leads to compromises. It’s not simply about integrating a data lake with a data warehouse, but rather about integrating a data lake, data warehouse, and purpose-built analytics services, and enabling unified governance and easy data movement. For more information about this approach, see Harness the power of your data with AWS Analytics by Rahul Pathak, and his AWS re:Invent 2020 analytics leadership session.

As shown in the preceding diagram, Amazon EMR is one element in a Lake House Architecture on AWS, along with Amazon S3, Amazon Redshift, and more.

One of the most important pieces of a modern analytics architecture is the ability for you to authorize, manage, and audit access to data. AWS gives you the fine-grained access control and governance you need to manage access to data across a data lake and purpose-built data stores and analytics services from a single point of control.

In October 2020, we announced the general availability of Amazon EMR integration with AWS Lake Formation. By integrating Amazon EMR with AWS Lake Formation, you can enhance data access control on multi-tenant EMR clusters by managing Amazon S3 data access at the level of databases, tables, and columns. This feature also enables SAML-based single sign-on to EMR Notebooks and Apache Zeppelin, and simplifies the authentication for organizations using Active Directory Federation Services (ADFS). With this integration, you have a single place to manage data access for Amazon EMR, along with the other AWS analytics services shown in the preceding diagram. At AWS re:Invent 2020, we announced the preview of row-level security for Lake Formation, which makes it even easier to control access for all the people and applications that need to share data.
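As an illustration of column-level access control, the following sketch builds a request in the shape accepted by the boto3 Lake Formation client's `grant_permissions` call. The helper name, principal ARN, database, table, and column names are all hypothetical.

```python
from typing import Any, Dict, List


def column_select_grant(principal_arn: str, database: str, table: str, columns: List[str]) -> Dict[str, Any]:
    """Build keyword arguments granting SELECT on specific columns of one table."""
    if not columns:
        raise ValueError("list at least one column")
    return {
        "Principal": {"DataLakePrincipalIdentifier": principal_arn},
        "Resource": {
            "TableWithColumns": {
                "DatabaseName": database,
                "Name": table,
                "ColumnNames": columns,
            }
        },
        "Permissions": ["SELECT"],
    }


# Hypothetical analyst role that may read only two columns of the orders table.
grant = column_select_grant(
    "arn:aws:iam::111122223333:role/example-analyst",
    database="sales",
    table="orders",
    columns=["order_id", "order_total"],
)

# With boto3 installed and credentials configured:
#   boto3.client("lakeformation").grant_permissions(**grant)
```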

In January 2021, we introduced Amazon EMR integration with Apache Ranger. Apache Ranger is an open-source project that provides authorization and audit capabilities for Hadoop and related big data applications like Apache Hive, Apache HBase, and Apache Kafka. Starting with Amazon EMR 5.32, we’re including plugins to integrate with Apache Ranger 2.0 that enable authorization and audit capabilities for Apache SparkSQL, Amazon S3, and Apache Hive. You can set up a multi-tenant EMR cluster, use Kerberos for user authentication, use Apache Ranger 2.0 (managed separately outside the EMR cluster) for authorization, and configure fine-grained data access policies for databases, tables, columns, and S3 objects.

With this native integration, you use the Amazon EMR security configuration to specify Apache Ranger details, without the need for custom bootstrap scripts. You can reuse existing Apache Hive Ranger policies, including support for row-level filters and column masking.

To learn more, see Integrate Amazon EMR with AWS Lake Formation and Integrate Amazon EMR with Apache Ranger.

Jumpstart your migration to Amazon EMR

Building a modern data platform using the Lake House Architecture enables you to collect data of all types, store it in a central, secure repository, and analyze it with purpose-built tools like Amazon EMR. Migrating your big data and ML to AWS and Amazon EMR offers many advantages over on-premises deployments. These include separation of compute and storage, increased agility, resilient and persistent storage, and managed services that provide up-to-date, familiar environments to develop and operate big data applications. We can help you design, deploy, and architect your analytics application workloads in AWS and help you migrate your big data and applications.

The AWS Well-Architected Framework helps you understand the pros and cons of decisions you make while building systems on AWS. By using the framework, you learn architectural best practices for designing and operating reliable, secure, efficient, and cost-effective systems in the cloud, and ways to consistently measure your architectures against best practices and identify areas for improvement. In May 2020, we announced the Analytics Lens for the AWS Well-Architected Framework, which offers comprehensive guidance to make sure that your analytics applications are designed in accordance with AWS best practices. We believe that having well-architected systems greatly increases the likelihood of business success.

To move to Amazon EMR, you can download the Amazon EMR migration guide to follow step-by-step instructions, get guidance on key design decisions, and learn best practices. You can also request an Amazon EMR Migration Workshop, a virtual workshop to jumpstart your Apache Hadoop/Spark migration to Amazon EMR. For examples of how AWS partners have helped customers migrate to Amazon EMR, see Mactores’s Seagate case study, Cloudwick’s on-premises to AWS Cloud migration to drive cost efficiency, and DNM’s global analytics platform for the cinema industry.

About the Authors

Abhishek Sinha is a Principal Product Manager at Amazon Web Services.

Al MS is a product manager for Amazon EMR at Amazon Web Services.

BJ Haberkorn is principal product marketing manager for analytics at Amazon Web Services. BJ has worked previously on voice technology including Amazon Alexa, real time communications systems, and processor design. He holds BS and MS degrees in electrical engineering from the University of Virginia.