Post Syndicated from maceneff original https://aws.amazon.com/blogs/compute/validating-attestation-documents-produced-by-aws-nitro-enclaves/

This blog post is written by Paco Gonzalez Senior EMEA IoT Specialist SA.

AWS Nitro Enclaves offers an isolated, hardened, and highly constrained environment to host security-critical applications. Think of AWS Nitro Enclaves as regular Amazon Elastic Compute Cloud (Amazon EC2) virtual machines (VMs) but with the added benefit of the environment being highly constrained.

A great benefit of using AWS Nitro Enclaves is that you can run your software as if it was a regular EC2 instance, but with no persistent storage and limited access to external systems. The only way to communicate with AWS Nitro Enclaves is using a VSOCK socket. This special type of communication mechanism acts as an isolated communication channel between the parent EC2 instance and AWS Nitro Enclaves.

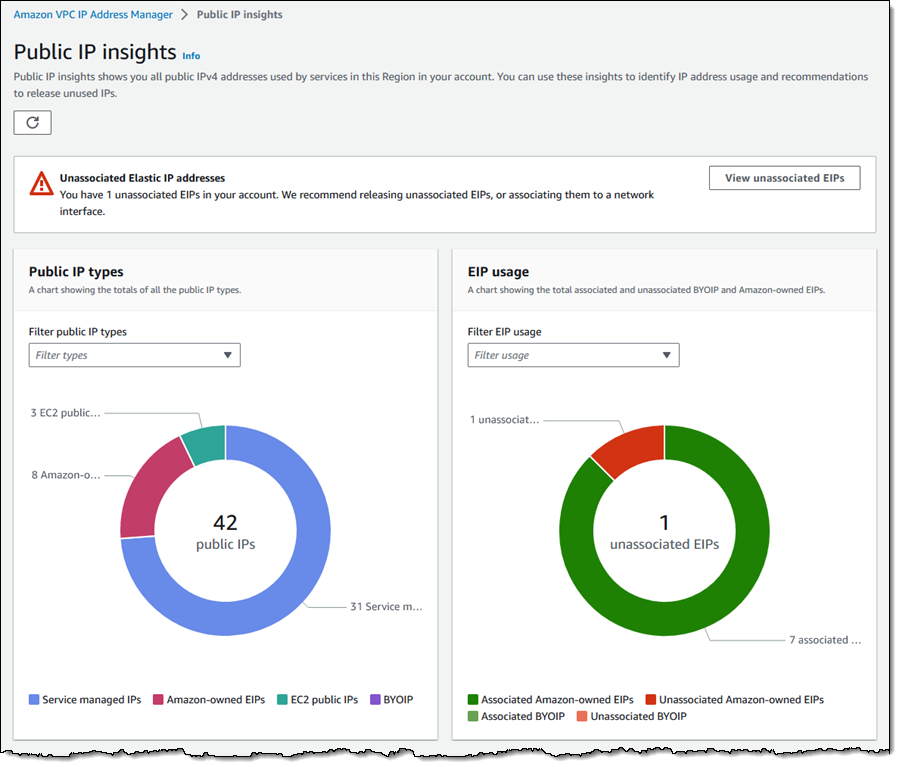

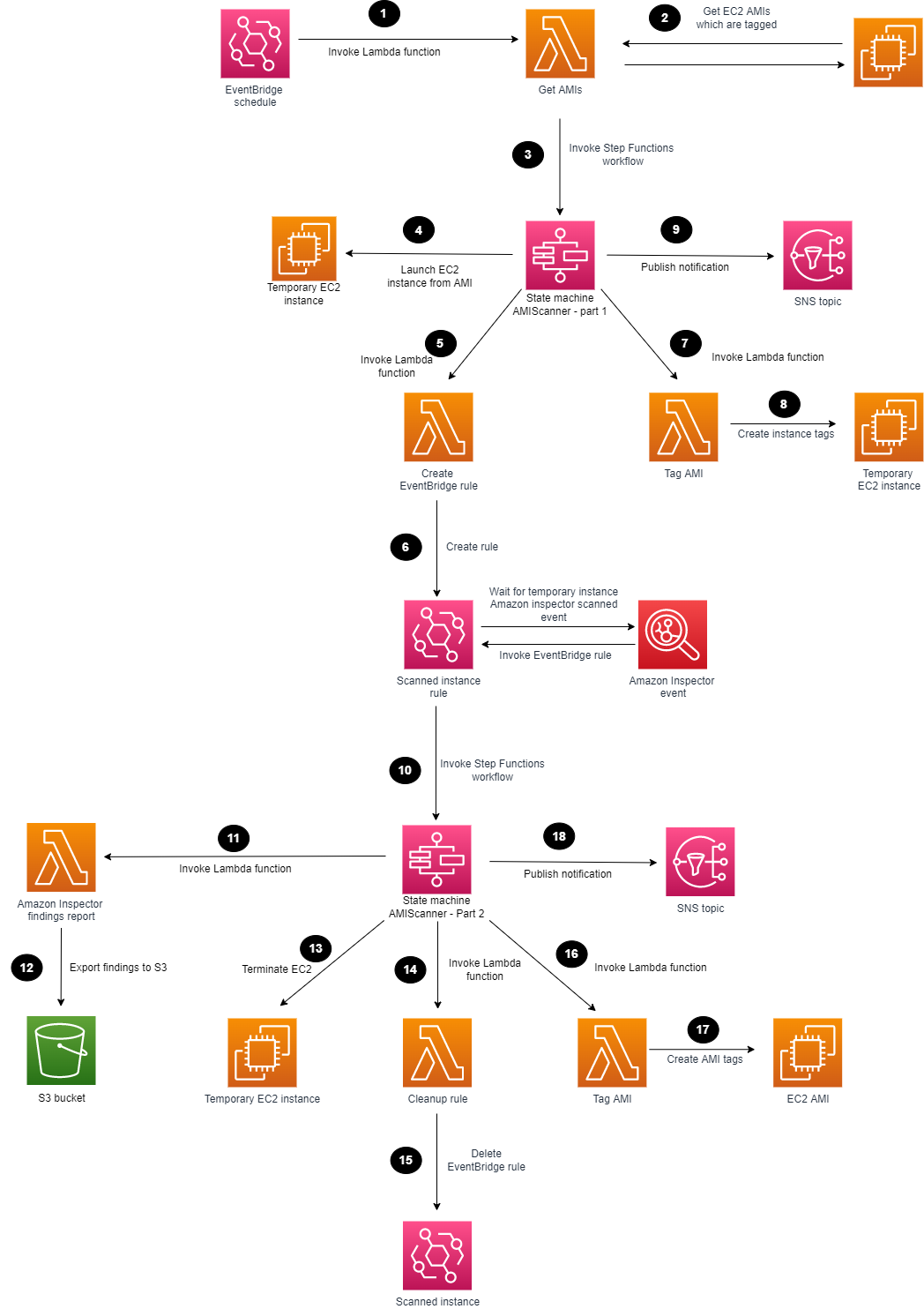

Fig 1 – AWS Nitro Enclaves uses the proven isolation of the Nitro Hypervisor to further isolate the CPU and memory of the Nitro Enclaves from users, applications, and libraries on the parent instance.

AWS Nitro Enclaves comes with a custom Linux device called the Nitro Security Module (NSM), which is accessible via /dev/nsm. This device provides attestation capability to the Nitro Enclaves. The attestation comes in the form of an attestation document. The attestation document makes it easy and safe to build trust between systems that interact with the Nitro Enclaves. The external system must have a mechanism to process the attestation document to determine the validity of the attestation document.

In this post, I go through the anatomy of an attestation document produced by the NSM API. I then show you an example of how to perform different validations that help determine the accuracy of an attestation document produced by the AWS Nitro Enclaves Security Module. I use syntactic and semantic validations to check for the attestation document’s correctness before proceeding with a cryptographic validation of the contents of the document’s payload. The examples used in this post use the C language. Look at the companion repository available in GitHub for access to the all source code used in this post.

Anatomy of an attestation document produced by AWS Nitro Enclaves

The attestation document uses the Concise Binary Object Representation (CBOR) format to encode the data. The CBOR object is wrapped using the CBOR Object Signing and Encryption (COSE) protocol. The COSE format used is a single-signer data structure called “COSE_Sign1”. The object is comprised of headers, the payload, and a signature.

For more information about COSE, see RFC 8152: CBOR Object Signing and Encryption (COSE). For more information about CBOR, see RFC 8949 Concise Binary Object Representation (CBOR).

We published a library to make it easy to interact with the NSM. The library contains helpers which your application, running on the Nitro Enclaves, can use to communicate with the NSM device.

Here is the minimum code needed to generate an attestation document:

#include <stdlib.h>

#include <stdio.h>

#include <nsm.h>

#define NSM_MAX_ATTESTATION_DOC_SIZE (16 * 1024)

int main(void) {

/// NSM library initialization function.

/// *Returns*: A descriptor for the opened device file.

int nsm_fd = nsm_lib_init();

if (nsm_fd < 0) {

exit(1);

}

/// NSM `GetAttestationDoc` operation for non-Rust callers.

/// *Argument 1 (input)*: The descriptor to the NSM device file.

/// *Argument 2 (input)*: User data.

/// *Argument 3 (input)*: The size of the user data buffer.

/// *Argument 4 (input)*: Nonce data.

/// *Argument 5 (input)*: The size of the nonce data buffer.

/// *Argument 6 (input)*: Public key data.

/// *Argument 7 (input)*: The size of the public key data buffer.

/// *Argument 8 (output)*: The obtained attestation document.

/// *Argument 9 (input / output)*: The document buffer capacity (as input)

/// and the size of the received document (as output).

/// *Returns*: The status of the operation.

int status;

uint8_t att_doc_buff[NSM_MAX_ATTESTATION_DOC_SIZE];

uint32_t att_doc_cap_and_size = NSM_MAX_ATTESTATION_DOC_SIZE;

status = nsm_get_attestation_doc(nsm_fd, NULL, 0, NULL, 0, NULL, 0, att_doc_buff,

&att_doc_cap_and_size);

if (status != ERROR_CODE_SUCCESS) {

printf("[Error] Request::Attestation got invalid response: %s\n",status);

exit(1);

}

printf("########## attestation_document_buff ##########\r\n");

for(int i=0; i<att_doc_cap_and_size; i++)

fprintf(stdout, "%02X", att_doc_buff[i]);

exit(0);

}

To produce a sample attestation document, initialize the device, call the function ‘nsm_get_attestation_doc’ inside the AWS Nitro Enclaves, and dump the contents. The library is written using Rust, but it contains

bindings for C. You can read more about the library and some of the other relevant capabilities

here.

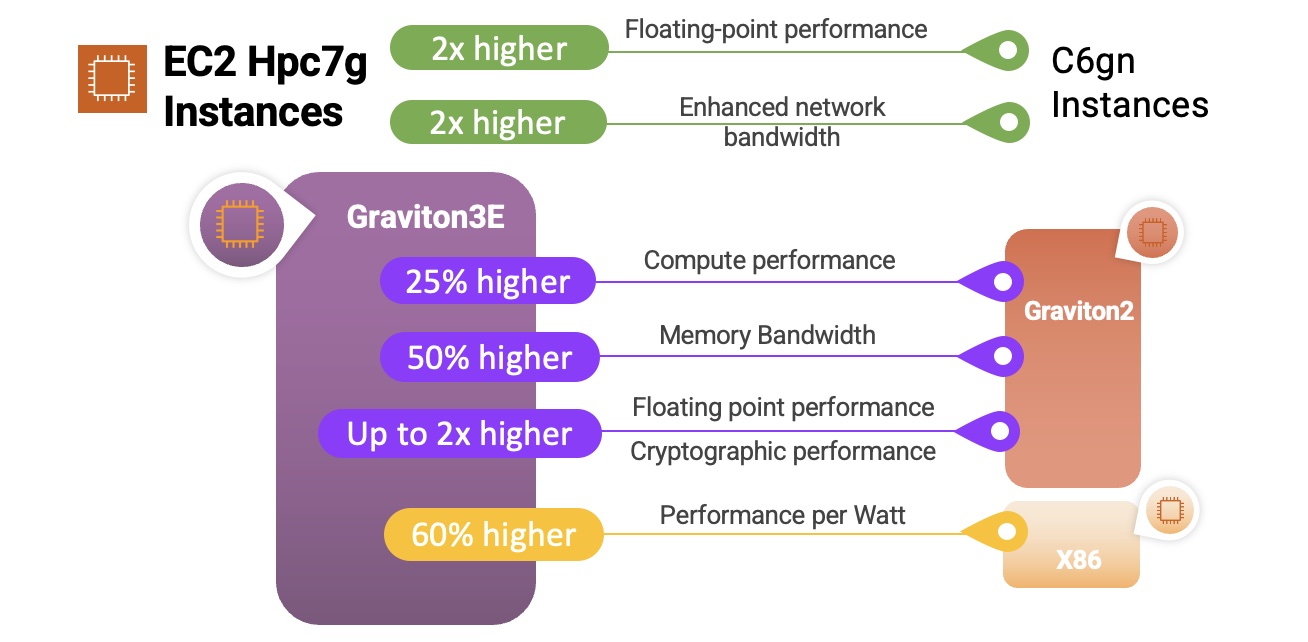

The COSE headers contain a protected and an un-protected data section. The cryptographic algorithm used for the signature is specified inside the protected area. AWS Nitro Enclaves use a 384-bit elliptic curve algorithm (P-384) to sign attestation documents. AWS Nitro Enclaves do not use the unprotected data field so it is always left blank.

The payload contains fixed parameters that include the following: information about the issuing NSM, a timestamp of the issuing event, a map of all the locked Platform Configuration Registers (PCRs) at the moment the attestation document was generated, the hashing algorithm used to produce the digest that was used to calculate the PCR values – AWS Nitro Enclaves use a 384 bit secure hashing algorithm (SHA384), a x509 certificate signed by AWS Nitro Enclaves’ Private Public Key Infrastructure (PKI). An AWS Nitro Enclaves certificate expires three hours after it has been issued. The common name (CN) contains information about the issuing NSM – and finally the issuing Certificate Authority (CA) bundle. The payload also contains optional parameters that a third-party application can use to create custom authentication and authorization workflows. The optional parameters are: a public key, a cryptographic nonce, and additional arbitrary data.

Finally, the signature is the result of a signing operation using the private key related to the public key contained inside the certificate that is part of the payload.

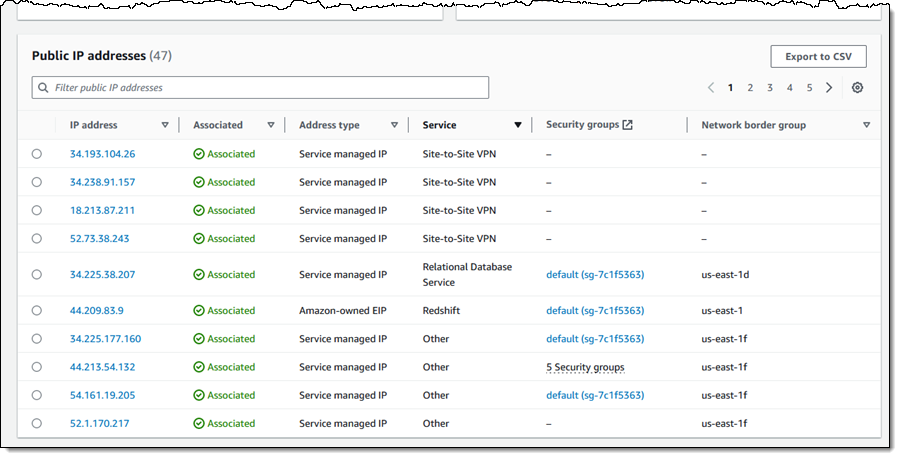

Fig 2. An attestation document is generated and signed by the Nitro Hypervisor. It contains information about the Nitro Enclaves and it can be used by an external service to verify the identity of Nitro Enclaves and to establish trust. You can use the attestation document to build your own cryptographic attestation mechanisms.

Syntactical validation

Early validation of the attestation document format makes sure that only documents that conform to the expected structure are processed in subsequent steps.

I start by attempting to decode the CBOR object and testing to see if it corresponds to a COSE object signed with one signer or ‘COSE_Sign1’ structure. This can be easily done by looking at the most significant first three bits (MSB) of the first byte – I am expecting a stream of CBOR bytes (decimal 6). Then, I take the least significant (LSB) remaining five bits of the first byte – I am expecting a tag that tells me it is a COSE_Sign1 object (decimal 18).

assert(att_doc_buff[0*] == 6 <<5 | 18); // 0xD2

* Note that the time of writing, the NSM does not include the COSE tag and thus this validation cannot be made and is mentioned in this post for informational purposes only. However, it is important to keep this in mind, as the tag is part of the standard, and the NSM device or library could include it in the future.*

The next step is to parse the actual CBOR object. A COSE_Sign1 object is an array of size 4 (protected headers, un protected headers, payload, and signature). Therefore, I must check that the next three MSB correspond to Type 4 (array) and that the size is exactly 4.

assert(att_doc_buff[0] == 4 <<5 | 4); // 0x84

The next byte determines what the first CBOR item of the array looks like. I am expecting the protected COSE header as the first item of the array. The CBOR field should indicate that the contents of the item are of a Type 2 (raw bytes) and the size should be exactly 4.

assert(att_doc_buff[1] == 2 <<5 | 4); // 0x44

The next four bytes represent the protected header. The contents of this item is a regular CBOR object. The object should contain a Type 5 (map) with a single item (1). The item first key is expected to be the number 1. The first three MSB of the first byte should be a Type 1 (negative integer). The remaining five LSB should indicate that the value is an 8-bit number (decimal 24). The last byte should be negative 35 as it maps to the P-384 curve that Nitro Enclaves use. Note that CBOR negative numbers are stored minus 1.

assert(att_doc_buff[2] == 5 <<5 | 1); // 0xA1

assert(att_doc_buff[3] == 0x01); // 0x01

assert(att_doc_buff[4] == 1 <<5 | 24); // 0x38

assert(att_doc_buff[4] == 35-1); // 0x22

The next byte corresponds to the unprotected header. AWS Nitro Enclaves do not use unprotected headers. Therefore, the expected is a Type 5 (map) with zero items.

assert(att_doc_buff[6] == 5 <<5 | 0); // 0xA0

Now that I am done inspecting the headers, I can move onto the payload. The CBOR object used for the payload is Type 2 (raw bytes). This time we are expecting a large steam of bytes. The remaining five LSB are used to indicate the data type used to indicate the size of the byte stream (i.e. 8-bit, 16-bit). AWS Nitro Enclaves attestation documents are about 5 KiB without using any of the three optional parameters. The optional parameters have a size limit of 1 KiB each. This means that it would be highly unlikely for the buffer to be larger than a 16-bit number (CBOR short count: 25).

assert(att_doc_buff[8] == 2 <<5 | 25); // 0x59

The next two bytes represent the size of the payload which I am going to skip those for now, as the contents of the payload are validated in subsequent steps. I’ll move onto the final portion of the attestation document: the signature. The signature has to be a Type 2 (raw bytes) of exactly 96 bytes.

uint16_t payload_size = att_doc_buff[8] << 8 | att_doc_buff[9];

assert(att_doc_buff[9+payload_size+1] == (2<<5 | 24)); // 0x58

assert(att_doc_buff[9+payload_size+1+1] == 96); // 0x60

At this point, I have validated that the data produced by the NSM looks the way it should. My application is ready to start looking into the contents of the attestation document.

I want to make sure that the document contains all mandatory fields and I can check that the fields have the right structure and their sizes are within the expected boundaries. I have evidence that the data looks the way it should, so I am ready to use an off-the-shelf CBOR library to make the validation process easier instead of doing it by hand.

Here is an example of how to load a CBOR object using libcbor and standard C libraries to check the contents. I am showing just one example to illustrate the process. Refer to the section ‘Verifying the root of trust’ in the AWS Nitro Enclaves User Guide for a detailed description of each parameter and the validations that your application should perform to make sure that the document is valid.

#include <stdlib.h>

#include <stdio.h>

#include <string.h>

#include <assert.h>

#include <cbor.h>

#include <openssl/ssl.h>

#define APP_X509_BUFF_LEN (1024*2)

#define APP_ATTDOC_BUFF_LEN (1024*10)

void output_handler(char * msg){

fprintf(stdout, "\r\n%s\r\n", msg);

}

void output_handler_bytes(uint8_t * buffer, int buffer_size){

for(int i=0; i<buffer_size; i++)

fprintf(stdout, "%02X", buffer[i]);

fprintf(stdout, "\r\n");

}

int read_file( unsigned char * file, char * file_name, size_t elements) {

FILE * fp; size_t file_len = 0;

fp = fopen(file_name, "r");

file_len = fread(file, sizeof(char), elements, fp);

if (ferror(fp) != 0 ) {

fputs("Error reading file", stderr);

}

fclose(fp);

return file_len;

}

int main(int argc, char* argv[]) {

// STEP 0 - LOAD ATTESTATION DOCUMENT

// Check inputs, expect two

if (argc != 3) {

fprintf(stderr, "%s\r\n", "ERROR: usage: ./main {att_doc_sample.bin} {AWS_NitroEnclaves_Root-G1.pem}"); exit(1);

}

// Load file into buffer, use 1st argument

unsigned char * att_doc_buff = malloc(APP_ATTDOC_BUFF_LEN);

int att_doc_len = read_file(att_doc_buff, argv[1], APP_ATTDOC_BUFF_LEN );

// STEP 1 - SYTANCTIC VALIDATON

// Check COSE TAG (skipping - not currently implemented by AWS Nitro Enclaves)

// assert(att_doc_buff[0] == 6 <<5 | 18); // 0xD2

// Check if this is an array of exactly 4 items

assert(att_doc_buff[0] == (4<<5 | 4)); // 0x84

// Check if next item is a byte stream of 4 bytes

assert(att_doc_buff[1] == (2<<5 | 4)); // 0x44

// Check is fist item if byte stream is a map with 1 item

assert(att_doc_buff[2] == (5<<5 | 1)); // 0xA1

// Check that the first key of the map is 0x01

assert(att_doc_buff[3] == 0x01); // 0x01

// Check that value of the the first key of the map is -35 (P-384 curve)

assert(att_doc_buff[4] == (1 <<5 | 24)); // 0x38

assert(att_doc_buff[5] == 35-1); // 0x22

// Check that next item is a map of 0 items

assert(att_doc_buff[6] == (5<<5 | 0)); // 0xA0

// Check that the next item is a byte stream and the size is a 16-bit number (dec. 25)

assert(att_doc_buff[7] == (2<<5 | 25)); // 0x59

// Cast the 16-bit number

uint16_t payload_size = att_doc_buff[8] << 8 | att_doc_buff[9];

// Check that the item after the payload is a byte stream and the size is 8-bit number (dec. 24)

assert(att_doc_buff[9+payload_size+1] == (2<<5 | 24)); // 0x58

// Check that the size of the signature is exactly 96 bytes

assert(att_doc_buff[9+payload_size+1+1] == 96); // 0x60

// Parse buffer using library

struct cbor_load_result ad_result;

cbor_item_t * ad_item = cbor_load(att_doc_buff, att_doc_len, &ad_result);

free(att_doc_buff); // not needed anymore

// Parse protected header -> item 0

cbor_item_t * ad_pheader = cbor_array_get(ad_item, 0);

size_t ad_pheader_len = cbor_bytestring_length(ad_pheader);

// Parse signed bytes -> item 2 (skip un-protected headers as they are always empty)

cbor_item_t * ad_signed = cbor_array_get(ad_item, 2);

size_t ad_signed_len = cbor_bytestring_length(ad_signed);

// Load signed bytes as a new CBOR object

unsigned char * ad_signed_d = cbor_bytestring_handle(ad_signed);

struct cbor_load_result ad_signed_result;

cbor_item_t * ad_signed_item = cbor_load(ad_signed_d, ad_signed_len, &ad_signed_result);

// Create the pair structure

struct cbor_pair * ad_signed_item_pairs = cbor_map_handle(ad_signed_item);

// Parse signature -> item 3

cbor_item_t * ad_sig = cbor_array_get(ad_item, 3);

size_t ad_sig_len = cbor_bytestring_length(ad_sig);

unsigned char * ad_sig_d = cbor_bytestring_handle(ad_sig);

// Example 01: Check that the first item's key is the string "module_id" and that is not empty

size_t module_k_len = cbor_string_length(ad_signed_item_pairs[0].key);

unsigned char * module_k_str = realloc(cbor_string_handle(ad_signed_item_pairs[0].key), module_k_len+1); //null char

module_k_str[module_k_len] = '\0';

size_t module_v_len = cbor_string_length(ad_signed_item_pairs[0].value);

unsigned char * module_v_str = realloc(cbor_string_handle(ad_signed_item_pairs[0].value), module_v_len+1); //null char

module_v_str[module_v_len] = '\0';

assert(module_k_len != 0);

assert(module_v_len != 0);

// Example 02: Check that the module id key is actually the string "module_id"

assert(!strcmp("module_id",(const char *)module_k_str));

// Example 03: Check that the signature is exactly 96 bytes long

assert(ad_sig_len == 96);

// Example 04: Check that the protected header is exactly 4 bytes long

assert(ad_pheader_len == 4);

Semantic validation

The next step is to look at the data contained in the attestation document and check if it conforms to pre-defined business rules. The attestation document contains a certificate that was signed by the AWS Nitro Enclaves’ PKI. This validation it is important, as it proves that the document was signed by the AWS Nitro Enclaves’ PKI.

The signature of an x509 certificate is based on the certificate’s payload digest. Validating this signature means that I trust the information contained within the certificate, including the public key which I can later use to validate the attestation document itself. Furthermore, the information in the document contains details about the NSM module and a timestamp. Passing this check provides the assurances I need to trust that the document originated from my software running on AWS Nitro Enclaves at a specific time.

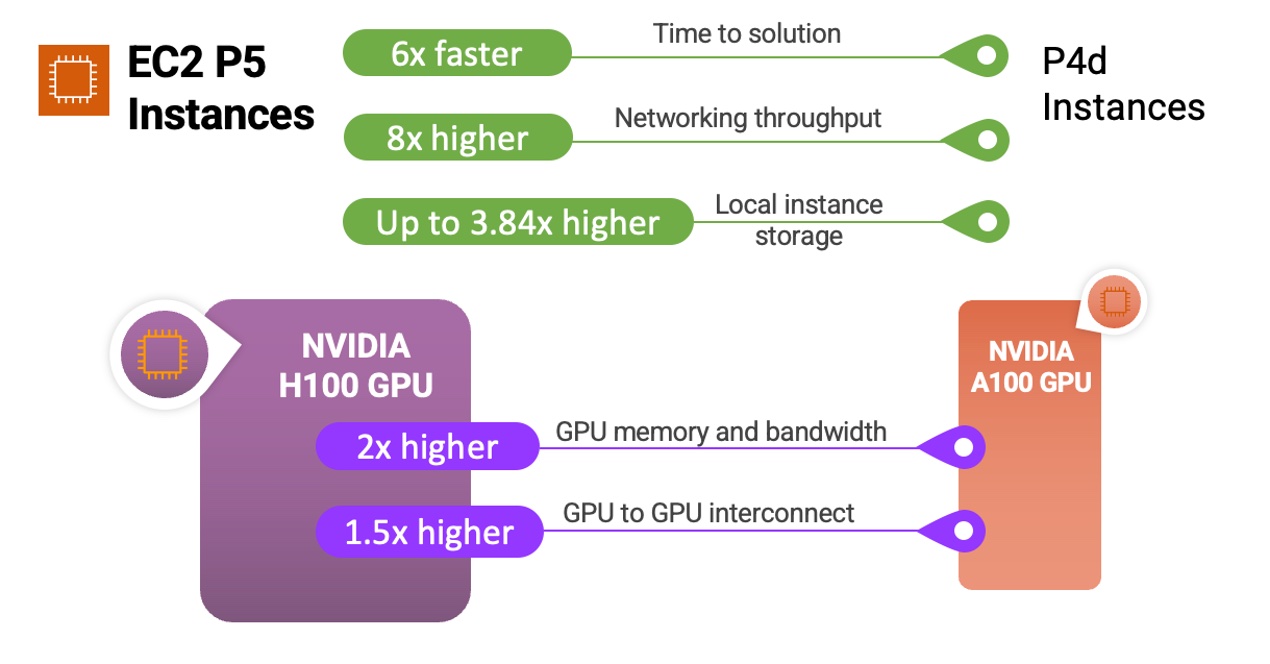

Fig 3. The attestation document contains a x.509 certificate that was signed by the AWS Nitro Enclaves’ PKI.

Here is an example of how I use the AWS Nitro Enclaves’ Private PKI root certificate from an external file. Then, use the CA bundle contained in the attestation document to validate the authenticity of the certificate contained in the document. In this example, I am using the OpenSSL library.

// STEP 2 - SEMANTIC VALIDATION

// Load AWS Nitro Enclave's Private PKI root certificate

unsigned char * x509_root_ca = malloc(APP_X509_BUFF_LEN);

int x509_root_ca_len = read_file(x509_root_ca, argv[2], APP_X509_BUFF_LEN );

BIO * bio = BIO_new_mem_buf((void*)x509_root_ca, x509_root_ca_len);

X509 * caX509 = PEM_read_bio_X509(bio, NULL, NULL, NULL);

if (caX509 == NULL) {

fprintf(stderr, "%s\r\n", "ERROR: PEM_read_bio_X509 failed"); exit(1);

}

free(x509_root_ca); free(bio);

// Create CA_STORE

X509_STORE * ca_store = NULL;

ca_store = X509_STORE_new();

/* ADD X509_V_FLAG_NO_CHECK_TIME FOR TESTING! TODO REMOVE */

X509_STORE_set_flags (ca_store, X509_V_FLAG_NO_CHECK_TIME);

if (X509_STORE_add_cert(ca_store, caX509) != 1) {

fprintf(stderr, "%s\r\n", "ERROR: X509_STORE_add_cert failed"); exit(1);

}

// Add certificates to CA_STORE from cabundle

// Skip the first one [0] as that is the Root CA and we want to read it from an external source

for (int i = 1; i < cbor_array_size(ad_signed_item_pairs[5].value); ++i){

cbor_item_t * ad_cabundle = cbor_array_get(ad_signed_item_pairs[5].value, i);

size_t ad_cabundle_len = cbor_bytestring_length(ad_cabundle);

unsigned char * ad_cabundle_d = cbor_bytestring_handle(ad_cabundle);

X509 * cabnX509 = X509_new();

cabnX509 = d2i_X509(&cabnX509, (const unsigned char **)&ad_cabundle_d, ad_cabundle_len);

if (cabnX509 == NULL) {

fprintf(stderr, "%s\r\n", "ERROR: d2i_X509 failed"); exit(1);

}

if (X509_STORE_add_cert(ca_store, cabnX509) != 1) {

fprintf(stderr, "%s\r\n", "ERROR: X509_STORE_add_cert failed"); exit(1);

}

}

// Load certificate from attestation dcoument - this a certificate that we don't trust (yet)

size_t ad_signed_cert_len = cbor_bytestring_length(ad_signed_item_pairs[4].value);

unsigned char * ad_signed_cert_d = realloc(cbor_bytestring_handle(ad_signed_item_pairs[4].value), ad_signed_cert_len);

X509 * pX509 = X509_new();

pX509 = d2i_X509(&pX509, (const unsigned char **)&ad_signed_cert_d, ad_signed_cert_len);

if (pX509 == NULL) {

fprintf(stderr, "%s\r\n", "ERROR: d2i_X509 failed"); exit(1);

}

// Initialize X509 store context and veryfy untrusted certificate

STACK_OF(X509) * ca_stack = NULL;

X509_STORE_CTX * store_ctx = X509_STORE_CTX_new();

if (X509_STORE_CTX_init(store_ctx, ca_store, pX509, ca_stack) != 1) {

fprintf(stderr, "%s\r\n", "ERROR: X509_STORE_CTX_init failed"); exit(1);

}

if (X509_verify_cert(store_ctx) != 1) {

fprintf(stderr, "%s\r\n", "ERROR: X509_verify_cert failed"); exit(1);

}

fprintf(stdout, "%s\r\n", "OK: ########## Root of Trust Verified! ##########");

Having proof that the certificate was signed by the expected CA is just the beginning. I also want to make sure that the contents of the certificate are correct. This involves checking that the certificate has not expired, as well as making sure that the critical extensions contain correct information to name a few.

Cryptographic validation

The syntactic validation helped me determine that the attestation document has the right shape, and the sematic validation helped me determine if the document meets my business rules. However, I still don’t know for sure if the document is valid.

The attestation document contains critical information, such as PCRs and the AWS Identity Access and Management (IAM) role among other details. I can safely use these two values in my authentication or authorization workflows if I can prove that they are trustworthy.

The attestation document was signed using a private key that is never exposed. However, the corresponding public key is contained within the certificate that was issued and stored within the attestation document. I know I can trust the contents of this certificate because I have proof that the certificate was signed by an entity that I trust.

Here is an example where I cryptographically prove that all the protected contents of the attestation document are related to the public key contained in the certificate. To validate the COSE signature, I must first recreate the original message that was used during the signature operation – COSE uses a specific format. Then, I use OpenSSL to check if there is a match between the message, signature, and public key. If the signature checks, then I can trust the contents of the already semantically-verified payload.

// STEP 3 - CRYPTOGRAPHIC VALIDATION

#define SIG_STRUCTURE_BUFFER_S (1024*10)

// Create new empty key

EVP_PKEY * pkey = EVP_PKEY_new();

// Create a new eliptic curve object using P-384 curve

EC_KEY * ec_key = EC_KEY_new_by_curve_name(NID_secp384r1);

// Reference the public key stucture and eliptic curve object with each other

EVP_PKEY_assign_EC_KEY(pkey, ec_key);

// Load the public key from the attestation document (we trust it now)

pkey = X509_get_pubkey(pX509);

if (pkey == NULL) {

fprintf(stderr, "%s\r\n", "ERROR: X509_get_pubkey failed"); exit(1);

}

// Allocate, initialize and return a digest context

EVP_MD_CTX * ctx = EVP_MD_CTX_create();

// Set up verification context

if (EVP_DigestVerifyInit(ctx, NULL, EVP_sha384(), NULL, pkey) <= 0) {

fprintf(stderr, "%s\r\n", "ERROR: EVP_DigestVerifyInit failed"); exit(1);

}

// Recreate COSE_Sign1 structure, and serilise it into a buffer

cbor_item_t * cose_sig_arr = cbor_new_definite_array(4);

cbor_item_t * cose_sig_arr_0_sig1 = cbor_build_string("Signature1");

cbor_item_t * cose_sig_arr_2_empty = cbor_build_bytestring(NULL, 0);

assert(cbor_array_push(cose_sig_arr, cose_sig_arr_0_sig1));

assert(cbor_array_push(cose_sig_arr, ad_pheader));

assert(cbor_array_push(cose_sig_arr, cose_sig_arr_2_empty));

assert(cbor_array_push(cose_sig_arr, ad_signed));

unsigned char sig_struct_buffer[SIG_STRUCTURE_BUFFER_S];

size_t sig_struct_buffer_len = cbor_serialize(cose_sig_arr, sig_struct_buffer, SIG_STRUCTURE_BUFFER_S);

// Hash message and load it into the verificaiton context

if (EVP_DigestVerifyUpdate(ctx, sig_struct_buffer, sig_struct_buffer_len) <= 0) {

fprintf(stderr, "%s\r\n", "ERROR: nEVP_DigestVerifyUpdate failed"); exit(1);

}

// Create R and V BIGNUM structures

BIGNUM * sig_r = BN_new(); BIGNUM * sig_v = BN_new();

BN_bin2bn(ad_sig_d, 48, sig_r); BN_bin2bn(ad_sig_d + 48, 48, sig_v);

// Allocate an empty ECDSA_SIG structure

ECDSA_SIG * ec_sig = ECDSA_SIG_new();

// Set R and V values

ECDSA_SIG_set0(ec_sig, sig_r, sig_v);

// Convert R and V values into DER format

int sig_size = i2d_ECDSA_SIG(ec_sig, NULL);

unsigned char * sig_bytes = malloc(sig_size); unsigned char * p;

memset_s(sig_bytes,sig_size,0xFF, sig_size);

p = sig_bytes;

sig_size = i2d_ECDSA_SIG(ec_sig, &p);

// Verify the data in the context against the signature and get final result

if (EVP_DigestVerifyFinal(ctx, sig_bytes, sig_size) != 1) {

fprintf(stderr, "%s\r\n", "ERROR: EVP_DigestVerifyFinal failed"); exit(1);

} else {

fprintf(stdout, "%s\r\n", "OK: ########## Message Verified! ##########");

free(sig_bytes);

exit(0);

}

//#endif

exit(1);

}

Conclusion

In this post, I went through a detailed examination of attestation documents produced by the AWS Nitro Enclaves. Then, I went over different types of validations (syntactic, semantic, and cryptographic) that safely help determine if an attestation document should be trusted. I’ve also included access to a public repository that contains the source code used in this post. New AWS Nitro Enclaves users can use it as a starting point when looking to integrate their applications with AWS Nitro Enclaves and build highly secure and confidential solutions.