Post Syndicated from Rajiv Arora original https://aws.amazon.com/blogs/big-data/how-gilead-used-amazon-redshift-to-quickly-and-cost-effectively-load-third-party-medical-claims-data/

This post was co-written with Rajiv Arora, Director of Data Science Platform at Gilead Sciences.

Gilead Sciences, Inc. is a biopharmaceutical company committed to advancing innovative medicines to prevent and treat life-threatening diseases, including HIV, viral hepatitis, inflammation, and cancer. A leader in virology, Gilead historically relied on its antiviral drugs for growth. Now, through strategic investments, Gilead is increasing its focus on oncology, having acquired Kite and Immunomedics to boost its exposure to cell therapy and non-cell therapy, making oncology its primary growth engine. Because Gilead is expanding into biologics and large-molecule therapies and has an ambitious goal of launching 10 innovative therapies by 2030, there is heavy emphasis on using data with AI and machine learning (ML) to accelerate the drug discovery pipeline.

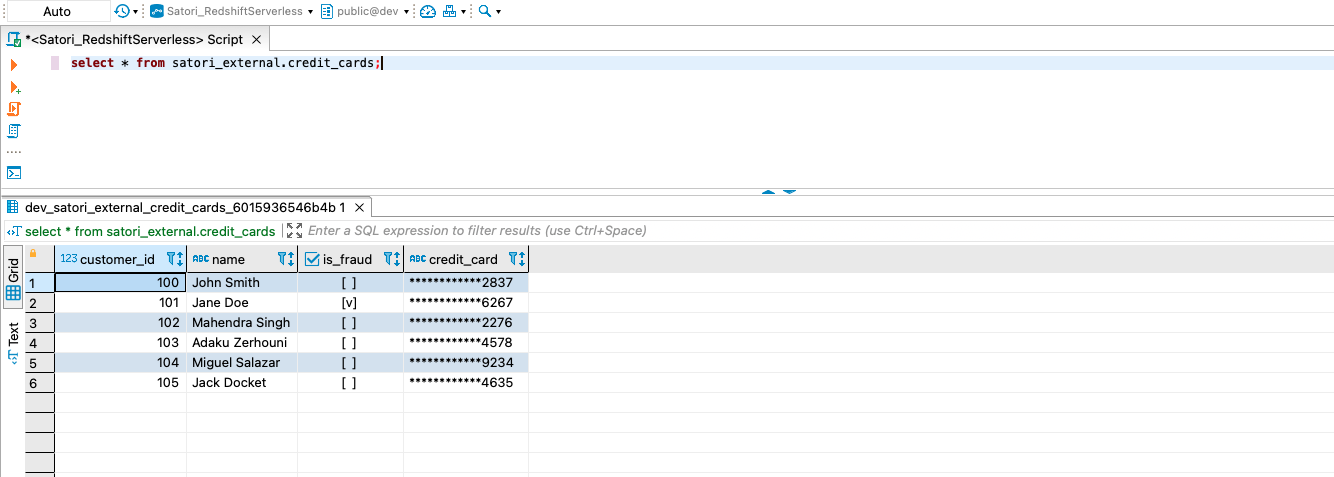

Amazon Redshift Serverless is a fully managed cloud data warehouse that allows you to seamlessly create your data warehouse with no infrastructure management required. You pay only for the compute resources and storage that you use. Redshift Serverless measures data warehouse capacity in Redshift Processing Units (RPUs), which are part of the compute resources. All of the data stored in your warehouse, such as tables, views, and users, make up a namespace in Redshift Serverless.

One of the benefits of Redshift Serverless is that you don’t need to size your data warehouse for your peak workload. The peak workload includes loading periodic large datasets in multi-terabyte range. You can set a base RPU from 8 up to 512 and Redshift Serverless will automatically scale the RPUs to meet your workload demands. This makes it straightforward to manage your data warehouse in a cost-effective manner.

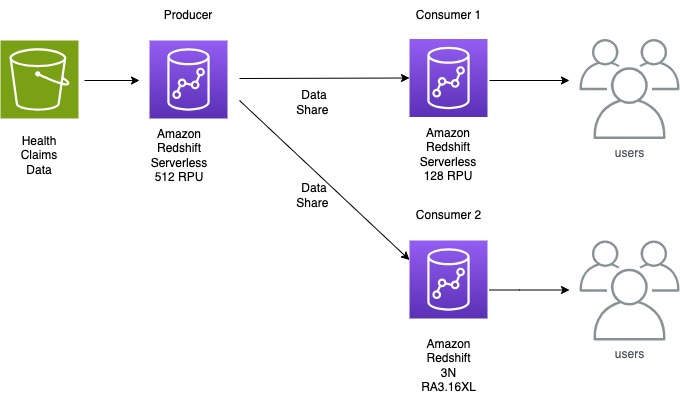

In this post, we share how Gilead collaborated with AWS to redesign their data ingestion process. They used Redshift Serverless as their data producer to load third-party medical claims data in a fast and cost-effective way, reducing load times from days to hours.

Gilead use case

Gilead loads a variety of data from hundreds of sources to their R&D data environment. They recently needed to do a monthly load of 140 TB of uncompressed healthcare claims data in under 24 hours after receiving it to provide analysts and data scientists with up-to-date information on a patient’s healthcare journey. This data volume is expected to increase monthly and is fully refreshed each month. The 3-node RA3 16XL provisioned cluster that had previously been hosting their warehouse was taking around 12 hours to ingest this data to Amazon Redshift, and Gilead was looking to optimize the data ingestion process in a more dynamic manner. Working with Amazon Redshift specialists from AWS, Gilead chose Redshift Serverless as a way to cost-effectively load this data and then use Redshift data sharing to share the final dataset to two additional Redshift data warehouses for end-user queries.

Loading data is a key process for any analytical system, including Amazon Redshift. When loading very large datasets, it’s important to not only load the data as quickly as possible but also in a way that optimizes the consumption queries.

Gilead’s healthcare claims data took 40 hours to load, which meant delays in using the data for downstream processes. The teams sought improvements, targeting a maximum 24-hour SLA for the load. They achieved the load in 8 hours, an 80% reduction in time to make data available.

Solution overview

After collaborating, the Gilead and AWS teams decided on a two-step process to load the data to Amazon Redshift. First, the data was loaded without a distkey and sortkey, which let the load process use the full parallel resources of the cluster. Then we used a deep copy to redistribute this data and add the desired distribution and sort characteristics.
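The second step of this process, the deep copy, can be sketched as a `CREATE TABLE ... AS` statement that rewrites the staging table with the desired keys. The snippet below is a minimal illustration, not Gilead's actual code: the table names (`claims_staging`, `claims`) and key columns (`patient_id`, `service_date`) are hypothetical placeholders.

```python
from typing import Sequence


def deep_copy_sql(staging: str, target: str, distkey: str, sortkeys: Sequence[str]) -> str:
    """Build a CTAS statement that copies `staging` into `target` with keys applied.

    The staging table is assumed to have been loaded without a distkey or
    sortkey; all identifiers passed in are illustrative placeholders.
    """
    sort_cols = ", ".join(sortkeys)
    return (
        f"CREATE TABLE {target}\n"
        f"DISTKEY ({distkey})\n"
        f"SORTKEY ({sort_cols})\n"
        f"AS SELECT * FROM {staging};"
    )


if __name__ == "__main__":
    # Hypothetical names, for illustration only.
    print(deep_copy_sql("claims_staging", "claims", "patient_id", ["service_date"]))
```

Keeping the keys out of the initial load and applying them in a single rewrite is what lets the load itself run without redistribution or sorting overhead.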

The solution uses Redshift Serverless. The team wanted to ingest data to meet the required SLA, and the following approaches were benchmarked:

- COPY command – The COPY command uses the Amazon Redshift massively parallel processing (MPP) architecture to read and load data in parallel from files on Amazon Simple Storage Service (Amazon S3).

- Data lake analytics – Amazon Redshift Spectrum is used to query data directly from files on Amazon S3, selecting a subset of columns and avoiding the intermediate step of copying data to a staging table.

Initial Solution approach: Single COPY command

The team determined it would be more effective to apply the distribution and sort keys in a post-copy step. The data was first loaded using automatic distribution. This took roughly 12 hours to complete. The team then created open and closed claims tables with defined distribution keys and with 20% of the columns to alleviate the need to query the larger table. With this success, we learned that we could still improve the big copy, as detailed in the following sections.

Proposed Solution approach 1: Parallel COPY command

Based on the initial solution approach above, the team tested yearly parallel copy commands as illustrated in the following diagram.

Below are the findings and learnings from this approach:

- Ingesting data for 4 years using parallel copy showed a 25% performance improvement over the single copy command.

- Compared to the initial solution approach, which took 12 hours to ingest the data, we further reduced the runtime by 67% by segregating the data ingestion into separate yearly staging tables and running parallel COPY commands.

- After the data was loaded into the yearly staging tables, we created the open and closed claims tables with an auto distkey and with the subset of columns required for larger reporting groups. This took an additional 1 hour.

The team used a manifest file to make sure that the COPY command loads all of the required files for the respective year for ingesting.
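As a rough sketch of how per-year manifests might be produced, the snippet below groups S3 object URLs by year and emits one manifest per year in the JSON shape that COPY expects (an `entries` list of `url`/`mandatory` pairs), along with a matching COPY statement. The `year=YYYY` path layout, bucket name, table names, and IAM role ARN are assumptions made for illustration; the post does not describe the actual file layout.

```python
import json
from collections import defaultdict
from typing import Dict, Iterable, List


def manifests_by_year(s3_urls: Iterable[str]) -> Dict[str, dict]:
    """Group S3 URLs by a `year=YYYY` path segment and build one manifest per year.

    The `year=YYYY` layout is a hypothetical convention; a URL without such a
    segment raises StopIteration in this sketch.
    """
    grouped: Dict[str, List[str]] = defaultdict(list)
    for url in s3_urls:
        year = next(p.split("=", 1)[1] for p in url.split("/") if p.startswith("year="))
        grouped[year].append(url)
    return {
        # "mandatory": True makes COPY fail if a listed file is missing.
        year: {"entries": [{"url": u, "mandatory": True} for u in sorted(urls)]}
        for year, urls in sorted(grouped.items())
    }


def copy_sql(table: str, manifest_url: str, iam_role: str) -> str:
    """Build a COPY statement that loads the files listed in a manifest."""
    # Data format options (for example DELIMITER or GZIP) are omitted because
    # the post does not say how the source files are encoded.
    return (
        f"COPY {table}\n"
        f"FROM '{manifest_url}'\n"
        f"IAM_ROLE '{iam_role}'\n"
        f"MANIFEST;"
    )


if __name__ == "__main__":
    urls = [
        "s3://example-bucket/claims/year=2020/part-0001.gz",
        "s3://example-bucket/claims/year=2021/part-0001.gz",
    ]
    for year, manifest in manifests_by_year(urls).items():
        print(json.dumps(manifest, indent=2))
        print(copy_sql(
            f"claims_{year}",
            f"s3://example-bucket/manifests/claims_{year}.manifest",
            "arn:aws:iam::123456789012:role/ExampleRedshiftRole",
        ))
```

Each generated COPY statement targets its own yearly staging table, so the statements can be submitted in separate sessions and run in parallel.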

Proposed Solution approach 2: Data Lake analytics

The team used this approach with Redshift Spectrum to load only the required columns to Redshift Serverless. This avoided loading data into multiple yearly tables and instead loaded it directly into a single table. The following diagram illustrates this approach.

The workflow consists of the following steps:

- Crawl the files using AWS Glue.

- Create a data lake external schema and table in Redshift Serverless.

- Create two separate claims tables for open and closed claims, because open claims are the most frequently consumed and are 20% of the columns and 100% of the data.

- Create open and closed tables with only the columns needed for optimal performance during consumption, instead of all columns in the original third-party dataset. The data volume distribution is as follows:

  - Total number of open claims records = 50 billion

  - Total number of closed claims records = 200 billion

  - Overall total number of records = 250 billion

- Distribute open and closed tables with a customer-identified distkey.

- Configure data ingestion into the open and closed claims tables combined using Redshift Serverless with 512 RPUs. This took 1.5 hours, a further 70% improvement compared to approach 1. We chose 512 RPUs in order to load data in the fastest way possible.

In this method, data ingestion was streamlined by loading only the essential fields from the medical claims dataset and by splitting the table into open and closed claims. Open claims data is the most frequently accessed and constitutes only 20% of the columns, so by splitting the tables the team improved not only ingestion performance but also consumption performance.
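The external schema and selective load steps of this workflow could look roughly like the following. This is a sketch under stated assumptions: the schema, AWS Glue database, table, and column names, the `claim_status` filter, and the IAM role ARN are all hypothetical, because the post does not show the actual statements.

```python
from typing import Sequence


def external_schema_sql(schema: str, glue_database: str, iam_role: str) -> str:
    """Build a statement that exposes an AWS Glue Data Catalog database as an external schema."""
    return (
        f"CREATE EXTERNAL SCHEMA IF NOT EXISTS {schema}\n"
        f"FROM DATA CATALOG DATABASE '{glue_database}'\n"
        f"IAM_ROLE '{iam_role}';"
    )


def selective_load_sql(
    target: str,
    external_table: str,
    columns: Sequence[str],
    distkey: str,
    predicate: str,
) -> str:
    """Build a CTAS that reads only the listed columns from the data lake table."""
    col_list = ", ".join(columns)
    return (
        f"CREATE TABLE {target}\n"
        f"DISTKEY ({distkey})\n"
        f"AS SELECT {col_list}\n"
        f"FROM {external_table}\n"
        f"WHERE {predicate};"
    )


if __name__ == "__main__":
    # All identifiers below are placeholders for illustration.
    print(external_schema_sql(
        "claims_lake", "claims_db", "arn:aws:iam::123456789012:role/ExampleSpectrumRole"
    ))
    print(selective_load_sql(
        "open_claims",
        "claims_lake.claims",
        ["patient_id", "claim_id", "service_date"],
        "patient_id",
        "claim_status = 'open'",
    ))
```

Because the column list is explicit, only the fields needed for consumption are read from Amazon S3, which is the source of the ingestion savings described above.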

Amazon Redshift recently launched automatic mounting of AWS Glue Data Catalog, making it easier to run data lake analytics without manually creating external schemas. You can query data lake tables directly from Amazon Redshift Query Editor v2 or your favorite SQL editors.

Recommendations and best practices

Consider the following recommendations when loading large-scale data in Amazon Redshift.

- Use Redshift Serverless with the maximum of 512 RPUs to load data quickly and efficiently.

- Depending on the consumption use case and query pattern, adopt either of the following approaches:

  - When consumption queries require only selected fields from the dataset and most frequently access a subset of data, use data lake queries to load only the relevant columns from Amazon S3 into Amazon Redshift.

  - When consumption queries require all fields, use COPY commands with a manifest file to ingest data in parallel into multiple logically separated tables and create a database view with UNION ALL of all tables.

- Avoid using VARCHAR(MAX) when creating tables; create VARCHAR columns with the right size.
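For the COPY-based recommendation, the view over the logically separated tables can be generated along these lines. The yearly table names and the view name are hypothetical, and the sketch assumes all tables share the same column layout.

```python
from typing import Sequence


def union_all_view_sql(view: str, tables: Sequence[str]) -> str:
    """Build a view that stitches identically structured tables together with UNION ALL."""
    if not tables:
        raise ValueError("at least one table is required")
    selects = "\nUNION ALL\n".join(f"SELECT * FROM {t}" for t in tables)
    return f"CREATE OR REPLACE VIEW {view} AS\n{selects};"


if __name__ == "__main__":
    # Placeholder names: one staging table per year, as in approach 1.
    print(union_all_view_sql("claims_all", ["claims_2020", "claims_2021", "claims_2022"]))
```

UNION ALL is used rather than UNION so that the view does not perform duplicate elimination across the yearly tables.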

Final Architecture

The following diagram shows the high-level final architecture that was implemented.

Conclusion

With the scalability of Redshift Serverless, data sharing to decouple ingestion from consumption workloads, and data lake analytics to ingest data, Gilead made their 140 TB dataset available to their analysts within hours of it being delivered. The innovative architecture of using a serverless ingestion data warehouse, a serverless consumption data warehouse for power users, and their original 3-node provisioned cluster for standard queries gives Gilead isolation to ensure data loads don’t affect their users. The architecture provides scalability to serve infrequent large queries with their serverless consumer along with the benefit of a fixed-cost and fixed-performance option of their provisioned cluster for their standard user queries. Due to the monthly schedule of the data load and the variable need for large queries by consumers, Redshift Serverless proved to be a cost-effective option compared to simply increasing the provisioned cluster to serve each of these use cases.

This split producer/consumer model of using Redshift Serverless can bring benefits to many workloads that have similar performance characteristics to Gilead’s warehouse. Customers often run large but infrequent data loads, and those processes compete with user queries. With this pattern, you can rely on your queries to perform consistently regardless of whether new data is being loaded to the system. This strikes a balance between minimizing cost and maintaining performance, and frees system administrators to load data without affecting users.
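To make the producer/consumer split concrete, the following sketch generates the kind of data sharing statements a producer warehouse and a consumer warehouse would run. The datashare, schema, table, and database names and the namespace IDs are placeholders; the post does not list the statements Gilead used.

```python
from typing import List, Sequence


def producer_datashare_sql(
    share: str,
    schema: str,
    tables: Sequence[str],
    consumer_namespaces: Sequence[str],
) -> List[str]:
    """Statements run on the producer (ingestion) warehouse to publish tables."""
    stmts = [
        f"CREATE DATASHARE {share};",
        f"ALTER DATASHARE {share} ADD SCHEMA {schema};",
    ]
    stmts += [f"ALTER DATASHARE {share} ADD TABLE {schema}.{t};" for t in tables]
    stmts += [
        f"GRANT USAGE ON DATASHARE {share} TO NAMESPACE '{ns}';"
        for ns in consumer_namespaces
    ]
    return stmts


def consumer_database_sql(database: str, share: str, producer_namespace: str) -> str:
    """Statement run on each consumer warehouse to mount the shared data."""
    return (
        f"CREATE DATABASE {database} "
        f"FROM DATASHARE {share} OF NAMESPACE '{producer_namespace}';"
    )


if __name__ == "__main__":
    # Namespace IDs are made-up placeholders.
    for stmt in producer_datashare_sql(
        "claims_share",
        "public",
        ["open_claims", "closed_claims"],
        ["consumer-namespace-id-1", "consumer-namespace-id-2"],
    ):
        print(stmt)
    print(consumer_database_sql("claims_shared", "claims_share", "producer-namespace-id"))
```

Consumers query the shared tables in place, so the monthly load on the producer does not require copying the dataset to each consumer warehouse.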

About the Authors

Rajiv Arora is a Director of Clinical Data Science at Gilead Sciences with over 20 years of experience in the industry. He is responsible for the multi-modal data platform for the development organization and supports all statistical and predictive analytical infrastructure for RWE and Advanced Analytical functions.

Ritesh Kumar Sinha is an Analytics Specialist Solutions Architect based out of San Francisco. He has helped customers build scalable data warehousing and big data solutions for over 16 years. He loves to design and build efficient end-to-end solutions on AWS. In his spare time, he loves reading, walking, and doing yoga.

Raks Khare is an Analytics Specialist Solutions Architect at AWS based out of Pennsylvania. He helps customers architect data analytics solutions at scale on the AWS platform.

Brent Strong is a Senior Solutions Architect in the Healthcare and Life Sciences team at AWS. He has more than 15 years of experience in the industry, focusing on data and analytics and DevOps. At AWS, he works closely with large Life Sciences customers to help them deliver new and innovative treatments.

Phil Bates is a Senior Analytics Specialist Solutions Architect at AWS with over 25 years of data warehouse experience.

Mukul Sharma is a Software Development Engineer on Data & Analytics (DnA) organization at GoDaddy. He is a polyglot programmer with experience in a wide array of technologies to rapidly deliver scalable solutions. He enjoys singing karaoke, playing various board games, and working on personal programming projects in his spare time.

Mukul Sharma is a Software Development Engineer on Data & Analytics (DnA) organization at GoDaddy. He is a polyglot programmer with experience in a wide array of technologies to rapidly deliver scalable solutions. He enjoys singing karaoke, playing various board games, and working on personal programming projects in his spare time. Ozcan Ilikhan is a Director of Engineering on Data & Analytics (DnA) organization at GoDaddy. He is passionate about solving customer problems and increasing efficiency using data and ML/AI. In his spare time, he loves reading, hiking, gardening, and working on DIY projects.

Ozcan Ilikhan is a Director of Engineering on Data & Analytics (DnA) organization at GoDaddy. He is passionate about solving customer problems and increasing efficiency using data and ML/AI. In his spare time, he loves reading, hiking, gardening, and working on DIY projects. Harsh Vardhan Singh Gaur is an AWS Solutions Architect, specializing in analytics. He has over 6 years of experience working in the field of big data and data science. He is passionate about helping customers adopt best practices and discover insights from their data.

Harsh Vardhan Singh Gaur is an AWS Solutions Architect, specializing in analytics. He has over 6 years of experience working in the field of big data and data science. He is passionate about helping customers adopt best practices and discover insights from their data. Ramesh Kumar Venkatraman is a Senior Solutions Architect at AWS who is passionate about containers and databases. He works with AWS customers to design, deploy, and manage their AWS workloads and architectures. In his spare time, he loves to play with his two kids and follows cricket.

Ramesh Kumar Venkatraman is a Senior Solutions Architect at AWS who is passionate about containers and databases. He works with AWS customers to design, deploy, and manage their AWS workloads and architectures. In his spare time, he loves to play with his two kids and follows cricket.

Deepak Singh is a Senior Solutions Architect at Amazon Web Services with 20+ years of experience in Data & AIA. He enjoys working with AWS partners and customers on building scalable analytical solutions for their business outcomes. When not at work, he loves spending time with family or exploring new technologies in analytics and AI space.

Deepak Singh is a Senior Solutions Architect at Amazon Web Services with 20+ years of experience in Data & AIA. He enjoys working with AWS partners and customers on building scalable analytical solutions for their business outcomes. When not at work, he loves spending time with family or exploring new technologies in analytics and AI space. Piyush Patra is a Partner Solutions Architect at Amazon Web Services where he supports partners with their Analytics journeys and is the global lead for strategic Data Estate Modernization and Migration partner programs.

Piyush Patra is a Partner Solutions Architect at Amazon Web Services where he supports partners with their Analytics journeys and is the global lead for strategic Data Estate Modernization and Migration partner programs. Govind Mohan is an Associate Director with Cognizant with over 18 year of experience in data and analytics space, he has helped design and implement multiple large-scale data migration, application lift & shift and legacy modernization projects and works closely with customers in accelerating the cloud modernization journey leveraging Cognizant Data and Intelligence Toolkit (CDIT) platform.

Govind Mohan is an Associate Director with Cognizant with over 18 year of experience in data and analytics space, he has helped design and implement multiple large-scale data migration, application lift & shift and legacy modernization projects and works closely with customers in accelerating the cloud modernization journey leveraging Cognizant Data and Intelligence Toolkit (CDIT) platform. Kausik Dhar is a technology leader having more than 23 years of IT experience – primarily focused on Data & Analytics, Data Modernization, Application Development, Delivery Management, and Solution Architecture. He has played a pivotal role in guiding clients through the designing and executing large-scale data and process migrations, in addition to spearheading successful cloud implementations. Kausik possesses expertise in formulating migration strategies for complex programs and adeptly constructing data lake/Lakehouse architecture employing a wide array of tools and technologies.

Kausik Dhar is a technology leader having more than 23 years of IT experience – primarily focused on Data & Analytics, Data Modernization, Application Development, Delivery Management, and Solution Architecture. He has played a pivotal role in guiding clients through the designing and executing large-scale data and process migrations, in addition to spearheading successful cloud implementations. Kausik possesses expertise in formulating migration strategies for complex programs and adeptly constructing data lake/Lakehouse architecture employing a wide array of tools and technologies.

Rahul Nammireddy is a Senior Solutions Architect at AWS, focusses on guiding digital native customers through their cloud native transformation. With a passion for AI/ML technologies, he works with customers in industries such as retail and telecom, helping them innovate at a rapid pace. Throughout his 23+ years career, Rahul has held key technical leadership roles in a diverse range of companies, from startups to publicly listed organizations, showcasing his expertise as a builder and driving innovation. In his spare time, he enjoys watching football and playing cricket.

Rahul Nammireddy is a Senior Solutions Architect at AWS, focusses on guiding digital native customers through their cloud native transformation. With a passion for AI/ML technologies, he works with customers in industries such as retail and telecom, helping them innovate at a rapid pace. Throughout his 23+ years career, Rahul has held key technical leadership roles in a diverse range of companies, from startups to publicly listed organizations, showcasing his expertise as a builder and driving innovation. In his spare time, he enjoys watching football and playing cricket. Todd McGrath is a data streaming specialist at Amazon Web Services where he advises customers on their streaming strategies, integration, architecture, and solutions. On the personal side, he enjoys watching and supporting his 3 teenagers in their preferred activities as well as following his own pursuits such as fishing, pickleball, ice hockey, and happy hour with friends and family on pontoon boats. Connect with him on

Todd McGrath is a data streaming specialist at Amazon Web Services where he advises customers on their streaming strategies, integration, architecture, and solutions. On the personal side, he enjoys watching and supporting his 3 teenagers in their preferred activities as well as following his own pursuits such as fishing, pickleball, ice hockey, and happy hour with friends and family on pontoon boats. Connect with him on

Khandu Shinde is a Staff Engineer focused on Big Data Platforms and Solutions for Chime. He helps to make the platform scalable for Chime’s business needs with architectural direction and vision. He’s based in San Francisco where he plays cricket and watches movies.

Khandu Shinde is a Staff Engineer focused on Big Data Platforms and Solutions for Chime. He helps to make the platform scalable for Chime’s business needs with architectural direction and vision. He’s based in San Francisco where he plays cricket and watches movies. Edward Paget is a Software Engineer working on building Chime’s capabilities to mitigate risk to ensure our members’ financial peace of mind. He enjoys being at the intersection of big data and programming language theory. He’s based in Chicago where he spends his time running along the lake shore.

Edward Paget is a Software Engineer working on building Chime’s capabilities to mitigate risk to ensure our members’ financial peace of mind. He enjoys being at the intersection of big data and programming language theory. He’s based in Chicago where he spends his time running along the lake shore. Dylan Qu is a Specialist Solutions Architect focused on Big Data & Analytics with Amazon Web Services. He helps customers architect and build highly scalable, performant, and secure cloud-based solutions on AWS.

Dylan Qu is a Specialist Solutions Architect focused on Big Data & Analytics with Amazon Web Services. He helps customers architect and build highly scalable, performant, and secure cloud-based solutions on AWS.

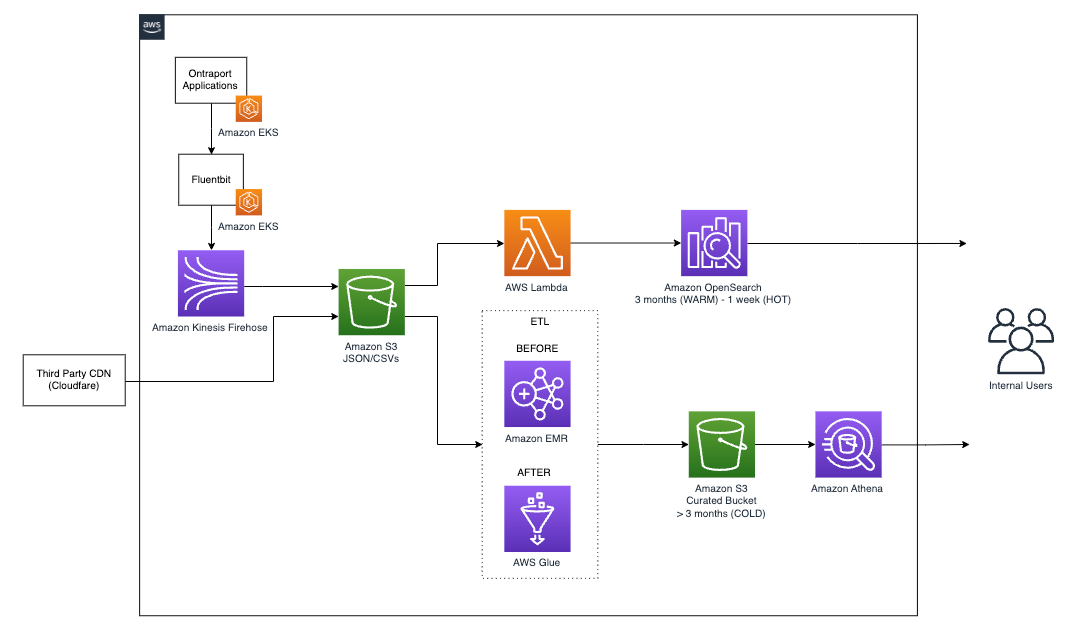

Elijah Ball has been a Sys Admin at Ontraport for 12 years. He is currently working to move Ontraport’s production workloads to AWS and develop data analysis strategies for Ontraport.

Elijah Ball has been a Sys Admin at Ontraport for 12 years. He is currently working to move Ontraport’s production workloads to AWS and develop data analysis strategies for Ontraport. Pablo Redondo is a Principal Solutions Architect at Amazon Web Services. He is a data enthusiast with over 16 years of FinTech and healthcare industry experience and is a member of the AWS Analytics Technical Field Community (TFC). Pablo has been leading the AWS Gain Insights Program to help AWS customers achieve better insights and tangible business value from their data analytics initiatives.

Pablo Redondo is a Principal Solutions Architect at Amazon Web Services. He is a data enthusiast with over 16 years of FinTech and healthcare industry experience and is a member of the AWS Analytics Technical Field Community (TFC). Pablo has been leading the AWS Gain Insights Program to help AWS customers achieve better insights and tangible business value from their data analytics initiatives. Vikram Honmurgi is a Customer Solutions Manager at Amazon Web Services. With over 15 years of software delivery experience, Vikram is passionate about assisting customers and accelerating their cloud journey, delivering frictionless migrations, and ensuring our customers capture the full potential and sustainable business advantages of migrating to the AWS Cloud.

Vikram Honmurgi is a Customer Solutions Manager at Amazon Web Services. With over 15 years of software delivery experience, Vikram is passionate about assisting customers and accelerating their cloud journey, delivering frictionless migrations, and ensuring our customers capture the full potential and sustainable business advantages of migrating to the AWS Cloud.

Thomas Burns,

Thomas Burns,  Aileen Zheng is a Solutions Architect supporting US Federal Civilian Sciences customers at Amazon Web Services (AWS). She partners with customers to provide technical guidance on enterprise cloud adoption and strategy and helps with building well-architected solutions. She is also very passionate about data analytics and machine learning. In her free time, you’ll find Aileen doing pilates, taking her dog Mumu out for a hike, or hunting down another good spot for food! You’ll also see her contributing to projects to support diversity and women in technology.

Aileen Zheng is a Solutions Architect supporting US Federal Civilian Sciences customers at Amazon Web Services (AWS). She partners with customers to provide technical guidance on enterprise cloud adoption and strategy and helps with building well-architected solutions. She is also very passionate about data analytics and machine learning. In her free time, you’ll find Aileen doing pilates, taking her dog Mumu out for a hike, or hunting down another good spot for food! You’ll also see her contributing to projects to support diversity and women in technology.

Rupesh Tiwari is a Senior Solutions Architect at AWS in New York City, with a focus on Financial Services. He has over 18 years of IT experience in the finance, insurance, and education domains, and specializes in architecting large-scale applications and cloud-native big data workloads. In his spare time, Rupesh enjoys singing karaoke, watching comedy TV series, and creating joyful moments with his family.

Rupesh Tiwari is a Senior Solutions Architect at AWS in New York City, with a focus on Financial Services. He has over 18 years of IT experience in the finance, insurance, and education domains, and specializes in architecting large-scale applications and cloud-native big data workloads. In his spare time, Rupesh enjoys singing karaoke, watching comedy TV series, and creating joyful moments with his family. Sheel Saket is a Senior Data Science Manager at FIS in Chicago, Illinois. He has over 11 years of IT experience in the finance, insurance, and e-commerce domains, and specializes in architecting large-scale AI solutions and cloud MLOps. In his spare time, Sheel enjoys listening to audiobooks, podcasts, and watching movies with his family.

Sheel Saket is a Senior Data Science Manager at FIS in Chicago, Illinois. He has over 11 years of IT experience in the finance, insurance, and e-commerce domains, and specializes in architecting large-scale AI solutions and cloud MLOps. In his spare time, Sheel enjoys listening to audiobooks, podcasts, and watching movies with his family.

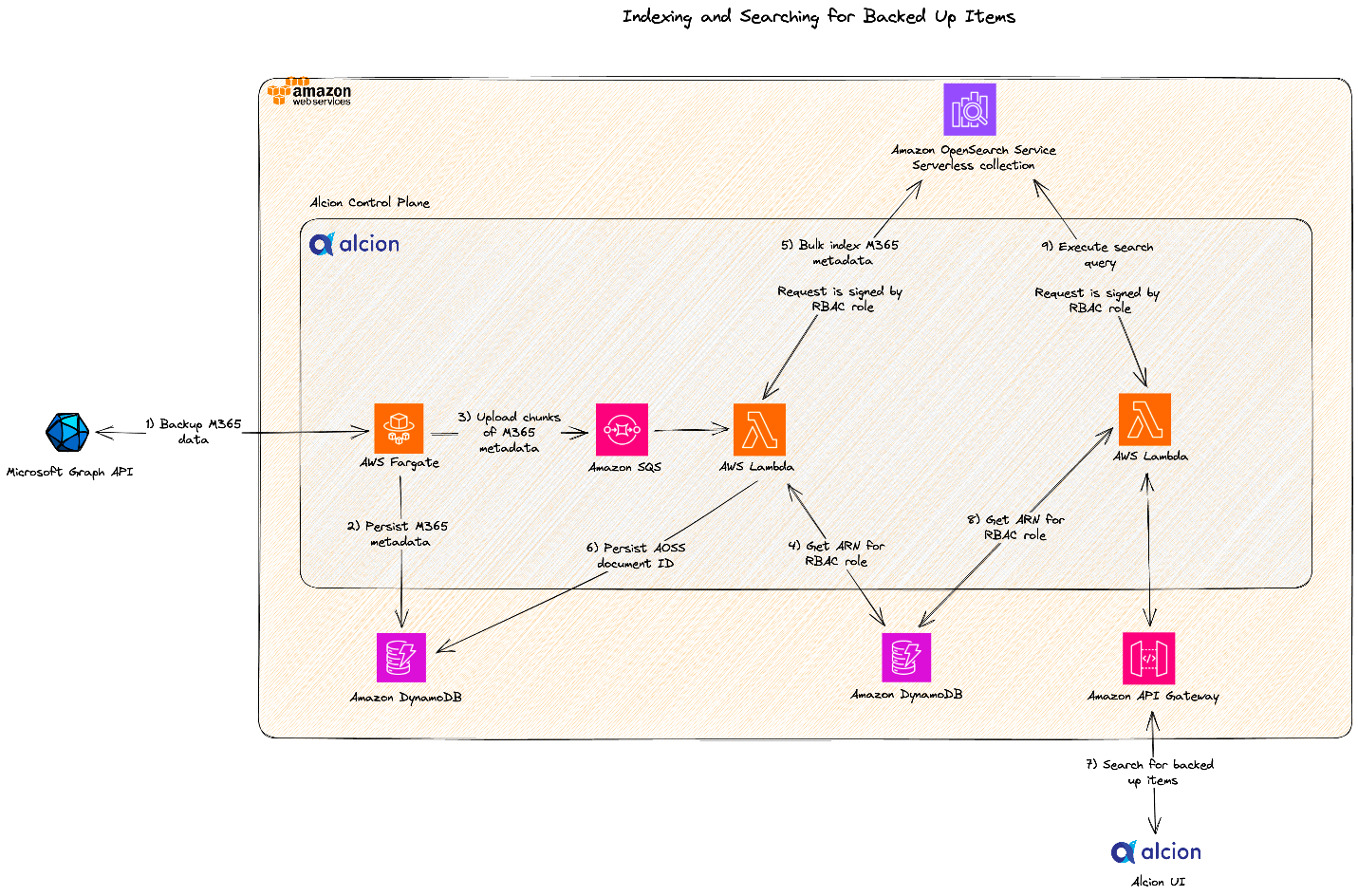

Zack Rossman is a Member of Technical Staff at Alcion. He is the tech lead for the search and AI platforms. Prior to Alcion, Zack was a Senior Software Engineer at Okta, developing core workforce identity and access management products for the Directories team.

Zack Rossman is a Member of Technical Staff at Alcion. He is the tech lead for the search and AI platforms. Prior to Alcion, Zack was a Senior Software Engineer at Okta, developing core workforce identity and access management products for the Directories team. Niraj Jetly is a Software Development Manager for Amazon OpenSearch Serverless. Niraj leads several data plane teams responsible for launching Amazon OpenSearch Serverless. Prior to AWS, Niraj led several product and engineering teams as CTO, VP of Engineering, and Head of Product Management for over 15 years. Niraj is a recipient of over 15 innovation awards, including being named CIO of the year in 2014 and top 100 CIO in 2013 and 2016. A frequent speaker at several conferences, he has been quoted in NPR, WSJ, and The Boston Globe.

Niraj Jetly is a Software Development Manager for Amazon OpenSearch Serverless. Niraj leads several data plane teams responsible for launching Amazon OpenSearch Serverless. Prior to AWS, Niraj led several product and engineering teams as CTO, VP of Engineering, and Head of Product Management for over 15 years. Niraj is a recipient of over 15 innovation awards, including being named CIO of the year in 2014 and top 100 CIO in 2013 and 2016. A frequent speaker at several conferences, he has been quoted in NPR, WSJ, and The Boston Globe. Jon Handler is a Senior Principal Solutions Architect at Amazon Web Services based in Palo Alto, CA. Jon works closely with OpenSearch and Amazon OpenSearch Service, providing help and guidance to a broad range of customers who have search and log analytics workloads that they want to move to the AWS Cloud. Prior to joining AWS, Jon’s career as a software developer included 4 years of coding a large-scale, ecommerce search engine. Jon holds a Bachelor of the Arts from the University of Pennsylvania and a Master of Science and a PhD in Computer Science and Artificial Intelligence from Northwestern University.

Jon Handler is a Senior Principal Solutions Architect at Amazon Web Services based in Palo Alto, CA. Jon works closely with OpenSearch and Amazon OpenSearch Service, providing help and guidance to a broad range of customers who have search and log analytics workloads that they want to move to the AWS Cloud. Prior to joining AWS, Jon’s career as a software developer included 4 years of coding a large-scale, ecommerce search engine. Jon holds a Bachelor of the Arts from the University of Pennsylvania and a Master of Science and a PhD in Computer Science and Artificial Intelligence from Northwestern University.

Tamara Astakhova is a Sr. Partner Solutions Architect in Data and Analytics at AWS. She has over 18 years of experience in the architecture and development of large-scale data analytics systems. Tamara is working with strategic partners helping them build complex AWS-optimized architectures.

Tamara Astakhova is a Sr. Partner Solutions Architect in Data and Analytics at AWS. She has over 18 years of experience in the architecture and development of large-scale data analytics systems. Tamara is working with strategic partners helping them build complex AWS-optimized architectures. Cameron Davie is a Principal Solutions Engineer for the Tech Alliances team. He oversees the technical responsibilities of Talend’s most strategic ISV partnerships. Cameron has been with Talend for 6 years in this role, working directly as the primary technical resource for partners such as AWS, Snowflake, and more. Cameron’s role at Talend is primarily focused on technical enablement and evangelism. This includes showcasing key capabilities of our partners’ solution internally as well as demonstrating Talend’s core technical capabilities with the technical sellers at Talend’s strategic ISV partners. Cameron is a veteran of ISV partnerships and enterprise software, with over 23 years of experience. Before Talend, he spent 14 years at SAP on their OEM/Embedded Solutions partnership team.

Cameron Davie is a Principal Solutions Engineer for the Tech Alliances team. He oversees the technical responsibilities of Talend’s most strategic ISV partnerships. Cameron has been with Talend for 6 years in this role, working directly as the primary technical resource for partners such as AWS, Snowflake, and more. Cameron’s role at Talend is primarily focused on technical enablement and evangelism. This includes showcasing key capabilities of our partners’ solution internally as well as demonstrating Talend’s core technical capabilities with the technical sellers at Talend’s strategic ISV partners. Cameron is a veteran of ISV partnerships and enterprise software, with over 23 years of experience. Before Talend, he spent 14 years at SAP on their OEM/Embedded Solutions partnership team.

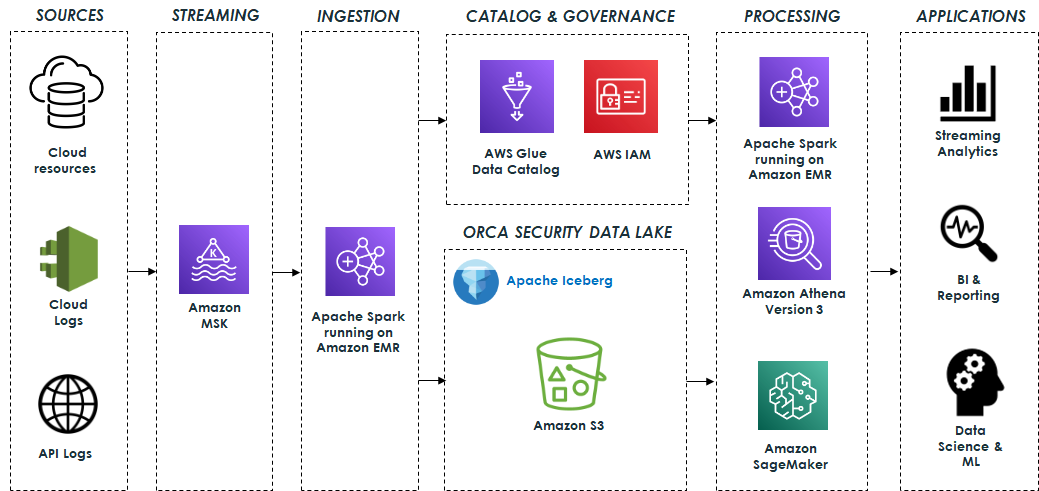

Eliad Gat is a Big Data & AI/ML Architect at Orca Security. He has over 15 years of experience designing and building large-scale cloud-native distributed systems, specializing in big data, analytics, AI, and machine learning.

Eliad Gat is a Big Data & AI/ML Architect at Orca Security. He has over 15 years of experience designing and building large-scale cloud-native distributed systems, specializing in big data, analytics, AI, and machine learning. Oded Lifshiz is a Principal Software Engineer at Orca Security. He enjoys combining his passion for delivering innovative, data-driven solutions with his expertise in designing and building large-scale machine learning pipelines.

Oded Lifshiz is a Principal Software Engineer at Orca Security. He enjoys combining his passion for delivering innovative, data-driven solutions with his expertise in designing and building large-scale machine learning pipelines. Yonatan Dolan is a Principal Analytics Specialist at Amazon Web Services. He is located in Israel and helps customers harness AWS analytical services to leverage data, gain insights, and derive value. Yonatan also leads the Apache Iceberg Israel community.

Yonatan Dolan is a Principal Analytics Specialist at Amazon Web Services. He is located in Israel and helps customers harness AWS analytical services to leverage data, gain insights, and derive value. Yonatan also leads the Apache Iceberg Israel community. Carlos Rodrigues is a Big Data Specialist Solutions Architect at Amazon Web Services. He helps customers worldwide build transactional data lakes on AWS using open table formats like Apache Hudi and Apache Iceberg.

Carlos Rodrigues is a Big Data Specialist Solutions Architect at Amazon Web Services. He helps customers worldwide build transactional data lakes on AWS using open table formats like Apache Hudi and Apache Iceberg. Sofia Zilberman is a Sr. Analytics Specialist Solutions Architect at Amazon Web Services. She has a track record of 15 years of creating large-scale, distributed processing systems. She remains passionate about big data technologies and architecture trends, and is constantly on the lookout for functional and technological innovations.

Sofia Zilberman is a Sr. Analytics Specialist Solutions Architect at Amazon Web Services. She has a track record of 15 years of creating large-scale, distributed processing systems. She remains passionate about big data technologies and architecture trends, and is constantly on the lookout for functional and technological innovations.