Post Syndicated from Mahmoud Matouk original https://aws.amazon.com/blogs/security/protect-public-clients-for-amazon-cognito-by-using-an-amazon-cloudfront-proxy/

In Amazon Cognito user pools, an app client is an entity that has permission to call unauthenticated API operations (that is, operations that don’t have an authenticated user), such as operations to sign up, sign in, and handle forgotten passwords. In this post, I show you a solution designed to protect these API operations from unwanted bots and distributed denial of service (DDoS) attacks.

To protect Amazon Cognito services and customers, Amazon Cognito applies request rate quotas on all API categories, and throttles rapid calls that exceed the assigned quota. For that reason, you must ensure your applications control who can call unauthenticated API operations and at what rate, so that user calls aren’t throttled because of unwanted or misconfigured clients that call these API operations at high rates.

App clients fall into one of two categories: public clients (used from web or mobile applications) and private or confidential clients (used from a secured backend). Public clients shouldn’t have secrets, because it isn’t possible to protect secrets in these types of clients. Confidential clients, on the other hand, use a secret to authorize calls to unauthenticated operations. In these clients, the secret can be protected in the backend.

The benefit of using a confidential app client with a secret in Amazon Cognito is that unauthenticated API operations will accept only the calls that include the secret hash for this client, and will drop calls with an invalid or missing secret. In this way, you control who calls these API operations. Public applications can use a confidential app client by implementing a lightweight proxy layer in front of the Amazon Cognito endpoint, and then using this proxy to add a secret hash in relevant requests before passing the requests to Amazon Cognito.

There are multiple options that you can use to implement this proxy. One option is to use Amazon CloudFront and Lambda@Edge to add the secret hash to the incoming requests. When you use a CloudFront proxy, you can also use AWS WAF, which gives you tools to detect and block unwanted clients. From Lambda@Edge, you can also integrate with other services (like Amazon Fraud Detector or third-party bot detection services) to help you detect possible fraudulent requests and block them. The CloudFront proxy, with the right set of security tools, helps protect your Amazon Cognito user pool from unwanted clients.

Solution overview

To implement this lightweight proxy pattern, you need to create an application client with a secret. Unauthenticated API calls to this client must include the secret hash, which the proxy layer adds to the request. Client applications use an SDK like AWS Amplify, the Amazon Cognito Identity SDK, or a mobile SDK to communicate with Amazon Cognito. By default, the SDK sends requests to the Regional Amazon Cognito endpoint. Your application must override the default endpoint by manually adding an “Endpoint” property in the app configuration. See the Integrate the client application with the proxy section later in this post for more details.

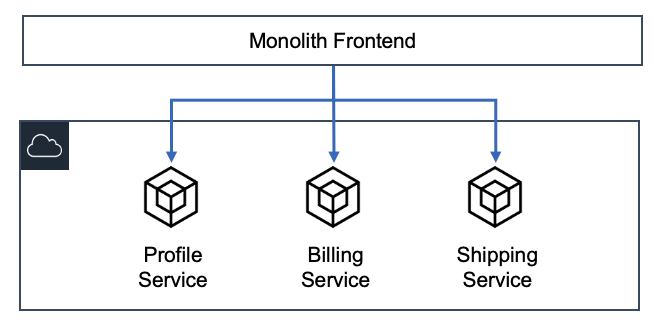

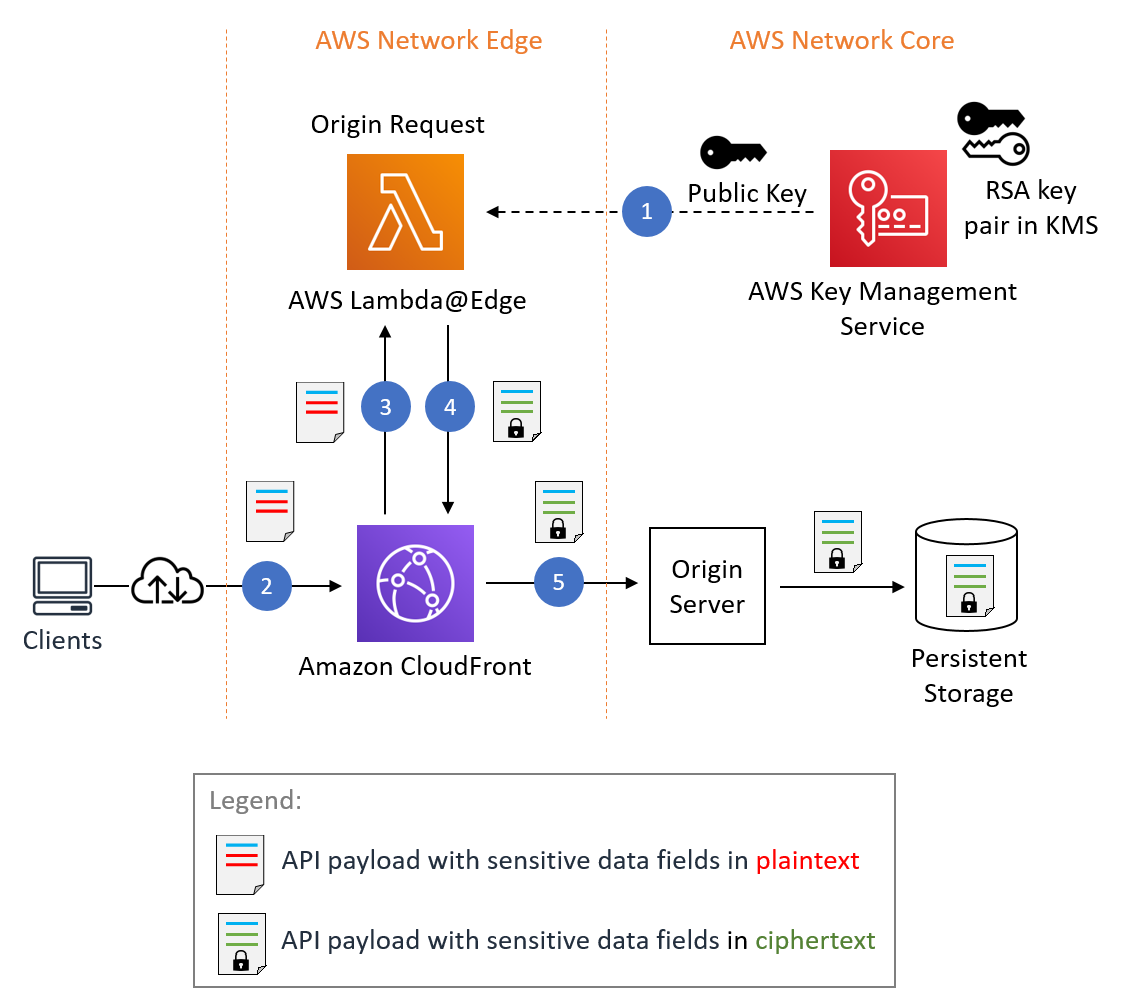

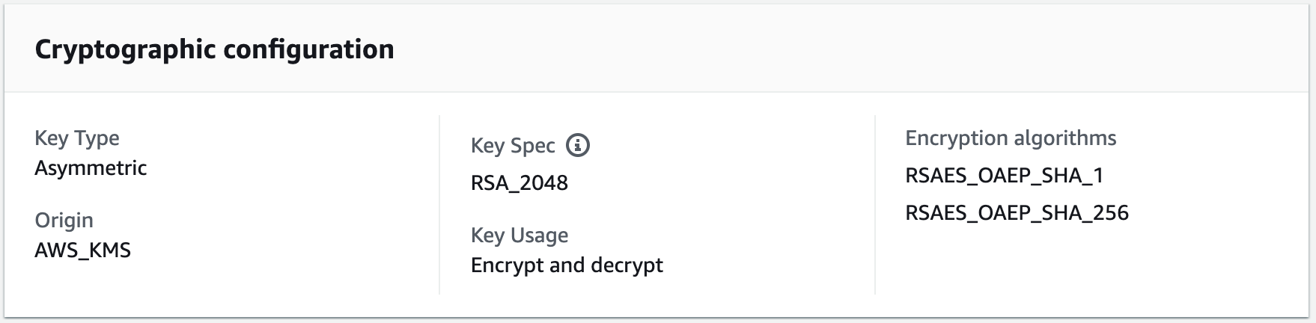

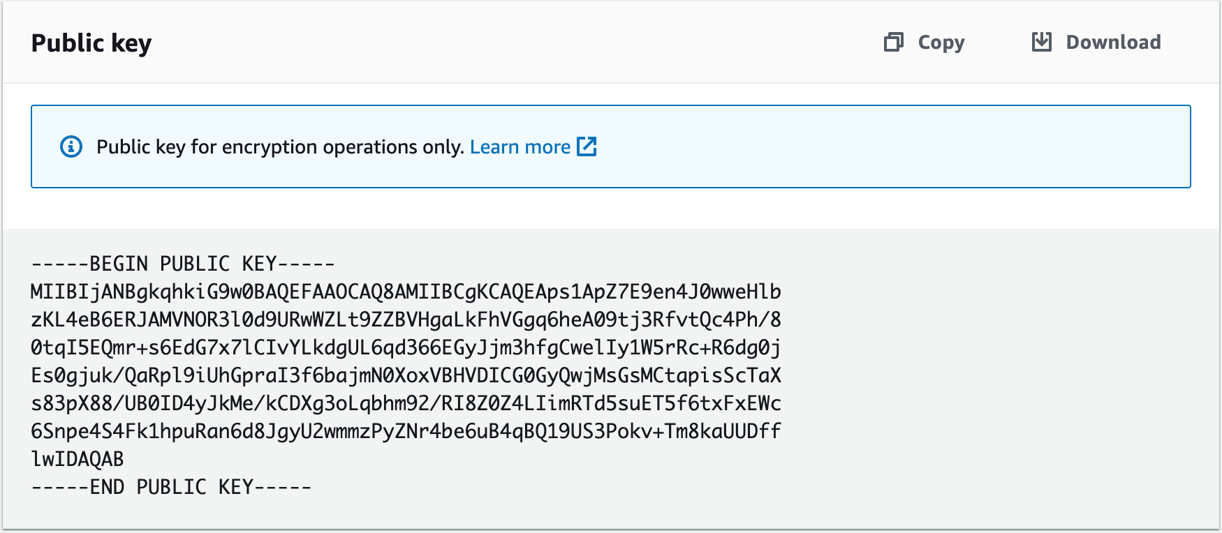

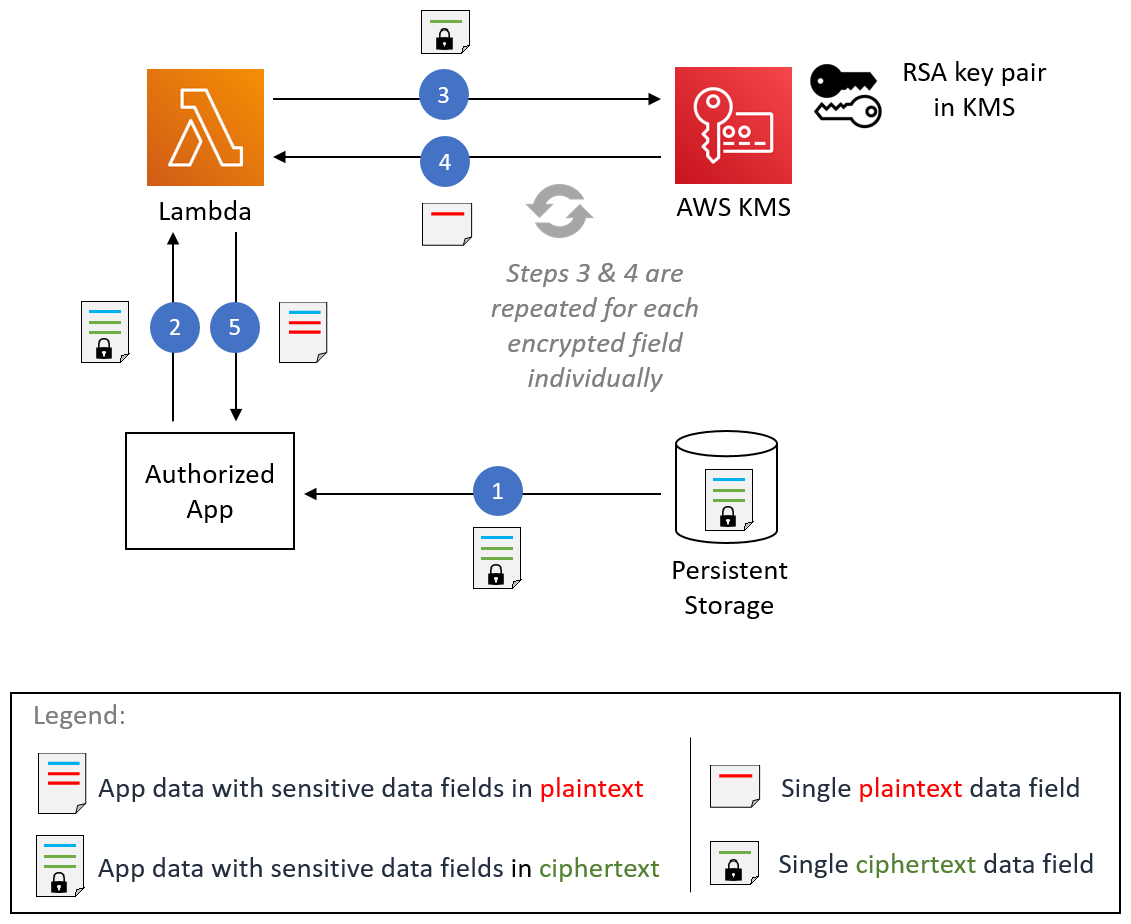

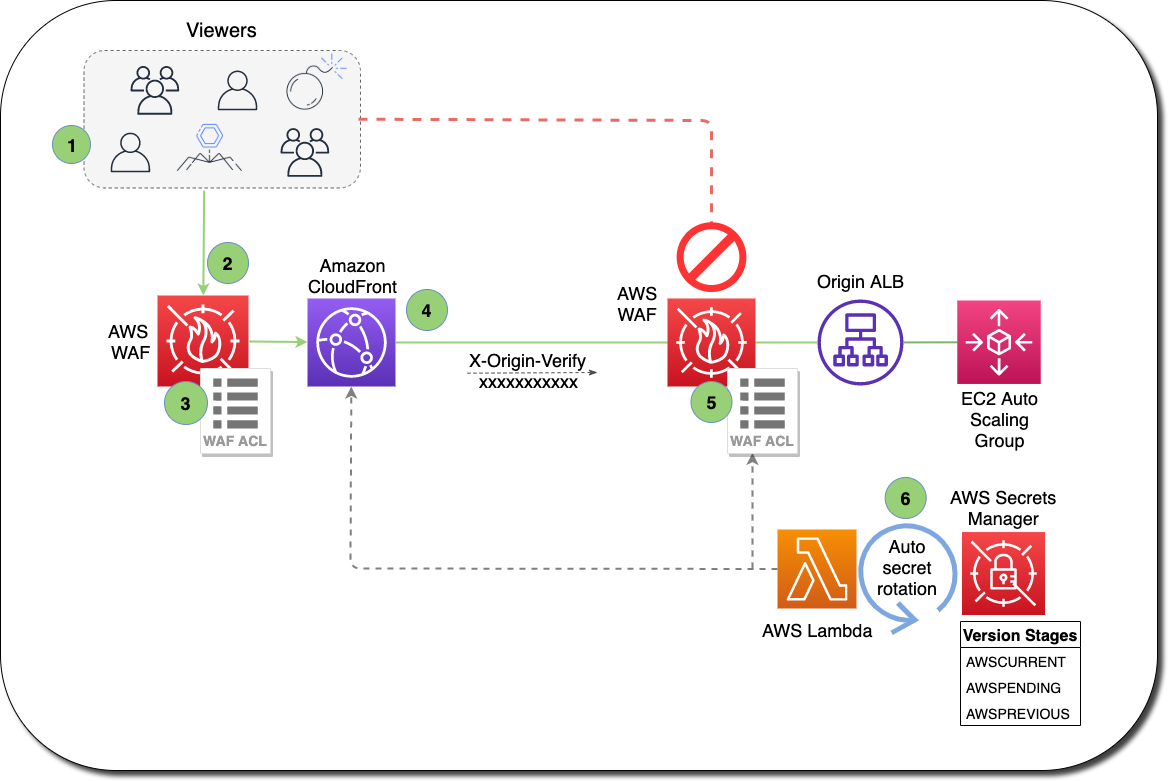

Figure 1 shows how this works, step by step.

Figure 1: A proxy solution to the Amazon Cognito Regional endpoint

The workflow is as follows:

- You configure the client application (mobile or web client) to use a CloudFront endpoint as a proxy to an Amazon Cognito Regional endpoint. You also create an application client in Amazon Cognito with a secret. This means that any unauthenticated API call must have the secret hash.

- Clients that send unauthenticated API calls to the Amazon Cognito endpoint directly are blocked and dropped because of the missing secret.

- You use Lambda@Edge to add a secret hash to the relevant incoming requests before passing them on to the Amazon Cognito endpoint.

- From Lambda@Edge, you must have the app client secret to be able to calculate the secret hash and add it to the request. It’s recommended that you keep the secret in AWS Secrets Manager and cache it for the lifetime of the function.

- You use AWS WAF with the CloudFront distribution to enforce rate limiting, allow and deny lists, and other rule groups according to your security requirements.
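As a rough illustration of the secret hash mentioned in the workflow above, the following sketch computes it in Node.js. Amazon Cognito documents the hash as a Base64-encoded HMAC-SHA256 of the user name concatenated with the app client ID, keyed with the app client secret. The function name and all sample values here are hypothetical placeholders.

```javascript
const { createHmac } = require("node:crypto");

// SECRET_HASH = Base64(HMAC-SHA256(key = client secret, message = username + client ID))
function computeSecretHash(username, clientId, clientSecret) {
  return createHmac("sha256", clientSecret)
    .update(username + clientId)
    .digest("base64");
}

// Placeholder values for illustration only.
const hash = computeSecretHash("jane@example.com", "exampleappclientid", "example-secret");
const wrongHash = computeSecretHash("jane@example.com", "exampleappclientid", "guessed-secret");

console.log(hash);
console.log(hash === wrongHash); // a caller without the real secret cannot produce the same hash
```

Because the hash depends on the secret, a client that bypasses the proxy and calls the Regional endpoint directly has no way to produce a valid value.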

When to use this pattern

It’s a best practice to use this proxy pattern with clients that use SDKs to integrate with Amazon Cognito user pools. Examples include mobile applications that use the iOS or Android SDK, or web applications that use client-side libraries like Amplify or the Amazon Cognito Identity SDK to integrate with Amazon Cognito.

You don’t need to use a proxy pattern with server-side applications that use an AWS SDK to integrate with Amazon Cognito user pools from a protected backend, because server-side applications can natively use confidential clients and protect the secret in the backend.

You can’t use this solution with applications that use Hosted UI and OAuth 2.0 endpoints to integrate with Amazon Cognito user pools. This includes federation scenarios where users sign in with an external identity provider (IdP).

Implementation and deployment details

Before you deploy this solution, you need a user pool and an application client that has the client secret. When you have these in place, choose the following Launch Stack button to launch a CloudFormation stack in your account and deploy the proxy solution.

Note: The CloudFormation stack must be created in the us-east-1 AWS Region, because Lambda@Edge functions and AWS WAF web ACLs for CloudFront must be created in that Region. The user pool itself can exist in any supported Region.

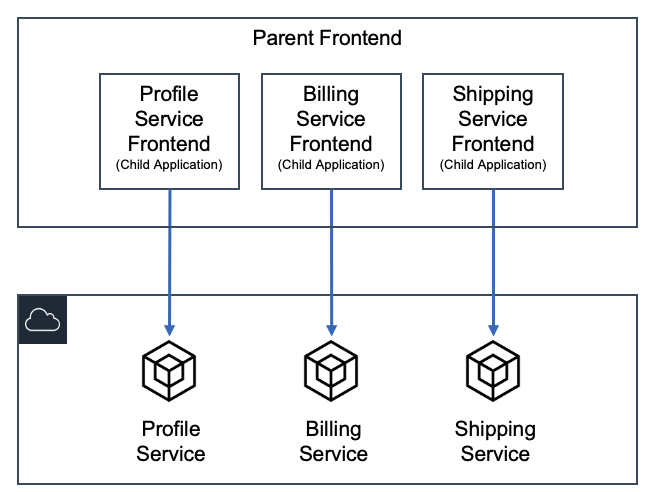

The template takes the parameters shown in Figure 2 below.

Figure 2: CloudFormation stack creation with initial parameters

The parameters in Figure 2 include:

- AdvancedSecurityEnabled is a flag that indicates whether advanced security is enabled in the user pool or not. This flag determines which version of the Lambda function is deployed. Notice that if you change this flag as part of a stack update, it overrides the function code, so if you have any manual changes, make sure to back up your changes.

- AppClientSecret is the secret for your application client. This secret is stored in Secrets Manager and accessed from Lambda@Edge as needed.

- LambdaS3BucketName is the bucket that hosts the Lambda code package. You don’t need to change this parameter unless you have a requirement to modify or extend the solution with your own Lambda function.

- RateLimit is the maximum number of calls from a single IP address that are allowed within a 5-minute period. Values between 100 requests and 20 million requests are valid for RateLimit.

- UserPoolId is the ID of your user pool. This value is used by Lambda@Edge when needed (for example, to call admin APIs, which require the user pool ID).

- UserPoolRegion is the AWS Region where you created your user pool. This value is used to determine which Amazon Cognito Regional endpoint to proxy the calls to.

Important: Provide a RateLimit value that is suitable for your application and security requirements.

This template creates several resources in your AWS account, as follows:

- A CloudFront distribution that serves as a proxy to an Amazon Cognito Regional endpoint.

- An AWS WAF web access control list (ACL) with rules for the allow list, deny list, and rate limit.

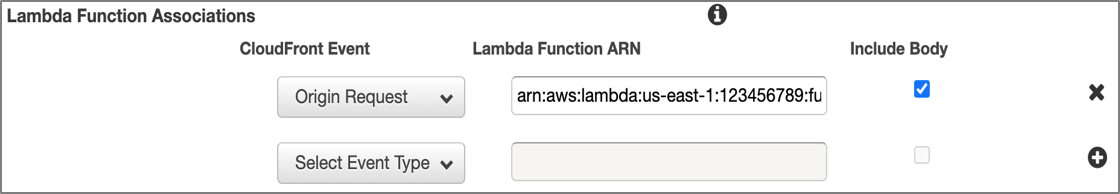

- A Lambda function to be deployed at the edge and assigned to the origin request event.

- A secret in Secrets Manager, to hold the values of the application client secret and user pool ID.

After you create the stack, the CloudFront distribution domain name is available on the Outputs tab of the stack in the CloudFormation console, as shown in Figure 3. This is the value that’s used as the Endpoint property in your client-side application. You can optionally add an alternative domain name to the CloudFront distribution if you prefer to use your own custom domain.

Figure 3: The output of the CloudFormation stack creation, displaying the CloudFront domain name

Use Lambda@Edge to add a secret hash to the request

As explained earlier, the purpose of having this proxy is to be able to inject the secret hash in unauthenticated API calls before passing them to the Amazon Cognito endpoint. This injection is achieved by a Lambda function that intercepts incoming requests at the edge (the CloudFront distribution) before passing them to the origin (the Amazon Cognito Regional endpoint).

The Lambda function that is deployed to the edge has two versions. One is a simple pass-through proxy that only adds the secret hash, and this version is used if Amazon Cognito advanced security isn’t enabled. The other version is a proxy that uses the AdminInitiateAuth and AdminRespondToAuthChallenge API operations instead of unauthenticated API operations for the user authentication and challenge response. This allows the proxy layer to propagate the client IP address to the Amazon Cognito endpoint, which guides the adaptive authentication features of advanced security. The version that is deployed by the stack is determined by the AdvancedSecurityEnabled flag when you create or update the CloudFormation stack.
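To make the pass-through version more concrete, here is a minimal sketch of an origin request handler in Node.js. It is not the function that the stack deploys. The names addSecretHash, makeHandler, and loadSecret are hypothetical, only a few operations are handled, and the sketch assumes that the CloudFront association is configured to include the request body, which Lambda@Edge delivers Base64-encoded.

```javascript
const { createHmac } = require("node:crypto");

// Base64(HMAC-SHA256(key = client secret, message = username + client ID))
function secretHash(username, clientId, clientSecret) {
  return createHmac("sha256", clientSecret).update(username + clientId).digest("base64");
}

// Operations in this sketch that carry the hash as a top-level SecretHash field.
const TOP_LEVEL_OPERATIONS = new Set(["SignUp", "ConfirmSignUp", "ForgotPassword"]);

// Adds the secret hash to a CloudFront origin-request object and returns it.
function addSecretHash(request, clientSecret) {
  const target = request.headers["x-amz-target"];
  if (!target || !request.body || !request.body.data) return request;

  // The header value looks like "AWSCognitoIdentityProviderService.SignUp".
  const operation = target[0].value.split(".").pop();
  const payload = JSON.parse(Buffer.from(request.body.data, "base64").toString("utf8"));

  if (TOP_LEVEL_OPERATIONS.has(operation)) {
    payload.SecretHash = secretHash(payload.Username, payload.ClientId, clientSecret);
  } else if (operation === "InitiateAuth" && payload.AuthParameters && payload.AuthParameters.USERNAME) {
    payload.AuthParameters.SECRET_HASH = secretHash(
      payload.AuthParameters.USERNAME, payload.ClientId, clientSecret);
  } else {
    return request; // other operations pass through unchanged in this sketch
  }

  // Tell CloudFront to forward the modified body instead of the original.
  request.body.action = "replace";
  request.body.encoding = "base64";
  request.body.data = Buffer.from(JSON.stringify(payload), "utf8").toString("base64");
  return request;
}

// loadSecret is a hypothetical async function, for example a Secrets Manager lookup.
function makeHandler(loadSecret) {
  let cachedSecret; // kept for the lifetime of the function instance
  return async (event) => {
    if (cachedSecret === undefined) cachedSecret = await loadSecret();
    return addSecretHash(event.Records[0].cf.request, cachedSecret);
  };
}
```

Keeping addSecretHash free of I/O makes it straightforward to unit test, while makeHandler holds the cached secret so that the secret store is queried only once per function instance.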

You can extend this solution by manually modifying the Lambda function with your own processing logic. For example, you can integrate with fraud detection or bot detection services to evaluate the request and decide to proceed or reject the call. Note that after making any change to the Lambda function code, you must deploy a new version to the edge location. To do that from the Lambda console, navigate to Actions, choose Deploy to Lambda@Edge, and then choose Use existing CloudFront trigger on this function.

Important: If you update the stack from CloudFormation and change the value of the AdvancedSecurityEnabled flag, the new value overrides the Lambda code with the default version for the choice. In that case, all manual changes are lost.

Allow or block requests

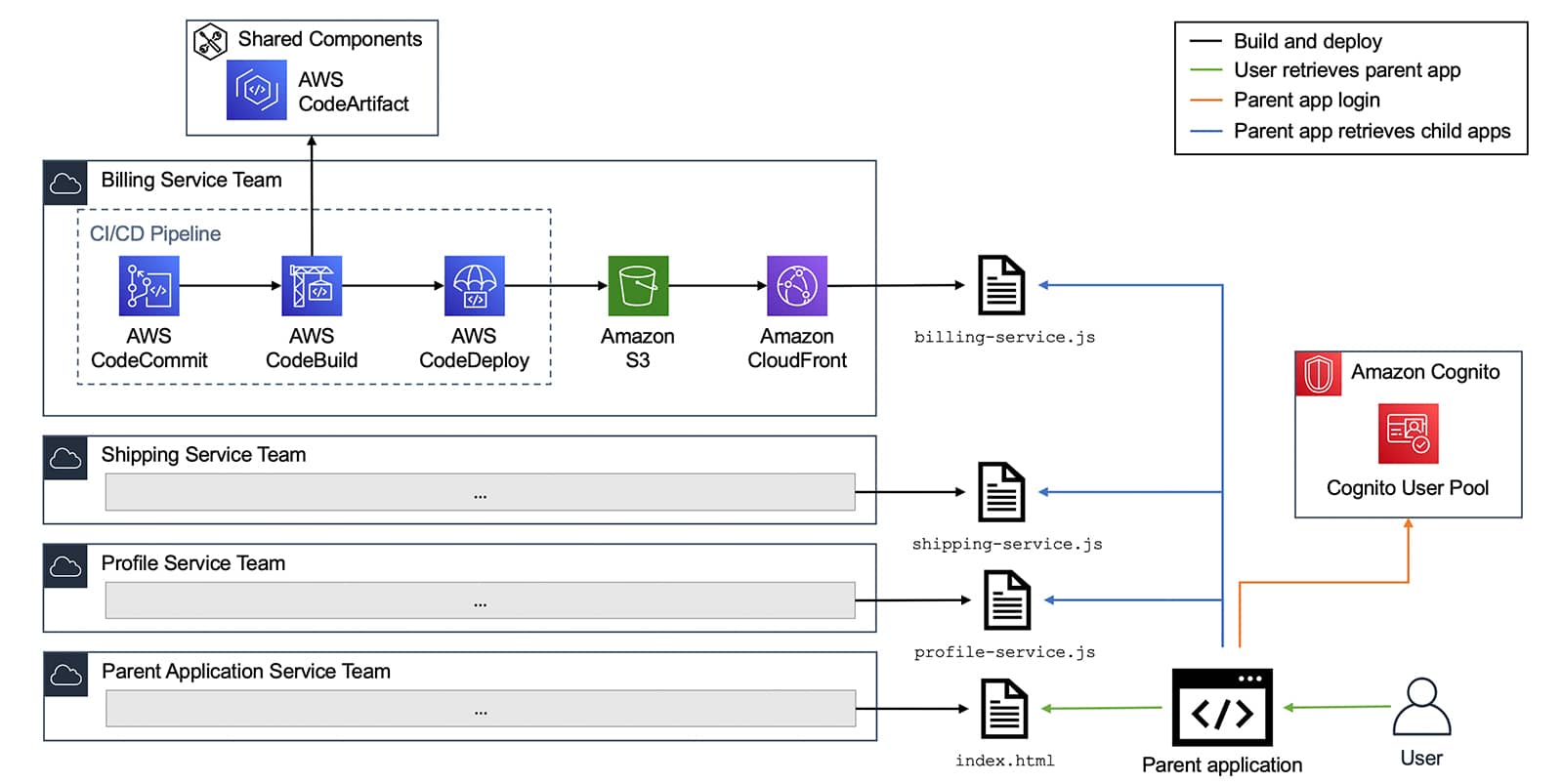

The template that is provided in this blog post creates a web ACL with three rules: AllowList, DenyList, and RateLimit. These rules are evaluated in order and determine which requests are allowed or blocked. The template also creates four IP sets, as shown in Figure 4, to hold the values of allowed or blocked IPs for both IPv4 and IPv6 address types.

Figure 4: The CloudFormation template creates IP sets in the AWS WAF console for allow and deny lists

If you want to always allow requests from certain clients, for example, trusted enterprise clients or server-side clients in cases where a large volume of requests is coming from the same IP address like a VPN gateway, add these IP addresses to the corresponding AllowList IP set. Similarly, if you want to always block traffic from certain IPs, add those IPs to the corresponding DenyList IP set.

Requests from sources that aren’t on the allow list or deny list are evaluated based on the volume of calls within 5 minutes, and sources that exceed the defined rate limit within 5 minutes are automatically blocked. If you want to change the defined rate limit, you can do so by updating the CloudFormation stack and providing a different value for the RateLimit parameter. Or you can modify this value directly in the AWS WAF console by editing the RateLimit rule.
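The evaluation order described above can be modeled in a few lines. This is only an illustrative model of the three rules, not how AWS WAF is implemented. The function name and the state object are hypothetical, and the sketch matches exact IP strings rather than CIDR ranges.

```javascript
// Illustrative model of the AllowList, DenyList, and RateLimit rules, evaluated in order.
function evaluateRequest(ip, state) {
  if (state.allowList.has(ip)) return "ALLOW"; // always allowed, regardless of volume
  if (state.denyList.has(ip)) return "BLOCK";  // always blocked
  const recentCalls = state.callsInLast5Minutes.get(ip) || 0;
  return recentCalls > state.rateLimit ? "BLOCK" : "ALLOW";
}

// Placeholder state; the addresses come from documentation-reserved ranges.
const state = {
  allowList: new Set(["203.0.113.10"]), // for example, a trusted VPN gateway
  denyList: new Set(["198.51.100.7"]),
  rateLimit: 100,
  callsInLast5Minutes: new Map([
    ["203.0.113.10", 5000],
    ["192.0.2.44", 250],
    ["192.0.2.45", 12],
  ]),
};

for (const ip of ["203.0.113.10", "198.51.100.7", "192.0.2.44", "192.0.2.45"]) {
  console.log(ip, evaluateRequest(ip, state));
}
```

Note that the allow-listed gateway is allowed even though its call volume is far above the limit, which is why the allow list should contain only sources that you fully trust.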

Note: You can also use AWS Managed Rules for AWS WAF to add additional protection according to your security needs.

Integrate the client application with the proxy

You can integrate the client application with the proxy by changing the Endpoint in your client application to use the CloudFront distribution domain name. The domain name is located in the Outputs section of the CloudFormation stack.

You then need to edit your client-side code to forward calls to Amazon Cognito through the proxy endpoint. For example, if you’re using the Identity SDK, set the endpoint property of your user pool configuration to the URL of the CloudFront distribution.
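For the Identity SDK, the pool configuration might look like the following sketch. The user pool ID, app client ID, and CloudFront domain name are placeholders, and the commented-out constructor call assumes that the amazon-cognito-identity-js package is available in your application.

```javascript
// Placeholder values; replace them with your own user pool ID, app client ID,
// and the CloudFront distribution domain name from the stack outputs.
const poolData = {
  UserPoolId: "us-east-1_EXAMPLE",
  ClientId: "exampleappclientid",
  endpoint: "https://d111111abcdef8.cloudfront.net",
};

// With amazon-cognito-identity-js installed, the configuration is used like this:
// const userPool = new AmazonCognitoIdentity.CognitoUserPool(poolData);
console.log(poolData.endpoint);
```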

If you’re using AWS Amplify, you can change the endpoint in the aws-exports.js file by overriding the property aws_cognito_endpoint. Or, if you configure Amplify Auth in your code, you can provide the endpoint in the Auth configuration.
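If you configure Amplify Auth in code, the configuration could resemble the following sketch. The property names follow the Auth configuration shape used by earlier major versions of the Amplify JavaScript library and should be treated as assumptions; check the documentation for the version you use. All values are placeholders.

```javascript
// Placeholder values; the property names are assumptions based on older
// Amplify JavaScript releases and may differ in newer versions.
const amplifyConfig = {
  Auth: {
    region: "us-east-1",
    userPoolId: "us-east-1_EXAMPLE",
    userPoolWebClientId: "exampleappclientid",
    endpoint: "https://d111111abcdef8.cloudfront.net",
  },
};

// With the Amplify library installed:
// Amplify.configure(amplifyConfig);
console.log(amplifyConfig.Auth.endpoint);
```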

If you have a mobile application that uses the Amplify mobile SDK, you can override the endpoint by adding an Endpoint value to the Amazon Cognito user pool section of your configuration file (don’t include the AppClientSecret parameter in your configuration). Note that the Endpoint value contains the domain name only, not the full URL. This feature is available in the latest releases of the iOS and Android SDKs.
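The mobile configuration file is JSON. To stay in one language, the sketch below builds the relevant section as a JavaScript object and prints it as JSON. The key names other than Endpoint and AppClientSecret are assumptions based on the usual layout of awsconfiguration.json, and all values are placeholders.

```javascript
// Sketch of the user pool section of awsconfiguration.json.
// Key names other than Endpoint are assumptions; values are placeholders.
const mobileConfigSection = {
  CognitoUserPool: {
    Default: {
      PoolId: "us-east-1_EXAMPLE",
      AppClientId: "exampleappclientid",
      Region: "us-east-1",
      // Domain name only, without the https:// prefix. No AppClientSecret entry.
      Endpoint: "d111111abcdef8.cloudfront.net",
    },
  },
};

console.log(JSON.stringify(mobileConfigSection, null, 2));
```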

Warning: The Amplify CLI overwrites customizations to the awsconfiguration.json and amplifyconfiguration.json files if you do an amplify push or amplify pull operation. You must manually re-apply the Endpoint customization and remove the AppClientSecret if you use the CLI to modify your cloud backend.

Solution limitations

This solution has these limitations:

- If advanced security features are enabled for the user pool, Amazon Cognito calculates risk for user events. If you use this proxy pattern, the IP address that is propagated in user events is the proxy IP address, which causes risk calculation for SignUp, ForgotPassword, and ResendCode events to be inaccurate. On the other hand, Sign-In events still have the client IP address propagated correctly, and risk calculation and adaptive authentication for Sign-In events aren’t affected by the use of this proxy.

- This solution is not applicable to Hosted UI, OAuth 2.0 endpoints, and federation flows.

- Authenticated and admin API operations (which require developer credentials or an access token) aren’t covered in this solution. These API operations don’t require a secret hash, and they use other authentication mechanisms.

- Using this proxy solution with mobile apps requires an update to the application. The update might take time to be available in the relevant app store, and you must depend on end users to update their app. Plan ahead of time to use the solution with mobile apps.

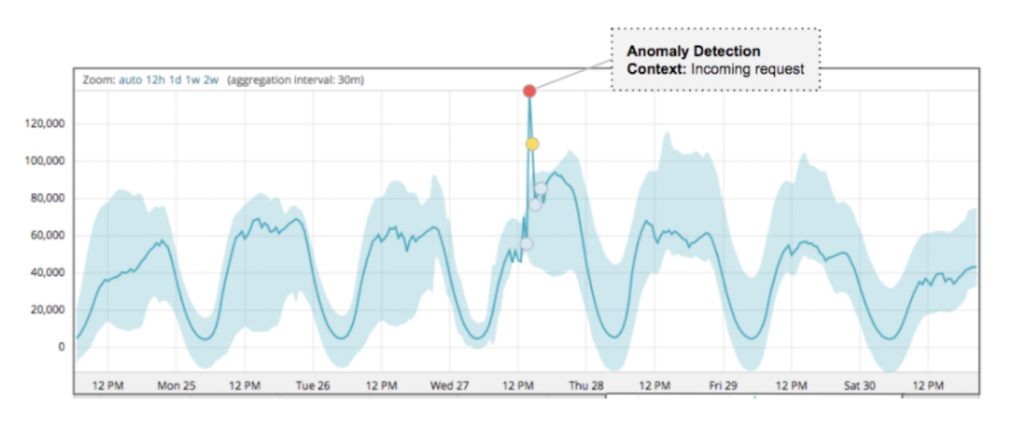

How to detect unusual behavior

In this section, I share with you the steps to detect, quickly analyze, and respond to unwanted clients. It’s a best practice to configure monitoring and alarms that help you to detect unexpected spikes in activity. Additionally, I show you how to be ready to quickly identify clients that are calling your resources at a higher-than-usual rate.

Monitor utilization compared to quotas

Amazon Cognito integrates with Service Quotas, which monitors service utilization compared to quotas. These metrics help you detect unexpected spikes and be alerted if you’re approaching your quota for a certain API category. Approaching your quota indicates that there is a risk that calls from legitimate users will be throttled.

To view utilization versus quota metrics

- In the Service Quotas console, choose Service Quotas, choose AWS Services, and then choose Amazon Cognito User Pools.

- Under Service quotas, enter the search term rate of. This shows you the list of API categories and the assigned quotas for each category.

Figure 5: The Service Quotas console showing Amazon Cognito API category rate quotas

- Choose any of the API categories to see utilization versus quota metrics.

Figure 6: The Service Quotas console showing utilization vs quota metrics for Amazon Cognito UserCreation APIs

- You can also create alarms from this page to alert you if utilization is above a pre-defined threshold. You can create alarms starting at 50 percent utilization. It’s recommended that you create multiple alarms, for example at the 50 percent, 70 percent, and 90 percent thresholds, and configure CloudWatch alarms as appropriate.

Figure 7: Creating an alarm for the utilization of the UserCreation API category

Analyze CloudTrail logs with Athena

If you detect an unexpected spike in traffic to a certain API category, the next step is to identify the sources of this spike. You can do that by using CloudTrail logs or, after you deploy and use this proxy solution, CloudFront logs as sources of information. You can then analyze these logs by using Amazon Athena queries.

The first step is to create Athena tables from CloudTrail and CloudFront logs. You can do that by following these steps for CloudTrail and similar steps for CloudFront. After you have these tables created, you can create a set of queries that help you identify unwanted clients. Here are a couple of examples:

- Identify the clients with the highest call rate to the InitiateAuth API operation within the timeframe in which you noticed the spike, by grouping on the source IP address and filtering the eventtime value to match the attack window.

- Identify the clients that come through CloudFront with the highest error rate.
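The two queries described above might look like the following sketch, written as small JavaScript helpers that return the SQL text to paste into the Athena query editor. The table names cloudtrail_logs and cloudfront_logs, and the columns sourceipaddress, eventname, eventtime, request_ip, and status, are assumptions based on the example table definitions in the Athena documentation; adjust them to match your own tables. The time window values are placeholders.

```javascript
// Top callers of InitiateAuth within a time window, from CloudTrail logs.
// eventtime is assumed to be an ISO 8601 string, so string comparison works.
function topInitiateAuthCallers(table, startTime, endTime, limit = 10) {
  return [
    "SELECT sourceipaddress, COUNT(*) AS call_count",
    `FROM ${table}`,
    "WHERE eventsource = 'cognito-idp.amazonaws.com'",
    "  AND eventname = 'InitiateAuth'",
    `  AND eventtime >= '${startTime}'`,
    `  AND eventtime < '${endTime}'`,
    "GROUP BY sourceipaddress",
    "ORDER BY call_count DESC",
    `LIMIT ${limit}`,
  ].join("\n");
}

// Clients with the most error responses (HTTP status 400 and above), from CloudFront logs.
function topErrorClients(table, limit = 10) {
  return [
    "SELECT request_ip, COUNT(*) AS error_count",
    `FROM ${table}`,
    "WHERE status >= 400",
    "GROUP BY request_ip",
    "ORDER BY error_count DESC",
    `LIMIT ${limit}`,
  ].join("\n");
}

// Placeholder window; change it to reflect the attack window you observed.
console.log(topInitiateAuthCallers("cloudtrail_logs", "2022-01-25T12:00:00Z", "2022-01-25T13:00:00Z"));
console.log(topErrorClients("cloudfront_logs"));
```

The helpers interpolate their arguments directly into the SQL text, so they are meant for values typed by an operator, not for untrusted input.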

After you identify sources that are calling your service with a higher-than-usual rate, you can block these clients by adding them to the DenyList IP set that was created in AWS WAF.

Analyze CloudTrail events with CloudWatch Logs Insights

It’s a best practice to configure your trail to send events to CloudWatch Logs. After you do this, you can interactively search and analyze your Amazon Cognito CloudTrail events with CloudWatch Logs Insights to identify errors, unusual activity, or unusual user behavior in your account.
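As a starting point, a CloudWatch Logs Insights query along the following lines could surface the busiest callers. The sketch keeps the query in a JavaScript helper so that the limit can be varied; the helper name is hypothetical, and the field names assume the standard CloudTrail event fields (eventSource, eventName, sourceIPAddress) as they appear in CloudWatch Logs.

```javascript
// Builds a Logs Insights query that counts Amazon Cognito calls per source IP and operation.
function cognitoCallsBySource(limit = 20) {
  return [
    'filter eventSource = "cognito-idp.amazonaws.com"',
    "| stats count(*) as calls by sourceIPAddress, eventName",
    "| sort calls desc",
    `| limit ${limit}`,
  ].join("\n");
}

console.log(cognitoCallsBySource());
```

You can paste the printed query into the Logs Insights console after selecting the log group that receives your trail’s events.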

Conclusion

In this post, I showed you how to implement a lightweight proxy to an Amazon Cognito endpoint, which can be used with an application client secret to control access to unauthenticated API operations. This approach, together with security tools such as AWS WAF, helps provide protection for these API operations from unwanted clients. I also showed you strategies to help detect an ongoing attack and quickly analyze, identify, and block unwanted clients.

For more strategies for DDoS mitigation, see the AWS Best Practices for DDoS Resiliency.
